The Hom Group

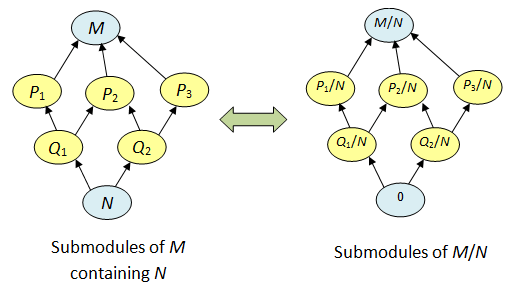

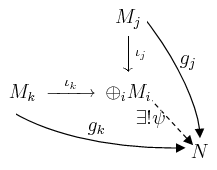

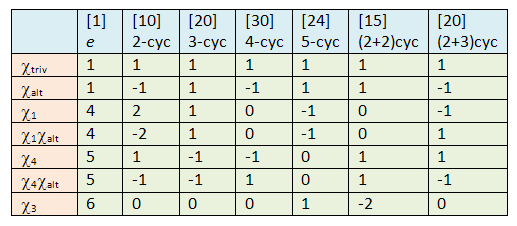

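Continuing from the previous installment, here’s another way of writing the universal properties for direct sums and products. Let Hom(M, N) be the set of all module homomorphisms M → N; then:

$$\mathrm{Hom}\Big(\bigoplus_i M_i,\ N\Big) \cong \prod_i \mathrm{Hom}(M_i, N), \qquad \mathrm{Hom}\Big(N,\ \prod_i M_i\Big) \cong \prod_i \mathrm{Hom}(N, M_i) \qquad (*)$$

for any R-module N. Here the bijections are given by $f \mapsto (f \circ \iota_i)_i$ and $f \mapsto (\pi_i \circ f)_i$ respectively, where $\iota_i$ and $\pi_i$ are the canonical inclusions and projections.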

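In the case where there are finitely many summands, the direct product and direct sum are identical, so combining both parts of (*) gives:

$$\mathrm{Hom}\Big(\bigoplus_{j=1}^n M_j,\ \bigoplus_{i=1}^m N_i\Big) \cong \prod_{i=1}^m \prod_{j=1}^n \mathrm{Hom}(M_j, N_i). \qquad (**)$$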

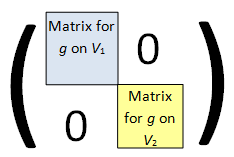

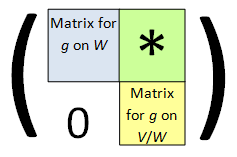

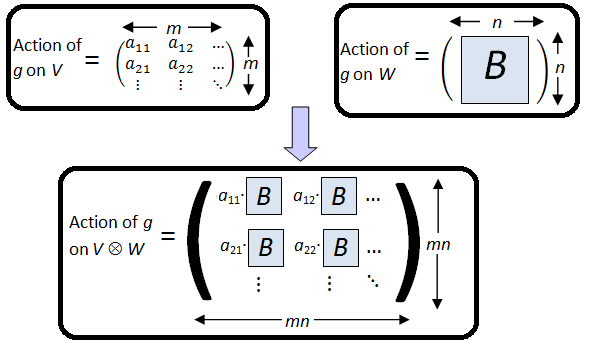

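This correspondence is extremely important. One can write it in matrix form: a homomorphism $f : M_1 \oplus \dots \oplus M_n \to N_1 \oplus \dots \oplus N_m$ can be broken up as follows

$$f = \begin{pmatrix} f_{11} & \cdots & f_{1n} \\ \vdots & \ddots & \vdots \\ f_{m1} & \cdots & f_{mn} \end{pmatrix}, \qquad f\begin{pmatrix} x_1 \\ \vdots \\ x_n \end{pmatrix} = \begin{pmatrix} \sum_j f_{1j}(x_j) \\ \vdots \\ \sum_j f_{mj}(x_j) \end{pmatrix},$$

where $f_{ij} = \pi_i \circ f \circ \iota_j : M_j \to N_i$, and $\iota_j : M_j \to \bigoplus_k M_k$ and $\pi_i : \bigoplus_k N_k \to N_i$ are the canonical inclusion and projection.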
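To make the matrix form concrete, here’s a minimal Python sketch in which a homomorphism between direct sums is assembled from its components $f_{ij}$, and each component is recovered as $\pi_i \circ f \circ \iota_j$. It assumes every $M_j$ and $N_i$ is Z, with homomorphisms modelled as plain Python functions on integers; `assemble` and `component` are hypothetical helper names.

```python
from typing import Callable, List, Sequence

# A homomorphism Z -> Z, modelled as a plain Python function on integers.
Hom = Callable[[int], int]


def assemble(components: List[List[Hom]]) -> Callable[[Sequence[int]], List[int]]:
    """Build f : Z^n -> Z^m from its m x n matrix of components f_ij : M_j -> N_i."""
    def f(x: Sequence[int]) -> List[int]:
        # The i-th output coordinate is f_i1(x_1) + ... + f_in(x_n).
        return [sum(f_ij(x_j) for f_ij, x_j in zip(row, x)) for row in components]
    return f


def component(f: Callable[[Sequence[int]], List[int]], i: int, j: int, n: int) -> Hom:
    """Recover f_ij = pi_i . f . iota_j, where n is the number of summands of the domain."""
    def f_ij(x: int) -> int:
        iota_j_x = [x if k == j else 0 for k in range(n)]  # canonical inclusion of M_j
        return f(iota_j_x)[i]                              # canonical projection onto N_i
    return f_ij


# A map Z^2 -> Z^3 given by a 3 x 2 matrix of homomorphisms.
f = assemble([[lambda x: 2 * x, lambda x: -x],
              [lambda x: 0, lambda x: 3 * x],
              [lambda x: x, lambda x: x]])
print(f([1, 4]))                 # [-2, 12, 5]
print(component(f, 0, 1, 2)(5))  # -5
```

Passing from `f` to `component` and back is exactly the bijection (**) for this special case.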

In fact, there’s more to the correspondence (**) than a mere bijection of sets:

Proposition. The set Hom(M, N) forms an abelian group: if $f, g : M \to N$ are module homomorphisms, then we define

$$(f + g)(x) := f(x) + g(x), \qquad (-f)(x) := -f(x), \qquad x \in M.$$

The identity is the zero map f(x) = 0 for all x; it is also denoted by 0.

Since the proof is straightforward, we’ll leave it as an exercise. One also checks easily that the bijections in (*) and (**) respect addition, so they are isomorphisms of abelian groups.

Free Modules

First we define:

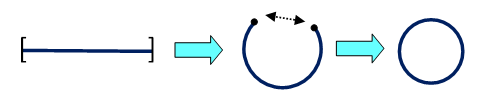

Definition. Let I be an index set. The free module on I is the direct sum of copies of R, indexed by elements of I:

$$R^{(I)} := \bigoplus_{i \in I} R.$$

In contrast, the direct product of copies of R is given by

$$R^I := \prod_{i \in I} R.$$

You might wonder why we’re interested in the direct sum and not the product; it’s because of its universal property.

From the correspondence in (*), we obtain:

$$\mathrm{Hom}(R^{(I)}, M) \cong \prod_{i \in I} \mathrm{Hom}(R, M).$$

On the other hand, it’s easy to see that Hom(R, M) can be identified with M itself. Indeed, if f : R → M is a module homomorphism, then it’s determined uniquely by the image f(1), since f(r) = f(r·1) = r·f(1) for any $r \in R$.

Conversely, any element $m \in M$ corresponds to the homomorphism

$$f_m : R \to M, \qquad r \mapsto rm,$$

which satisfies $f_m(1) = m$. Thus we get a natural group isomorphism between Hom(R, M) and M.

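In fact, we can say this is an isomorphism of R-modules, if we define an R-module structure on Hom(R, M) by letting $r \in R$ act on f via

$$(r \cdot f)(x) := f(xr), \qquad x \in R.$$

Note that $r \cdot f$ is again a module homomorphism, since $(r \cdot f)(sx) = f(sxr) = s\,f(xr)$ for $s \in R$. To check that this makes sense, $r \cdot f_m$ (where $f_m(x) = xm$) takes $r'$ to

$$f_m(r'r) = r'rm,$$

which is the image of $f_{rm}$ acting on $r'$. Hence $r \cdot f_m = f_{rm}$, so $m \mapsto f_m$ is R-linear.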
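Because R need not be commutative, the order of multiplication in $(r \cdot f)(x) = f(xr)$ matters. Here’s a minimal Python sketch checking $r \cdot f_m = f_{rm}$, where $f_m(x) = xm$, assuming the example ring R = 2 × 2 integer matrices acting on M = Z² (column vectors) by matrix multiplication; all helper names are hypothetical.

```python
from typing import Callable, Tuple

Mat = Tuple[Tuple[int, int], Tuple[int, int]]  # element of the example ring R = M_2(Z)
Vec = Tuple[int, int]                          # element of the example module M = Z^2 (columns)


def mat_mul(a: Mat, b: Mat) -> Mat:
    """Ring multiplication in R: the 2 x 2 matrix product a b."""
    return tuple(tuple(sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2))
                 for i in range(2))


def act(r: Mat, m: Vec) -> Vec:
    """Left R-action on M: matrix times column vector."""
    return tuple(sum(r[i][k] * m[k] for k in range(2)) for i in range(2))


def f_of(m: Vec) -> Callable[[Mat], Vec]:
    """The homomorphism f_m : R -> M, x |-> x m."""
    return lambda x: act(x, m)


def scale(r: Mat, f: Callable[[Mat], Vec]) -> Callable[[Mat], Vec]:
    """The R-action on Hom(R, M) from the text: (r . f)(x) = f(x r)."""
    return lambda x: f(mat_mul(x, r))


ONE: Mat = ((1, 0), (0, 1))
r, m, x = ((1, 2), (3, 4)), (5, 6), ((0, 1), (1, 1))
print(f_of(m)(ONE))            # (5, 6): f_m(1) = m
print(scale(r, f_of(m))(x))    # (39, 56)
print(f_of(act(r, m))(x))      # (39, 56): r . f_m agrees with f_{rm}
print(f_of(m)(mat_mul(r, x)))  # (28, 62): the naive rule f(r x) gives something else
```

The last line shows that the seemingly simpler rule $(r \cdot f)(x) = f(rx)$ would not reproduce $f_{rm}$ for this non-commutative R.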

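Thus, we get:

$$\mathrm{Hom}(R^{(I)}, M) \cong \prod_{i \in I} M = M^I,$$

where the RHS is a direct product. Thus, the free module satisfies the following universal property:

Universal Property of Free Modules. There is a 1-1 correspondence between module homomorphisms $f : R^{(I)} \to M$ and elements of the direct product $M^I = \prod_{i \in I} M$, given by $f \mapsto (f(\iota_i(1)))_{i \in I}$, where $\iota_i : R \to R^{(I)}$ is the inclusion of the i-th copy of R.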
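The correspondence can be sketched in Python, assuming R = Z and M = Z² (pairs of integers), and storing an element of the free module on I as a dict of its finitely many non-zero coordinates. The index set I may be infinite (the natural numbers below), since the family $(m_i)$ is just a function of i. The name `induced_hom` is hypothetical.

```python
from typing import Callable, Dict, Hashable, Tuple

Vec = Tuple[int, int]  # elements of the example module M = Z^2


def induced_hom(family: Callable[[Hashable], Vec]) -> Callable[[Dict[Hashable, int]], Vec]:
    """Given (m_i) in M^I, return the homomorphism R^(I) -> M, (r_i) |-> sum_i r_i m_i."""
    def f(r: Dict[Hashable, int]) -> Vec:
        total = (0, 0)
        # Only the finitely many non-zero coordinates are stored, so this sum is finite.
        for i, r_i in r.items():
            m_i = family(i)
            total = (total[0] + r_i * m_i[0], total[1] + r_i * m_i[1])
        return total
    return f


# I = natural numbers (infinite); the family is m_i = (i, 1).
f = induced_hom(lambda i: (i, 1))
print(f({0: 2, 3: -1, 10: 5}))  # (47, 6)
print(f({7: 1}))                # (7, 1): f sends 1 in the 7th copy of R to m_7
```

The dict representation only works because elements of the direct sum have finite support; an element of the direct product $\prod_{i \in I} R$ may have infinitely many non-zero coordinates, and then $\sum_i r_i m_i$ is undefined.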

Rank of Free Modules

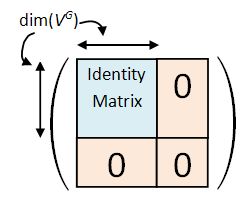

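Finite free modules (i.e. free modules where I is finite) are particularly nice since the linear maps between them are represented by matrices: from (**),

$$\mathrm{Hom}(R^n, R^m) \cong \prod_{i=1}^m \prod_{j=1}^n \mathrm{Hom}(R, R) \cong \prod_{i=1}^m \prod_{j=1}^n R,$$

so each linear map $R^n \to R^m$ can be written as an m × n matrix.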
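Over a commutative ring such as Z, the matrix description, together with the composition rule stated in the next paragraph, can be checked numerically. A sketch assuming R = Z and column vectors; for non-commutative R the order of the entries in each product needs more care, so that case is avoided here. `apply` and `matmul` are hypothetical helper names.

```python
from typing import List

Matrix = List[List[int]]


def apply(a: Matrix, x: List[int]) -> List[int]:
    """Apply the linear map Z^n -> Z^m given by the m x n matrix a to the column vector x."""
    return [sum(a_ij * x_j for a_ij, x_j in zip(row, x)) for row in a]


def matmul(b: Matrix, a: Matrix) -> Matrix:
    """Product of a k x m matrix b with an m x n matrix a."""
    m, n = len(a), len(a[0])
    return [[sum(b[i][t] * a[t][j] for t in range(m)) for j in range(n)]
            for i in range(len(b))]


a = [[1, 2, 0], [0, 1, -1]]            # Z^3 -> Z^2 (2 x 3)
b = [[1, 1], [2, 0], [0, 3], [-1, 4]]  # Z^2 -> Z^4 (4 x 2)
x = [3, -2, 5]
print(apply(b, apply(a, x)))   # [-8, -2, -21, -27]
print(apply(matmul(b, a), x))  # [-8, -2, -21, -27]: composition = matrix product
```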

Composition of linear maps $R^n \to R^m$ and $R^m \to R^k$ then corresponds to the product of a k × m matrix and an m × n matrix. Before we proceed though, we’d like to ask a more fundamental question:

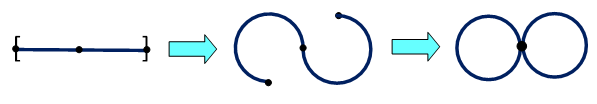

Question. If $M \cong R^{(I)}$, then we call #I the rank of M. Is the rank well-defined? E.g. is it possible for $R^m \cong R^n$ with $m \neq n$ to occur as R-modules?

This turns out to be a rather difficult problem, since the answer is (no, yes) when phrased in the most general setting. In other words, there exist rings R for which $R \cong R^2$ (and hence $R \cong R^n$ for all n > 0) as R-modules! Generally, rings for which $R^m \cong R^n$ implies m = n are said to satisfy the Invariant Basis Number (IBN) property. There has been much study done on this, but for now we’ll content ourselves with the following special cases:

- Division rings (and hence fields) satisfy IBN.

- Non-trivial commutative rings satisfy IBN.

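The second case follows from the first if we’re allowed to use a standard result in commutative algebra: every non-trivial commutative ring R (i.e. with 1 ≠ 0) has a maximal ideal I. Assuming this, suppose $\phi : R^m \to R^n$ is an isomorphism. Since φ and its inverse are R-linear, φ maps $IR^m = I \oplus \dots \oplus I$ onto $IR^n$, so taking the quotient modules gives the isomorphism

$$(R/I)^m \cong R^m / IR^m \xrightarrow{\ \bar\phi\ } R^n / IR^n \cong (R/I)^n,$$

where $\bar\phi$ is in fact an isomorphism of (R/I)-modules, because I annihilates both quotients. Since R/I is a field, the first case tells us m = n.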
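A small numerical illustration of this reduction, assuming R = Z and the maximal ideal I = pZ for a prime p: an isomorphism Z² → Z² is an integer matrix with an integer inverse, and reducing both entrywise mod p gives mutually inverse matrices over the field Z/p, i.e. the induced isomorphism of (Z/p)-modules. The matrices and helper names are hypothetical examples.

```python
from typing import List

Matrix = List[List[int]]


def reduce_mod(a: Matrix, p: int) -> Matrix:
    """Reduce an integer matrix entrywise mod p, i.e. pass from Z to R/I = Z/p."""
    return [[entry % p for entry in row] for row in a]


def matmul_mod(a: Matrix, b: Matrix, p: int) -> Matrix:
    """Product of square matrices over Z/p."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) % p for j in range(n)]
            for i in range(n)]


phi = [[2, 1], [1, 1]]        # an isomorphism Z^2 -> Z^2 ...
phi_inv = [[1, -1], [-1, 2]]  # ... with integer inverse
for p in (2, 3, 5, 7):
    bar_phi, bar_phi_inv = reduce_mod(phi, p), reduce_mod(phi_inv, p)
    # The reductions are mutually inverse over the field Z/p.
    print(p, matmul_mod(bar_phi, bar_phi_inv, p), matmul_mod(bar_phi_inv, bar_phi, p))
```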

The case of division rings will be proven later.

Basis of Free Module

Free modules are probably the most well-behaved types of modules since many of the results in standard linear algebra carry over, e.g. the presence of a basis.

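Definition. A subset S of a module M is said to be linearly independent if whenever distinct elements $m_1, \dots, m_n \in S$ and $r_1, \dots, r_n \in R$ satisfy

$$r_1 m_1 + r_2 m_2 + \dots + r_n m_n = 0,$$

we have $r_1 = r_2 = \dots = r_n = 0$.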

Definition. A subset S of M is said to be a basis if it is linearly independent and generates the module M.

Clearly, a subset of a linearly independent set is linearly independent as well. On the other hand, a superset of a generating set also generates the module. Thus, a basis strikes a fine balance between the two conditions.
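Over a field such as Q (in particular a division ring), both conditions can be tested mechanically: a finite list of distinct vectors in Q^n is linearly independent iff its rank equals its length, and it generates Q^n iff its rank is n. A sketch using exact Gaussian elimination with Python’s `fractions.Fraction`; the rank test relies on dividing by pivots, so it does not carry over to general rings such as Z. The function names are hypothetical.

```python
from fractions import Fraction
from typing import List, Sequence


def rank(vectors: Sequence[Sequence[int]]) -> int:
    """Rank of a list of vectors in Q^n, by Gaussian elimination with exact fractions."""
    rows: List[List[Fraction]] = [[Fraction(x) for x in v] for v in vectors]
    r = 0
    for c in range(len(rows[0]) if rows else 0):
        # Find a row at or below position r with a non-zero entry in column c.
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c] / rows[r][c]  # division is fine since Q is a field
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
    return r


def is_independent(vectors: Sequence[Sequence[int]]) -> bool:
    """A list of distinct vectors is linearly independent iff its rank equals its length."""
    return rank(vectors) == len(vectors)


def is_basis(vectors: Sequence[Sequence[int]], n: int) -> bool:
    """A basis of Q^n is linearly independent and generates Q^n (i.e. has rank n)."""
    return is_independent(vectors) and rank(vectors) == n


print(is_independent([[1, 2], [2, 4]]))       # False
print(is_basis([[1, 1], [1, -1]], 2))         # True
print(is_basis([[1, 0, 0], [0, 1, 0]], 3))    # False: independent, not generating
print(is_basis([[1, 0], [0, 1], [1, 1]], 2))  # False: generating, not independent
```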

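A free module $R^{(I)}$ has a standard basis $\{e_i : i \in I\}$, which is indexed by I. Let $e_i \in R^{(I)}$ be the element whose i-th coordinate is 1 and all of whose other coordinates are 0:

$$e_i = (\dots, 0, 0, 1, 0, 0, \dots).$$

For example, if I = {1, 2, 3}, the standard basis is given by

$$e_1 = (1, 0, 0), \qquad e_2 = (0, 1, 0), \qquad e_3 = (0, 0, 1),$$

which is hardly surprising if you’ve done linear algebra before.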

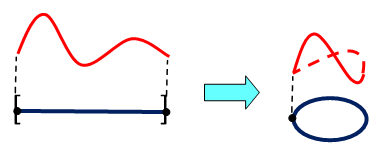

Conversely, any module with a basis is free.

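Proposition. If $S = \{m_i : i \in I\} \subseteq M$ is a basis, with elements indexed by I, then there’s an isomorphism

$$\phi : R^{(I)} \to M, \qquad (r_i)_{i \in I} \mapsto \sum_{i \in I} r_i m_i.$$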

Sketch of Proof.

First note that the RHS sum is well-defined since only finitely many $r_i$ are non-zero. The map is also clearly R-linear. The fact that it’s surjective is precisely the condition that the $m_i$’s generate M. Also it’s injective if and only if its kernel is 0, which is exactly the condition that S is linearly independent. ♦

In conclusion:

Corollary. An R-module is free if and only if it has a basis.
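For a concrete instance of the proposition, take M = Q² with the hypothetical basis $m_1 = (1, 1)$, $m_2 = (1, -1)$. The sketch below implements the map $(r_1, r_2) \mapsto r_1 m_1 + r_2 m_2$ and its inverse via Cramer’s rule; the inverse exists exactly when $m_1, m_2$ are linearly independent, in line with the injectivity argument above. The function names are hypothetical.

```python
from fractions import Fraction
from typing import Tuple

Pair = Tuple[Fraction, Fraction]  # an element of Q^2


def phi(r: Pair, m1: Pair, m2: Pair) -> Pair:
    """phi : Q^(I) -> Q^2 for I = {1, 2}: (r1, r2) |-> r1*m1 + r2*m2."""
    return (r[0] * m1[0] + r[1] * m2[0], r[0] * m1[1] + r[1] * m2[1])


def phi_inverse(v: Pair, m1: Pair, m2: Pair) -> Pair:
    """Coordinates of v with respect to {m1, m2}, via Cramer's rule."""
    det = Fraction(m1[0] * m2[1] - m2[0] * m1[1])
    if det == 0:
        # m1, m2 linearly dependent: phi is neither injective nor surjective.
        raise ValueError("m1, m2 do not form a basis")
    return ((v[0] * m2[1] - m2[0] * v[1]) / det, (m1[0] * v[1] - v[0] * m1[1]) / det)


m1, m2 = (Fraction(1), Fraction(1)), (Fraction(1), Fraction(-1))
v = (Fraction(3), Fraction(5))
coords = phi_inverse(v, m1, m2)
print(coords)                    # (Fraction(4, 1), Fraction(-1, 1))
print(phi(coords, m1, m2) == v)  # True
```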

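Clearly, not all R-modules have a basis. This is amply clear even for the case R = Z, since the finite Z-module (i.e. abelian group) Z/2 has no basis: the empty set generates only 0, while any non-empty subset is linearly dependent because 2·x = 0 for every x. On the other hand, for R = Z, every submodule of a free module is free. This does not hold for a general ring, e.g. for $R = \mathbb{R}[x, y]$, the ring of polynomials in x, y with real coefficients, the ideal $\langle x, y \rangle$ is a submodule which is not free: any two elements f, g are linearly dependent since $g \cdot f - f \cdot g = 0$, while $\langle x, y \rangle$ cannot be generated by a single element.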

Finally, the astute reader who has had any exposure to linear algebra would not be surprised to see the following.

Theorem. Every module over a division ring has a basis, and the cardinality of the basis (i.e. the rank of the module) is well-defined.

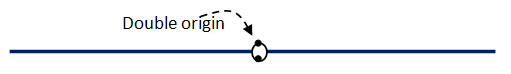

Question.

Let $\{e_i : i \in I\}$ be the standard basis for the free module $R^{(I)}$. Why is it not a basis for the direct product $R^I$ when I is infinite?

Linear Algebra Over Division Rings

Let R = D be a division ring for the rest of this article. A D-module will henceforth be known as a vector space over D, in accordance with linear algebra.

Theorem (Existence of Basis). Every vector space over D has a basis. Specifically, if $S \subseteq T \subseteq M$ are subsets of a vector space M such that S is linearly independent and T is a generating set, then there’s a basis B such that

$$S \subseteq B \subseteq T.$$

[ In particular, any linearly independent subset can be extended to a basis and any generating set has a subset which is a basis. ]

The gist of the proof is to keep adding elements of T to S while keeping it linearly independent, until one can’t add any more. This will result in a basis.

Proof.

First, establish the groundwork for Zorn’s lemma.

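- Let Σ be the class of all linearly independent sets U with $S \subseteq U \subseteq T$. Now Σ is not empty since $S \in \Sigma$ at least.

- Partially order Σ by inclusion.

- If $\{U_a\}$ is a non-empty chain (i.e. for any a, b we have $U_a \subseteq U_b$ or $U_b \subseteq U_a$), then the union $U = \bigcup_a U_a$ is also linearly independent and $S \subseteq U \subseteq T$.

  - Indeed, U is linearly independent because any linear dependency $r_1 u_1 + \dots + r_n u_n = 0$ would involve only finitely many terms $u_1, \dots, u_n \in U$; since the $U_a$ form a chain, all these terms would come from a single $U_a$, thus violating its linear independence.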


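Hence, Zorn’s lemma tells us there’s a maximal element U of Σ, i.e. a maximal linearly independent U among all sets with $S \subseteq U \subseteq T$. We claim U generates M: if not, then ⟨U⟩ doesn’t contain T, for if it did, it would also contain ⟨T⟩ = M. Thus, we can pick $t \in T - \langle U \rangle$ and let

$$U' = U \cup \{t\}.$$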

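We claim $U'$ is linearly independent: indeed, suppose

$$r t + r_1 u_1 + \dots + r_n u_n = 0$$

for $r, r_1, \dots, r_n \in D$, not all zero, and distinct $u_1, \dots, u_n \in U$. Then r ≠ 0 since U is linearly independent, and thus we can write:

$$t = -r^{-1} r_1 u_1 - \dots - r^{-1} r_n u_n \in \langle U \rangle,$$

which is a contradiction. Hence, $U'$ is a linearly independent set strictly containing U with $S \subseteq U' \subseteq T$, which violates the maximality of U. Conclusion: U is a basis with $S \subseteq U \subseteq T$. ♦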
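In finite dimensions, the Zorn’s lemma argument collapses to a greedy loop: walk through T and add each element lying outside the span of what has been collected so far. A sketch over Q, assuming finite S and T given as integer vectors with S linearly independent; membership in the span is tested by a rank computation, and `extend_to_basis` is a hypothetical name.

```python
from fractions import Fraction
from typing import List, Sequence

Vector = List[int]


def rank(vectors: Sequence[Sequence[int]]) -> int:
    """Rank over Q by Gaussian elimination with exact fractions."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    r = 0
    for c in range(len(rows[0]) if rows else 0):
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c] / rows[r][c]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
    return r


def extend_to_basis(S: List[Vector], T: List[Vector]) -> List[Vector]:
    """Given a linearly independent S and a generating set T containing S, return a
    basis B with S ⊆ B ⊆ T by greedily adding elements of T that keep B independent."""
    B = list(S)
    for t in T:
        # t lies outside the span of B exactly when adding it raises the rank.
        if rank(B + [t]) == len(B) + 1:
            B.append(t)
    return B


S = [[1, 1, 0]]
T = [[1, 1, 0], [1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 1]]
print(extend_to_basis(S, T))  # [[1, 1, 0], [1, 0, 0], [0, 0, 1]]
```

After the loop every element of T lies in the span of B, so B generates ⟨T⟩ = M; for infinite T there is no such loop to run, which is why the proof needs Zorn’s lemma.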

Theorem (Uniqueness of Rank). If $M \cong D^{(I)}$ and $M \cong D^{(J)}$ as D-modules, then I and J have the same cardinality.

The gist of the proof is to replace elements of one basis with another, and show that there’s an injection I → J.

Proof.

Let $\{e_i : i \in I\}$ and $\{f_j : j \in J\}$ be bases of M, corresponding to the above isomorphisms.

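- Take the class Σ of all injections $\phi : S \to J$, for subsets $S \subseteq I$, such that $\{e_i : i \in S\} \cup \{f_j : j \in J - \phi(S)\}$ is a basis of M.

- Now Σ is not empty since it contains the empty map $\emptyset \to J$.

- Partially order Σ as follows: (φ: S → J) ≤ (φ’: S’ → J) if and only if $S \subseteq S'$ and $\phi'|_S = \phi$.

- Show that if $\{\phi_a : S_a \to J\}$ is a non-empty chain in Σ, then one can take the “union” $\phi : \bigcup_a S_a \to J$, where $\phi(i) = \phi_a(i)$ for any a such that $i \in S_a$, and that this φ is an upper bound of the chain in Σ.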

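Hence, Zorn’s lemma applies and there’s a maximal $\phi : S \to J$ in Σ. We claim S = I. If not, pick $k \in I - S$. Since

$$\{e_i : i \in S\} \cup \{f_j : j \in J - \phi(S)\}$$

is a basis of M, write:

$$e_k = \sum_{i \in S} a_i e_i + \sum_{j \in J - \phi(S)} b_j f_j$$

for some $a_i, b_j \in D$, only finitely many of which are non-zero.

Since the $e_i$’s are linearly independent and $k \notin S$, the second sum has a non-zero term, so pick any j’ for which $b_{j'} \neq 0$. We get:

$$f_{j'} = b_{j'}^{-1}\Big(e_k - \sum_{i \in S} a_i e_i - \sum_{j \in J - \phi(S),\ j \neq j'} b_j f_j\Big).$$

Hence, replacing $f_{j'}$ by $e_k$ still gives a basis of M: the new set generates $f_{j'}$ and hence all of M, and it is linearly independent because $b_{j'} \neq 0$. Since $j' \notin \phi(S)$, we can extend φ to an injection $S \cup \{k\} \to J$ by taking k to j’, which contradicts the maximality of φ. Conclusion: there’s an injection I → J, and by symmetry, there’s an injection J → I as well. By the Cantor–Bernstein–Schroeder theorem, I and J have the same cardinality. ♦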
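The exchange step can be run literally in finite dimensions. The sketch below, assuming two bases $e_0, \dots, e_{n-1}$ and $f_0, \dots, f_{n-1}$ of Q^n given as integer vectors, swaps each $e_k$ in for some unused $f_{j'}$. Rather than computing the coefficients $b_j$, it uses the equivalent test that replacing $f_{j'}$ by $e_k$ still yields n independent vectors, which holds exactly when $b_{j'} \neq 0$. `exchange_injection` is a hypothetical name, and the infinite case genuinely needs Zorn’s lemma.

```python
from fractions import Fraction
from typing import Dict, List, Sequence


def rank(vectors: Sequence[Sequence[int]]) -> int:
    """Rank over Q by Gaussian elimination with exact fractions."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    r = 0
    for c in range(len(rows[0]) if rows else 0):
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c] / rows[r][c]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
    return r


def exchange_injection(e: List[List[int]], f: List[List[int]]) -> Dict[int, int]:
    """Given bases e and f of Q^n, build an injection phi (as a dict k -> j) from indices
    of e to indices of f, swapping e_k in for some unused f_j while keeping a basis."""
    n = len(f)
    phi: Dict[int, int] = {}
    for k in range(len(e)):
        used = set(phi.values())
        for j in range(n):
            if j in used:
                continue
            # Current basis {e_i : i in S} ∪ {f_l : l unused}, with f_j replaced by e_k.
            candidate = ([e[i] for i in phi] + [e[k]]
                         + [f[l] for l in range(n) if l not in used and l != j])
            if rank(candidate) == n:  # equivalent to b_j != 0 in the expansion of e_k
                phi[k] = j
                break
        else:
            raise ValueError("no exchange possible; e is not linearly independent")
    return phi


e = [[0, 0, 1], [0, 1, 1], [1, 1, 1]]
f = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
print(exchange_injection(e, f))  # {0: 2, 1: 1, 2: 0}
```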

The dimension of a vector space over a division ring is thus defined to be the cardinality of any basis; by the theorem above, this is well-defined.