Polynomial Rings

A polynomial over a ring R is an expression of the form:

, where

, and

.

Let’s get some standard terminology out of the way. The element ai is called the coefficient of xi. The largest n for which an ≠ 0 is called the degree of f. [For the zero polynomial, it’s convenient to denote deg=-∞. ] If deg(f)=n, we call the coefficient of xn the leading coefficient of f(x). If the leading coefficient is 1, we say the polynomial is monic.

Important: we should think of f(x) as a symbolic expression and not a function, for now, because there’re some dangers lurking underneath the functional interpretation of f.

For starters, we have the following result:

For starters, we have the following result:

Theorem. The set of polynomials over R, denoted R[x], is a ring. The addition and product operations are the standard polynomial addition and product: let

and

; then

, where

;

, where

.

The unity 1 is just, well, 1 (i.e. 1 + 0x + 0x2 + … ). The proof is easy but a bit tedious, so we’re leaving it to the reader as an exercise. Note that R[x] is a commutative ring if and only if R is commutative.

The first danger of the day is that deg(fg) ≠ deg(f)deg(g) if R has zero-divisors. Indeed, if deg(f)=m and deg(g)=n, then the coefficient of xm+n is ambn which can be zero even if am and bn are not.

Proposition. If R has no non-zero zero-divisors, then deg(fg) = deg(f) + deg(g) for any polynomials f, g.

Our next definition is a natural extension from the case of real polynomials.

Definition. The derivative of f(x), denoted

or just f'(x), is given by:

Once again, the derivatives are formal derivatives, in the sense that we’re not thinking in terms of calculus and limits. Our derivative is merely a function which takes a polynomial and outputs another. The usual properties regarding derivatives of sums and products still hold.

;

;

Do take note of the order of multiplication in the product law since the ring may well be non-commutative. Once again, the proof is left to the reader, but we’ll leave a hint to make his/her job easier. For the product law, note that the maps

and

are both bilinear in f and g. In other words, if we fix f (resp. g), then the resulting map is linear in g (resp. f). This reduces to the case of monomials ,

.

Now, the next bombshell is: in general, even for commutative rings, in fact, even for fields. Note that if the leading coefficient of f is

, then its derivative is

, which can be zero even if an ≠ 0.

E.g. let R = Z/3 which is a finite field. Then the polynomial has derivative

since 3 = 3·1 = 0 in the ring R.

In general, we merely have .

Polynomials as Functions

Polynomials as Functions

Any student of elementary algebra knows that we can substitute values in x to evaluate a polynomial. This holds for too.

Problem 1: product is not preserved if R is non-commutative.

We prefer not to do it when R is not commutative, because if we substitute x=r, then it’s not true in general that (fg)(r) = f(r)g(r).

E.g. consider the quaternion ring H. Suppose f(x) = ix and g(x) = jx. Then fg = (ij)x2 = kx2. This gives:

f(i) = -1, g(i) = –k but (fg)(i) = –k ≠ f(i)g(i).

The problem occurs because x=r does not commute with the coefficients of f(x), so we won’t attempt to interpret f as a function when the coefficients don’t commute.

Problem 2 : a non-zero polynomial can be a zero function.

This is best given by the example of R = Z/3. Take the polynomial f(x) = x3–x. The only elements of R are {0, 1, 2} mod 3, and all these satisfy f(0) = f(1) = f(2) = 0. More generally, if p is a prime, then the polynomial f(x) = xp–x over the ring Z/p becomes the zero function by Fermat’s little theorem.

With those caveats out of the way, let’s state the main theorem of this section.

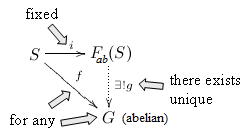

Theorem. Let R be a commutative ring. The evaluation map gives a ring homomorphism:

where Func(R, R) is the set of functions R → R with addition and product given by: for all

,

;

.

The unity 1 is the constant function which takes all r to 1.

We’ve already seen that the evaluation map is not injective in general. Clearly, it’s also far from surjective.

Remainder Theorem

Remainder Theorem

Throughout this section, let’s assume R is commutative.

Theorem. Let

, where g(x) is monic (recall: this means leading coeff = 1). Then we can write:

,

for some

such that deg(r) < deg(g) (this includes g=0, whose degree is -∞).

Furthermore, q(x) and r(x) are unique, and we them the quotient and remainder of f(x) ÷ g(x) respectively.

We’ll sketch the proof: which is almost identical to the case of secondary school algebra.

- Case 1: deg(f) < deg(g). The only possibility is q(x)=0, r(x)=f(x), because if q≠0, then deg(gq) = deg(g)+deg(q) ≥ deg(g) > deg(r) (important: the first equality holds because g is monic!) which gives deg(f) = deg(gq+r) = deg(gq). But deg(gq) ≥ deg(g) so this contradicts the case assumption.

- Case 2: deg(f) ≥ deg(g). Let

, where n = deg(f). Show that the leading coefficient of q(x) must be

, where m = deg(g). Then apply induction on deg(f).

In particular, suppose g(x) = x – c for some c in R. Then f(x) = q(x)(x – c) + r, where r is a constant. Substitute x=c to obtain f(c) = r, so we have:

Remainder Theorem. If

and

, then we have:

,

for some

. As a corollary, we get the Factor Theorem : if f(c) = 0, then f(x) = (x-c)q(x) for some polynomial q(x).

[ An element c of R is called a root of f(x) if f(c) = 0. ]

Here’s another way of looking at it. Fixing c, we get a surjective ring homomorphism R[x] → R which takes f(x) → f(c). Then the factor theorem says the kernel of this map is precisely <x–c>.

Example 1

Consider the ring R[x, y, z] of all polynomials with real coefficients in x, y, z. This is (canonically isomorphic to) the ring of polynomials R[z], where R = R[x, y]. Now let (a, b, c) be an ordered triplet of real numbers.

- The remainder theorem for R = R[x, y] gives us f(x, y, z) = (z–c)q(x, y, z) + r(x, y).

- Applying remainder theorem to R = R[x] gives us r(x, y) = (y–b)q2(x, y) + r2(x).

- Finally, applying it to R = R gives r2(x) = (x–a)q3(x) + r3.

In conclusion:

and upon substituting x=a, y=b, z=c, we get r3 = f(a, b, c). In short, the ring homomorphism R[x, y, z] → R which takes f(x, y, z) to a real number by evaluating it at (a, b, c) has ideal I = <x–a, y–b, z–c>. Since the quotient R[x, y, z]/I is isomorphic to R by the first isomorphism theorem, I is maximal.

Example 2

Suppose R is an integral domain and is of degree n. Denote the distinct roots of f by

. We shall prove that m ≤ n.

Indeed, since , by the Factor Theorem,

. Now for every other root,

, we have

. Since the ri‘s are distinct, ri–rm ≠ 0. Since R is an integral domain, this implies

are also roots of g(x). Since deg(g) = deg(f) – 1, apply induction.

If R has zero-divisors, things can go very wrong. E.g. in R × R, the innocuous looking quadratic equation x2=x has 4 solutions: (0, 0), (0, 1), (1, 0) and (1, 1).

Omake: Shamir’s Secret Sharing Scheme

Omake: Shamir’s Secret Sharing Scheme

Polynomials in abstract ring theory are a key ingredient in Shamir’s secret sharing protocol. The problem is as follows: you have a secret code (e.g. 5-digit number) to a safe containing some highly sensitive documents. You’d like to distribute the code among 10 scientists so that:

- any 3 scientists can cooperate and obtain the code, but

- if any 2 scientists cooperate, they get absolutely no information on the code.

The second factor is critical: not only do the 2 scientists not know the full code, but the amount of knowledge they have pertaining to the code is zilch! In short, cutting up the code into binary bits and distributing them is out of the question, since each scientists would then know something about the code (each bit cuts the number of possibilities by half).

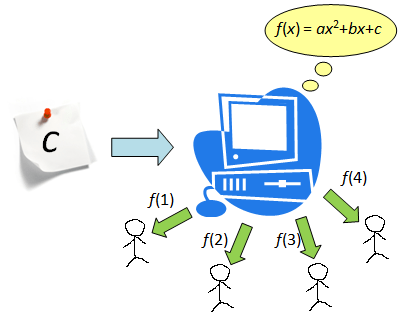

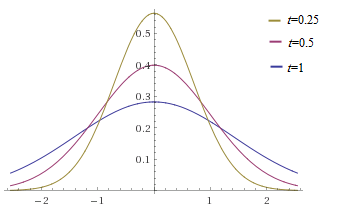

Shamir’s ingenious solution is as follows: pick a reasonably large prime p and take the finite field R = Z/p. Now:

- The computer randomly picks a polynomial

such that f(0) = c = secret code. The case of a=0 is not excluded.

- For i = 1, 2, …, 10, the computer then calculates f(i) and sends it to scientist #i.

- If any three scientists cooperate, they would have f(i), f(j), f(k) for three distinct positive integers i, j, k, and we know that every quadratic equation is uniquely determined by 3 points.

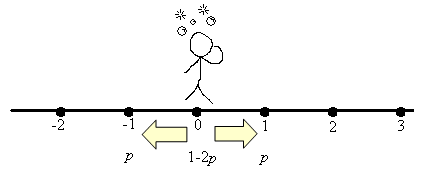

- If two scientists cooperate to get f(i) and f(j), the amount of information they have on the code is still zilch since for every value of f(0), there is a unique solution for f.

Step 3 can be performed by the Lagrange interpolation formula if one so desires: to interpolate (i, f(i)), (j, f(j)) and (k, f(k)), compute:

which is well-defined if i, j and k are distinct. Or we can solve simultaneous equations: e.g. to solve for f(1) = 2, f(2) = 3, f(3) = 9 for mod p=11, we have:

so the secret code is 6.

In the general case, say, you wish to design a secret sharing system whereby any (n+1) scientists can collaborate to obtain the code, and any n scientists cannot figure out anything about the code. To that end, a polynomial of degree n is required. Once again, Lagrange’s interpolation formula allows us to recover the entire polynomial given (n+1) distinct points, and hence the secret code.