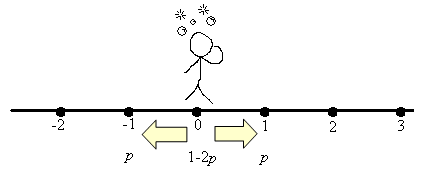

Consider discrete points on the real line, indexed by the integers … -3, -2, -1, 0, 1, 2, … . A drunken man starts at position 0 and time 0. At each time step, he may move to the left or right (each with probability p<1/2), or stay still (with probability 1-2p).

This goes on and on, and we wish to find the probability distribution of his location at time t, i.e. for each m what’s the probability u(m, t) that he’s found at position m during time t? Clearly, for each t, since the man must be found somewhere.

To solve it exactly, one can use generating functions. Indeed, the recurrence relation in time gives:

(*)

for each integer m, non-negative integer t. So if we consider the power series

then the above recurrence relation in u(m, t) can be expressed succinctly as a recurrence relation in Pt(x):

with . This expression has certain advantages in computation:

Example 1

Let x=1: this gives . But we already know this since Pt(1) represents the sum of all u(m, t) across m, i.e. it equals 1.

Example 2

Differentiate to obtain:

Substitute x=1 to obtain . Since

, we also have

for all t. Again, we already know this since

represents the expected position of our friend, which is always 0 since he’s just as inclined to go left as he is to go right. In probability notation, we have

, where Xt is the random variable for our friend’s location at time t.

Example 3

Differentiate yet again to obtain:

Substitute x=1 to obtain . Since

we have

. But

so from we can calculate the variance via

Example 4

Suppose the drunken man’s house is at x=5. What’s the value of at time t?

Answer : let’s expand

.

Conclusion

As the reader can guess, successive differentiation gives us the higher moments for various n. In fact, since

we can even consider relations across wider time steps.

Notice that we didn’t have to start with a fixed starting position for the drunken man. In particular, we could have any sequence of non-negative real u(m, 0), as long as the sum is 1. However, care would have to be taken to ensure that the higher moments are well-defined. E.g. if the probability distribution starts with

for m>0 (u(m, 0) = 0 if m ≤ 0),

then the expected value is undefined since which diverges since it is the renowned harmonic series. Roughly speaking, as m → ±∞, u(m, 0) should approach 0 exponentially fast in order for the higher moments to make sense.

Higher-Dimensional Case

Let’s assume now that our drunken friend can walk in a two-dimensional plane. So the probability of him at location (m, n) at time t is denoted u(m, n, t). At each time step, our friend can walk a unit distance in any of the four standard directions: north, south, east or west, each with probability p. The probability that he remains still for one time step is 1-4p.

The recurrence relation in t is easy to write:

u(m, n, t+1) = p(u(m-1, n, t) + u(m+1, n, t) + u(m, n-1, t) + u(m, n+1, t)) + (1-4p)u(m, n, t).

Proceeding as before, let’s define a two-parameter power series:

The above recurrence relation then translates to:

Let the random variable denoting his location at time t be (Xt, Yt). In order to extract useful information, we’ll need to do partial differentiation. For convenience, we’ll denote .

The second derivatives are:

Substituting x=1, y=1 gives the following useful values:

In the next article, we’ll consider the limiting case, where the space and time intervals approach zero, and look at the corresponding differential equations.