Following the earlier article on tensor products of vector spaces, we now look at tensor products of modules over a ring R, not necessarily commutative. It turns out that we now have to distinguish between left and right modules. Indeed, recall the isomorphism from earlier: $\mathrm{Hom}_K(V, W) \cong V^* \otimes_K W$ for (finite-dimensional) vector spaces V and W over a field K. If we replace V and W by left R-modules, then V* = Hom_R(V, R) becomes a right R-module, which hints that we should consider a tensor product between a right module and a left one.

Definition. Let M be a right R-module, N a left R-module and X an abelian group. A bilinear map of modules is a map

$$B : M \times N \to X$$

such that

- when we fix n∈N, B(-, n): M→X is additive;

- when we fix m∈M, B(m, -): N→X is additive;

- B(mr, n) = B(m, rn) for any r∈R, m∈M, n∈N.

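The tensor product of M and N, denoted $M \otimes_R N$, is an abelian group together with a bilinear map

$$\psi : M \times N \to M \otimes_R N$$

such that the following universal property holds:

- for any bilinear map $B : M \times N \to X$ there is a unique additive map $f : M \otimes_R N \to X$ such that $B = f \circ \psi$.

As before, the element $m \otimes n := \psi(m, n)$ for any m∈M, n∈N is called a pure tensor.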

The universal property again guarantees that the tensor product is unique if it exists.

Proof of Existence

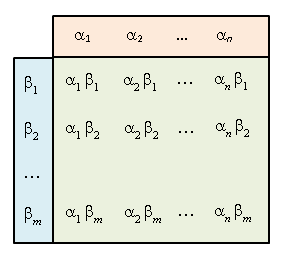

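The proof is identical to the earlier one. Let T be the free abelian group with basis $\{e_{m,n} : (m, n) \in M \times N\}$. Take the subgroup U generated by elements of the form

$$e_{m+m',\,n} - e_{m,n} - e_{m',n}, \qquad e_{m,\,n+n'} - e_{m,n} - e_{m,n'}, \qquad e_{mr,\,n} - e_{m,\,rn}$$

for m, m'∈M, n, n'∈N and r∈R. Now our desired group is T/U, and the bilinear map $M \times N \to T/U$ is given by

$$(m, n) \mapsto e_{m,n} + U,$$

so m⊗n is the image of $e_{m,n}$ in T/U. ♦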
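To see the defining relations at work, here is a small worked example that is not taken from the text above. Assume R = ℤ, M = ℤ/2ℤ and N = ℤ/3ℤ. Using only additivity in each variable, every pure tensor vanishes:

$$m \otimes n = 3(m \otimes n) - 2(m \otimes n) = m \otimes 3n - 2m \otimes n = m \otimes 0 - 0 \otimes n = 0.$$

Since the pure tensors generate the tensor product, it follows that $\mathbb{Z}/2\mathbb{Z} \otimes_{\mathbb{Z}} \mathbb{Z}/3\mathbb{Z} = 0$, even though neither factor is zero.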

Note

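A bilinear map M × N → X corresponds to a homomorphism of right R-modules $M \to \mathrm{Hom}_{\mathbb{Z}}(N, X)$, taking m to B(m, -). [ Recall that since N is a left R-module, $\mathrm{Hom}_{\mathbb{Z}}(N, X)$ becomes a right R-module via (φ·r)(n) = φ(rn). ] Hence, the above universal property can also be written as:

$$\mathrm{Hom}_{\mathbb{Z}}(M \otimes_R N, X) \cong \mathrm{Hom}_R(M, \mathrm{Hom}_{\mathbb{Z}}(N, X)).$$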

Often, we would like the tensor product to be a module instead of merely an abelian group:

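Proposition. Let R, S be rings. If M is an (R, S)-bimodule and N is a left S-module, then $M \otimes_S N$ is a left R-module satisfying

$$r(m \otimes n) = (rm) \otimes n.$$

The bilinear map $M \times N \to M \otimes_S N$, (m, n) ↦ m⊗n, is also R-linear in M, i.e.

$$(rm, n) \mapsto r(m \otimes n) \quad \text{for all } r \in R,\ m \in M,\ n \in N.$$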

Proof

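By definition $M \otimes_S N$ is an abelian group. For each r∈R, consider the map

$$f_r : M \times N \to M \otimes_S N, \qquad (m, n) \mapsto (rm) \otimes n.$$

The map is S-bilinear (e.g. f_r(ms, n) = (r(ms))⊗n = ((rm)s)⊗n = (rm)⊗(sn) = f_r(m, sn)). So it induces an additive map $M \otimes_S N \to M \otimes_S N$ taking

$$m \otimes n \mapsto (rm) \otimes n.$$

One now checks that this turns $M \otimes_S N$ into a left R-module such that the map (m, n) ↦ m⊗n is R-linear in M. ♦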

Properties.

- For any left R-module M, $R \otimes_R M \cong M$ as left R-modules.

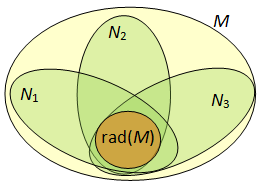

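- For any right R-module M, (R, S)-bimodule N, and left S-module P, we have

$$(M \otimes_R N) \otimes_S P \cong M \otimes_R (N \otimes_S P).$$

- For any right R-module M, and left R-modules $N_i$ (i∈I), we have

$$M \otimes_R \Big(\bigoplus_{i \in I} N_i\Big) \cong \bigoplus_{i \in I} (M \otimes_R N_i).$$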

Sketch of Proof.

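We’ll briefly sketch the proof of the second claim. Fixing m∈M, the map

$$N \times P \to (M \otimes_R N) \otimes_S P, \qquad (n, p) \mapsto (m \otimes n) \otimes p$$

is bilinear over S, so it induces a group homomorphism

$$g_m : N \otimes_S P \to (M \otimes_R N) \otimes_S P, \qquad n \otimes p \mapsto (m \otimes n) \otimes p.$$

One checks that $g_m$ is additive in m and satisfies $g_{mr}(x) = g_m(rx)$ for all r∈R, so the map (m, x) ↦ $g_m(x)$ is bilinear over R and we obtain a group homomorphism

$$M \otimes_R (N \otimes_S P) \to (M \otimes_R N) \otimes_S P$$

taking m⊗(n⊗p) to (m⊗n)⊗p. The inverse, taking (m⊗n)⊗p to m⊗(n⊗p), is constructed in the same way by fixing p∈P. ♦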
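The first claim can be sketched in the same spirit, using only the definitions above. The names f and g below are chosen for this illustration. The map R × M → M, (r, m) ↦ rm, is additive in each variable and satisfies (rs)m = r(sm), so it is bilinear and induces an additive map

$$f : R \otimes_R M \to M, \qquad r \otimes m \mapsto rm.$$

Define $g : M \to R \otimes_R M$ by $g(m) = 1 \otimes m$. Then

$$f(g(m)) = 1 \cdot m = m, \qquad g(f(r \otimes m)) = 1 \otimes rm = r \otimes m,$$

so f and g are mutually inverse, and f is left R-linear because $f(r'(r \otimes m)) = r'rm = r' f(r \otimes m)$.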

Base Extension

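Suppose S is an R-algebra and M a left R-module. Since S is an (S, R)-bimodule, the tensor product $M^S := S \otimes_R M$ is now a left S-module. This is called the base extension of M. Since, for any S-algebra T,

$$T \otimes_S (S \otimes_R M) \cong (T \otimes_S S) \otimes_R M \cong T \otimes_R M,$$

we have:

Proposition. Base extension is transitive, i.e. if T is an S-algebra, then

$$(M^S)^T \cong M^T$$

as T-modules.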

Base extension satisfies the following universal property.

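Universal Property of Base Extension. The canonical map

$$\varphi : M \to M^S = S \otimes_R M$$

taking $m \mapsto 1 \otimes m$ is R-linear.

For any left S-module N and R-module homomorphism $g : M \to N$, there is a unique S-module homomorphism $f : M^S \to N$ such that

$$f \circ \varphi = g.$$

Thus for any S-module N:

$$\mathrm{Hom}_S(M^S, N) \cong \mathrm{Hom}_R(M, N).$$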

Proof

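The first statement is obvious. For the second, let us prove existence of f. Given an R-module homomorphism g: M → N, the map

$$S \times M \to N, \qquad (s, m) \mapsto s \cdot g(m)$$

is R-bilinear, so it induces an additive map

$$f : S \otimes_R M \to N$$

satisfying

$$f(s \otimes m) = s \cdot g(m).$$

This is S-linear since

$$f(s'(s \otimes m)) = f(s's \otimes m) = s's \cdot g(m) = s' \cdot f(s \otimes m),$$

and it satisfies f(1⊗m) = g(m). Uniqueness follows from the fact that the elements 1⊗m, for m∈M, generate $M^S = S \otimes_R M$ as an S-module. ♦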

Example

Suppose R = ℝ is the real field, S = ℂ, and V is the space of polynomials with real coefficients and degree ≤ 2. Then $V^{\mathbb{C}} = \mathbb{C} \otimes_{\mathbb{R}} V$ is naturally identified with the space of polynomials with complex coefficients and degree ≤ 2.
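The identification in this example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: an element of ℂ⊗V is stored as a list of pure tensors (z, p), a polynomial a0 + a1·x + a2·x² is stored as the tuple (a0, a1, a2), and the helper name `identify` is hypothetical.

```python
# Sketch: elements of C ⊗_R V, where V = real polynomials of degree <= 2,
# are stored as finite sums of pure tensors z ⊗ p.
# A polynomial a0 + a1*x + a2*x^2 is stored as the tuple (a0, a1, a2).

def identify(tensor):
    """Send sum_k z_k ⊗ p_k to the complex polynomial sum_k z_k * p_k."""
    coeffs = [0j, 0j, 0j]
    for z, p in tensor:
        for i, a in enumerate(p):
            coeffs[i] += z * a
    return tuple(coeffs)

# 1 ⊗ (1 + x^2) + i ⊗ x corresponds to the polynomial 1 + i*x + x^2.
t = [(1, (1.0, 0.0, 1.0)), (1j, (0.0, 1.0, 0.0))]
assert identify(t) == (1, 1j, 1)

# The relation (z*r) ⊗ p = z ⊗ (r*p) for real r is respected by the map:
lhs = identify([(1j * 2.0, (1.0, 3.0, 0.0))])   # (2i) ⊗ (1 + 3x)
rhs = identify([(1j, (2.0, 6.0, 0.0))])         # i ⊗ (2 + 6x)
assert lhs == rhs
```

The second check is the point of the sketch: two different-looking sums of pure tensors that are equal in the tensor product are sent to the same complex polynomial.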

For Group Representations

Tensor products are useful in group representations in two different ways. First, suppose V and W are K[G]-modules for a field K and finite group G. Then $V \otimes_K W$ (note: the tensor product is over K, not the group ring!) becomes a K[G]-module as well. Indeed, each g∈G induces isomorphisms V→V and W→W and thus a bilinear map V×W→V⊗W taking (v, w) to gv⊗gw. This in turn gives us a linear map V⊗W → V⊗W taking v⊗w to gv⊗gw. [ It is an isomorphism since g⁻¹ induces the inverse. ]
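In coordinates, the map v⊗w ↦ gv⊗gw is the Kronecker product of the two matrices of g. The following is a minimal sketch, assuming matrices are stored as lists of rows. The helpers `matmul` and `kron` and the choice of group (S₃ acting by permutation matrices and by the sign character) are illustrative assumptions, not taken from the text.

```python
# Sketch: the action of g on V ⊗ W is the Kronecker product of the
# matrices of g on V and on W (matrices stored as lists of rows).

def matmul(A, B):
    """Ordinary matrix product."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def kron(A, B):
    """Matrix of v ⊗ w -> Av ⊗ Bw in the basis e_i ⊗ f_j."""
    return [[a * b for a in row_a for b in row_b]
            for row_a in A for row_b in B]

# Two elements of S_3: a transposition s and a 3-cycle c.
# V = permutation representation, W = sign representation.
Ps = [[0, 1, 0], [1, 0, 0], [0, 0, 1]]
Pc = [[0, 0, 1], [1, 0, 0], [0, 1, 0]]
Ss, Sc = [[-1]], [[1]]

# Acting by s and then by c on V ⊗ W agrees with acting by the product sc,
# so g -> kron(rho_V(g), rho_W(g)) respects multiplication.
assert matmul(kron(Ps, Ss), kron(Pc, Sc)) == kron(matmul(Ps, Pc), matmul(Ss, Sc))
```

The assertion is an instance of the identity (A⊗B)(C⊗D) = AC⊗BD, which is what makes the tensor product of two representations a representation.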

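Another use of the tensor product is via the induced representation. If H ⊆ G is a subgroup and V is a K[H]-module, then one can define

$$\mathrm{Ind}_H^G V := K[G] \otimes_{K[H]} V$$

to obtain a representation of G. The universal property of base extension gives us: for any K[G]-module W,

$$\mathrm{Hom}_{K[G]}(\mathrm{Ind}_H^G V, W) \cong \mathrm{Hom}_{K[H]}(V, \mathrm{Res}^G_H W),$$

where $\mathrm{Res}^G_H W$ denotes W regarded as a K[H]-module. This is the Frobenius reciprocity theorem in representation theory and is often written as:

$$\langle \mathrm{Ind}_H^G V, W \rangle_G = \langle V, \mathrm{Res}^G_H W \rangle_H.$$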

Tensor Product is Right Exact

We have the following

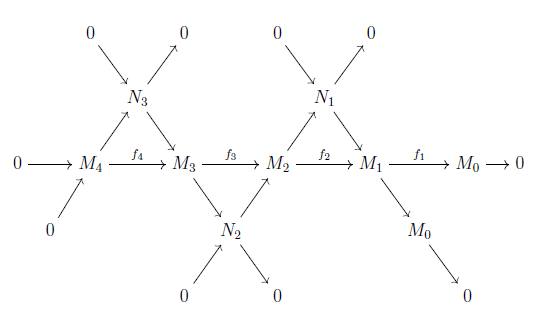

Theorem. If

$$N_1 \to N_2 \to N_3 \to 0$$

is an exact sequence of left R-modules, then for any right R-module M, we get an exact sequence of abelian groups:

$$M \otimes_R N_1 \to M \otimes_R N_2 \to M \otimes_R N_3 \to 0.$$

Proof

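We use the property from above:

$$\mathrm{Hom}_{\mathbb{Z}}(M \otimes_R N, X) \cong \mathrm{Hom}_R(M, \mathrm{Hom}_{\mathbb{Z}}(N, X)).$$

Now, for any abelian group X, since $N_1 \to N_2 \to N_3 \to 0$ is exact by assumption, the following sequence of right R-modules is exact since Hom is left-exact:

$$0 \to \mathrm{Hom}_{\mathbb{Z}}(N_3, X) \to \mathrm{Hom}_{\mathbb{Z}}(N_2, X) \to \mathrm{Hom}_{\mathbb{Z}}(N_1, X).$$

Again since Hom is left-exact, we have the exact sequence:

$$0 \to \mathrm{Hom}_R(M, \mathrm{Hom}_{\mathbb{Z}}(N_3, X)) \to \mathrm{Hom}_R(M, \mathrm{Hom}_{\mathbb{Z}}(N_2, X)) \to \mathrm{Hom}_R(M, \mathrm{Hom}_{\mathbb{Z}}(N_1, X)),$$

which is precisely the same as saying:

$$0 \to \mathrm{Hom}_{\mathbb{Z}}(M \otimes_R N_3, X) \to \mathrm{Hom}_{\mathbb{Z}}(M \otimes_R N_2, X) \to \mathrm{Hom}_{\mathbb{Z}}(M \otimes_R N_1, X)$$

is exact for all X. A sequence A → B → C → 0 of abelian groups is exact whenever applying Hom(-, X) gives a left-exact sequence for every abelian group X. Thus, $M \otimes_R N_1 \to M \otimes_R N_2 \to M \otimes_R N_3 \to 0$ is exact. ♦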

Example

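Let I be a two-sided ideal of R, so R/I becomes an R-algebra via the canonical map R→R/I. We claim that for any left R-module M, base extension gives

$$(R/I) \otimes_R M \cong M/IM.$$

Indeed, consider the map $(R/I) \times M \to M/IM$ taking (r+I, m) to rm+IM. This map is well-defined on R/I and R-bilinear so it induces a map

$$(R/I) \otimes_R M \to M/IM.$$

Conversely, take the map

$$M \to (R/I) \otimes_R M$$

where

$$m \mapsto (1 + I) \otimes m.$$

Everything in IM maps to 0, since (1+I)⊗am = (a+I)⊗m = 0 for a∈I, so this factors through

$$M/IM \to (R/I) \otimes_R M.$$

It’s easy to check that the two maps are mutually inverse.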
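As a quick numerical sanity check of this example, assume R = ℤ, I = nℤ and M = ℤ/mℤ (a special case chosen here for illustration). The example then says that (ℤ/nℤ)⊗M ≅ M/nM, and the sketch below counts the elements of M/nM. The function name `quotient_order` is hypothetical.

```python
from math import gcd

def quotient_order(m, n):
    """Order of M/IM for R = Z, I = nZ, M = Z/mZ."""
    IM = {(n * x) % m for x in range(m)}   # the subgroup n·(Z/mZ) of Z/mZ
    return m // len(IM)                    # |M/IM| = |M| / |IM|

# In these cases the order of M/IM is gcd(m, n).
for m, n in [(4, 6), (5, 3), (12, 18)]:
    assert quotient_order(m, n) == gcd(m, n)
```

In particular, when m and n are coprime the quotient is trivial, so the tensor product of ℤ/nℤ and ℤ/mℤ over ℤ vanishes.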

Note

From our example above, it is easy to find examples where the tensor product is not left-exact. For example, consider the exact sequence 0 → 2ℤ → ℤ. Tensoring with ℤ/2ℤ is the same as taking M to M/2M, so we obtain 0 → 2ℤ/4ℤ → ℤ/2ℤ. This is not exact: 2ℤ/4ℤ is nonzero, yet the map 2ℤ/4ℤ → ℤ/2ℤ takes everything to 0.
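The failure of injectivity can be checked by brute force. In this small sketch the cosets of 2ℤ/4ℤ are represented by the integers 0 and 2, and the cosets of ℤ/2ℤ by 0 and 1. These representatives and the name `induced` are assumptions made for the illustration.

```python
# Elements of 2Z/4Z are represented by 0 and 2; elements of Z/2Z by 0 and 1.
source = [0, 2]

def induced(x):
    """Map 2Z/4Z -> Z/2Z induced by the inclusion 2Z -> Z."""
    return x % 2

image = {induced(x) for x in source}

# Both elements of the source land on 0, so the induced map is not injective.
assert image == {0}
assert len(source) == 2
```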