Tensor products can be rather intimidating for first-timers, so we’ll start with the simplest case: that of vector spaces over a field K. Suppose V and W are finite-dimensional vector spaces over K, with bases and

respectively. Then the tensor product

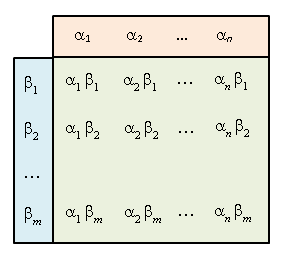

is the vector space with abstract basis

In particular, it is of dimension mn over K. Now we can “multiply” elements of V and W to obtain an element of this new space, e.g.

For example, if V is the space of polynomials in x of degree ≤ 2 and W is the space of polynomials in y of degree ≤ 3, then $V \otimes W$ is the space of polynomials spanned by the monomials $x^i y^j$ (identifying $x^i \otimes y^j$ with $x^i y^j$), where $0 \le i \le 2$, $0 \le j \le 3$. However, defining the tensor product with respect to a chosen basis is rather unwieldy: we’d like a definition that depends only on V and W, and not on the bases we picked.
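To make the polynomial example concrete, here is a small Python sketch. It works in coordinates with respect to the bases $1, x, x^2$ and $1, y, y^2, y^3$. The helper name `tensor_coefficients` is purely illustrative. All it does is apply the bilinear expansion coefficient by coefficient.

```python
def tensor_coefficients(a, b):
    """Coordinates of (sum_i a_i v_i) ⊗ (sum_j b_j w_j) in the basis {v_i ⊗ w_j}.

    By bilinearity the coefficient of v_i ⊗ w_j is a_i * b_j."""
    return [[ai * bj for bj in b] for ai in a]

# Assumption: V = polynomials in x of degree <= 2, basis 1, x, x^2       (m = 3)
#             W = polynomials in y of degree <= 3, basis 1, y, y^2, y^3  (n = 4)
p = [1, 2, 0]       # p(x) = 1 + 2x
q = [0, 3, 0, -1]   # q(y) = 3y - y^3

c = tensor_coefficients(p, q)

# Under x^i ⊗ y^j <-> x^i y^j, c[i][j] is the coefficient of x^i y^j in p(x) q(y).
terms = {(i, j): c[i][j] for i in range(3) for j in range(4) if c[i][j] != 0}
print(terms)  # {(0, 1): 3, (0, 3): -1, (1, 1): 6, (1, 3): -2}

# Sanity check: evaluating at a point agrees with p(x0) * q(y0).
x0, y0 = 2, -1
value = sum(c[i][j] * x0**i * y0**j for i in range(3) for j in range(4))
assert value == sum(p[i] * x0**i for i in range(3)) * sum(q[j] * y0**j for j in range(4))
```

The full coefficient array has 3 × 4 = 12 entries, matching the dimension mn.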

Definition. A bilinear map of vector spaces is a map

$$B : V \times W \to X,$$

where V, W, X are vector spaces, such that

- when we fix w, B(-, w): V→X is linear;

- when we fix v, B(v, -): W→X is linear.

The tensor product of V and W, denoted $V \otimes W$, is defined to be a vector space together with a bilinear map

$$\psi : V \times W \to V \otimes W$$

such that the following universal property holds:

- for any bilinear map $B : V \times W \to X$, there is a unique linear map $f : V \otimes W \to X$ such that $B = f \circ \psi$.

For v∈V and w∈W, the element $v \otimes w := \psi(v, w)$ is called a pure tensor.
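In coordinates the universal property becomes very concrete. The sketch below makes four assumptions: V = K^m, W = K^n and X = K, with integers standing in for K; V⊗W is modelled as K^{mn}; and ψ is the Kronecker product of coordinate vectors. It builds the induced linear map f from the values B(e_i, e_j) on pairs of basis vectors. All function names are hypothetical.

```python
def kron(a, b):
    """Model of psi(a, b): coordinates of a ⊗ b, entry (i, j) at index i*len(b) + j."""
    return [ai * bj for ai in a for bj in b]

def bilinear_from_matrix(M):
    """A bilinear map B(a, b) = sum_{i,j} a_i M[i][j] b_j on K^m x K^n."""
    def B(a, b):
        return sum(a[i] * M[i][j] * b[j] for i in range(len(a)) for j in range(len(b)))
    return B

def induced_linear_map(B, m, n):
    """The linear map f on K^(mn) with f(psi(v, w)) = B(v, w).

    f is pinned down by its values B(e_i, e_j) on the basis vectors e_i ⊗ e_j."""
    def e(k, size):
        return [1 if t == k else 0 for t in range(size)]
    values = [B(e(i, m), e(j, n)) for i in range(m) for j in range(n)]
    def f(t):
        return sum(tk * vk for tk, vk in zip(t, values))
    return f

# Assumption: m = 2, n = 3, and an arbitrary bilinear B given by a 2x3 matrix.
M = [[1, 2, 0], [0, -1, 3]]
B = bilinear_from_matrix(M)
f = induced_linear_map(B, 2, 3)
a, b = [2, 5], [1, -1, 4]
print(B(a, b), f(kron(a, b)))  # 63 63
```

The point is that f is determined by finitely many numbers, one per basis element $e_i \otimes e_j$, which is exactly the uniqueness part of the universal property.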

The universal property guarantees that if the tensor product exists, then it is unique up to isomorphism. What remains is the

Proof of Existence.

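Recall that if S is a basis of a vector space V, then linear maps V→W correspond uniquely to functions S→W. Thus if we let T be the (typically infinite-dimensional) vector space with basis

$$\{e_{(v, w)} : v \in V,\ w \in W\},$$

one basis vector for each pair (v, w) ∈ V × W, then linear maps g : T→X correspond uniquely to functions B : V×W → X via $g(e_{(v,w)}) = B(v, w)$. Saying that B is bilinear is precisely the same as saying that g vanishes on the subspace U, so that g factors through the quotient to give $\bar g : T/U \to X$, where U is the subspace generated by elements of the form:

$$e_{(v+v', w)} - e_{(v, w)} - e_{(v', w)}, \quad e_{(v, w+w')} - e_{(v, w)} - e_{(v, w')}, \quad e_{(cv, w)} - c\, e_{(v, w)}, \quad e_{(v, cw)} - c\, e_{(v, w)}$$

for all v, v’ ∈ V, w, w’ ∈ W and constant c ∈ K. Hence T/U is precisely our desired vector space, with $\psi : V \times W \to T/U$ given by

$$\psi(v, w) = e_{(v, w)} + U.$$

And v⊗w is the image of $e_{(v, w)}$ in T/U. ♦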

Note

From the proof, it is clear that V ⊗ W is spanned by the pure tensors; in general though, not every element of V ⊗ W is a pure tensor. E.g. v⊗w + v’⊗w’ is generally not a pure tensor. However, v⊗w + v⊗w’ + v’⊗w + v’⊗w’ = (v+v’)⊗(w+w’) is a pure tensor since ψ is bilinear.
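Once bases are fixed, you can test whether an element $\sum c_{ij}\, v_i \otimes w_j$ is a pure tensor by looking at its coefficient matrix $(c_{ij})$. A pure tensor $a \otimes b$ has $c_{ij} = a_i b_j$, so its coefficient matrix has rank at most 1. Conversely, over a field, every matrix of rank at most 1 has this form. The following Python sketch checks the rank condition via 2×2 minors. The function name is an illustrative choice.

```python
def is_pure_tensor(c):
    """Decide whether sum_{i,j} c[i][j] v_i ⊗ w_j is a pure tensor a ⊗ b.

    A pure tensor has coefficients c[i][j] = a_i * b_j, i.e. the coefficient
    matrix has rank <= 1; over a field this holds exactly when every 2x2
    minor c[i][j]*c[k][l] - c[i][l]*c[k][j] vanishes."""
    rows, cols = len(c), len(c[0])
    for i in range(rows):
        for k in range(i + 1, rows):
            for j in range(cols):
                for l in range(j + 1, cols):
                    if c[i][j] * c[k][l] - c[i][l] * c[k][j] != 0:
                        return False
    return True

# v1⊗w1 + v2⊗w2: the coefficient matrix is the identity, of rank 2
print(is_pure_tensor([[1, 0], [0, 1]]))  # False
# v1⊗w1 + v1⊗w2 + v2⊗w1 + v2⊗w2 = (v1 + v2)⊗(w1 + w2)
print(is_pure_tensor([[1, 1], [1, 1]]))  # True
```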

Properties of Tensor Product

We have:

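Proposition. The following hold for K-vector spaces:

- $K \otimes V \cong V$, where $c \otimes v \mapsto cv$;

- $V \otimes W \cong W \otimes V$, where $v \otimes w \mapsto w \otimes v$;

- $V \otimes (W \otimes W') \cong (V \otimes W) \otimes W'$, where $v \otimes (w \otimes w') \mapsto (v \otimes w) \otimes w'$;

- $V \otimes (W \oplus W') \cong (V \otimes W) \oplus (V \otimes W')$, where $v \otimes (w, w') \mapsto (v \otimes w, v \otimes w')$.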

Proof

For the first property, the map K × V → V taking (c, v) to cv is bilinear over K, so by the universal property of tensor products it induces a linear map f : K ⊗ V → V taking c⊗v to cv. In the other direction, take the linear map g : V → K ⊗ V mapping v to 1⊗v. It remains to prove that gf and fg are identity maps. Indeed, fg takes v ↦ 1⊗v ↦ v, and gf takes c⊗v ↦ cv ↦ 1⊗cv = c⊗v, where the last equality follows from the bilinearity of ⊗. Since the pure tensors c⊗v span K ⊗ V, this shows that gf is the identity.

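For the third property, fix v∈V. The map W×W’ → (V⊗W)⊗W’ taking (w, w’) to (v⊗w)⊗w’ is bilinear in W and W’, so it induces a linear map

$$f_v : W \otimes W' \to (V \otimes W) \otimes W'$$

taking

$$w \otimes w' \mapsto (v \otimes w) \otimes w'.$$

Next we check that the map

$$V \times (W \otimes W') \to (V \otimes W) \otimes W', \quad (v, t) \mapsto f_v(t)$$

is bilinear, so it induces a linear map $f : V \otimes (W \otimes W') \to (V \otimes W) \otimes W'$ taking

$$v \otimes (w \otimes w') \mapsto (v \otimes w) \otimes w'.$$

Similarly one defines a reverse map

$$g : (V \otimes W) \otimes W' \to V \otimes (W \otimes W')$$

taking

$$(v \otimes w) \otimes w' \mapsto v \otimes (w \otimes w').$$

Since the pure tensors generate the whole space, it follows that f and g are mutually inverse.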

The second and fourth properties are left to the reader. ♦
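This sketch works in coordinates: V = K^m and so on, with pure tensors modelled by Kronecker products of coordinate vectors (an assumption of the sketch). In that model, the second and third properties become statements about reindexing. Associativity holds exactly when coordinates are ordered lexicographically, and the swap map only permutes coordinates. A minimal check in Python:

```python
def kron(a, b):
    """Coordinates of a ⊗ b, entry (i, j) at index i*len(b) + j (lexicographic order)."""
    return [ai * bj for ai in a for bj in b]

# Assumption: arbitrary coordinate vectors in K^2, K^3, K^2.
a, b, c = [1, 2], [3, -1, 0], [5, 7]

# Third property: (a ⊗ b) ⊗ c and a ⊗ (b ⊗ c) have identical coordinates;
# both put a_i b_j c_k at index i*len(b)*len(c) + j*len(c) + k.
assoc = kron(kron(a, b), c) == kron(a, kron(b, c))

# Second property: a ⊗ b and b ⊗ a have the same entries, permuted:
# entry (i, j) of a ⊗ b sits at position j*len(a) + i of b ⊗ a.
m, n = len(a), len(b)
ab, ba = kron(a, b), kron(b, a)
swap = all(ab[i * n + j] == ba[j * m + i] for i in range(m) for j in range(n))
print(assoc, swap)  # True True
```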

As a result of the second and fourth properties, we also have:

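Corollary. For any collections $\{V_i\}_{i \in I}$ and $\{W_j\}_{j \in J}$ of vector spaces, we have:

$$\Big(\bigoplus_{i \in I} V_i\Big) \otimes \Big(\bigoplus_{j \in J} W_j\Big) \cong \bigoplus_{(i, j) \in I \times J} (V_i \otimes W_j),$$

where the LHS element $(v_i)_i \otimes (w_j)_j$ maps to $(v_i \otimes w_j)_{i, j}$ on the RHS.

In particular, if $\{v_i\}_{i \in I}$ and $\{w_j\}_{j \in J}$ are bases of V and W respectively, then

$$V \otimes W \cong \Big(\bigoplus_{i} K v_i\Big) \otimes \Big(\bigoplus_{j} K w_j\Big) \cong \bigoplus_{i, j} K\,(v_i \otimes w_j)$$

(using $K v_i \otimes K w_j \cong K \otimes K \cong K$ from the first property), so $\{v_i \otimes w_j\}$ forms a basis of V⊗W. This recovers our original intuitive definition of the tensor product!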

Tensor Product and Duals

Recall that the dual of a vector space V is the space V* of all linear maps V→K. It is easy to see that V* ⊕ W* is naturally isomorphic to (V ⊕ W)* and when V is finite-dimensional, V** is naturally isomorphic to V.

[ One way to visualize V** ≅ V is to imagine the bilinear map V* × V → K taking (f, v) to f(v). Fixing f, we obtain a linear map V→K, as expected; fixing v, we obtain a linear map V*→K, i.e. an element of V**. ]

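If V is finite-dimensional, then a basis $\{v_1, \ldots, v_n\}$ of V gives rise to a dual basis $\{v_1^*, \ldots, v_n^*\}$ of V* where

$$v_i^*(v_j) = \begin{cases} 1, & i = j, \\ 0, & i \ne j, \end{cases}$$

or simply $v_i^*(v_j) = \delta_{ij}$ with the Kronecker delta symbol. The next result we would like to show is: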

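Proposition. Let V and W be finite-dimensional over K.

- We have $V^* \otimes W^* \cong (V \otimes W)^*$, taking f⊗g to the map

$$V \otimes W \to K, \quad v \otimes w \mapsto f(v)\, g(w).$$

- Also $V^* \otimes W \cong \mathrm{Hom}_K(V, W)$, taking f⊗w to the map

$$V \to W, \quad v \mapsto f(v)\, w.$$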

Proof

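For the first case, fix f∈V*, g∈W*. The map

$$V \times W \to K, \quad (v, w) \mapsto f(v)\, g(w)$$

is bilinear, so it induces a linear map

$$h : V \otimes W \to K$$

taking $v \otimes w \mapsto f(v)\, g(w)$. But the assignment (f, g) ↦ h gives rise to a map

$$V^* \times W^* \to (V \otimes W)^*,$$

which is bilinear, so it induces

$$\varphi : V^* \otimes W^* \to (V \otimes W)^*.$$

Note that $\varphi(f \otimes g)$ corresponds to the map $V \otimes W \to K$ taking $v \otimes w \mapsto f(v)\, g(w)$.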

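To show that this is an isomorphism, let $\{v_i\}$ and $\{w_j\}$ be bases of V and W respectively, with dual bases $\{v_i^*\}$ and $\{w_j^*\}$ of V* and W*. The map φ then takes $v_i^* \otimes w_j^*$ to the linear map $V \otimes W \to K$ which takes

$$v_k \otimes w_l \mapsto v_i^*(v_k)\, w_j^*(w_l) = \delta_{ik}\, \delta_{jl}.$$

But this is precisely the dual basis of $\{v_i \otimes w_j\}$, so φ takes the basis $\{v_i^* \otimes w_j^*\}$ of V*⊗W* to a basis of (V⊗W)*, namely the dual of $\{v_i \otimes w_j\}$. Hence φ is an isomorphism.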

The second case is left as an exercise. ♦
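Here is a quick numerical sanity check of the first case. It assumes V = K^m and W = K^n with standard bases. Then the dual bases are the unit row vectors, and φ(f⊗g) is represented by the Kronecker product of the row vectors f and g. The helpers `kron`, `dot` and `unit` are hypothetical names for this sketch.

```python
def kron(a, b):
    """Coordinates of a ⊗ b, entry (i, j) at index i*len(b) + j."""
    return [ai * bj for ai in a for bj in b]

def dot(x, y):
    """Pairing of a row vector with a column vector."""
    return sum(xi * yi for xi, yi in zip(x, y))

def unit(k, size):
    """k-th standard basis vector (or, read as a row, the k-th dual basis vector)."""
    return [1 if t == k else 0 for t in range(size)]

# Assumption: m = 2, n = 3, arbitrary integer vectors.
m, n = 2, 3
f, g = [4, -1], [2, 0, 5]     # f in V*, g in W* (row vectors)
v, w = [1, 3], [-2, 1, 1]     # v in V, w in W (column vectors)

# phi(f ⊗ g) evaluated on v ⊗ w equals f(v) g(w)
lhs = dot(kron(f, g), kron(v, w))
rhs = dot(f, v) * dot(g, w)

# phi sends v_i* ⊗ w_j* to the functional dual to v_i ⊗ w_j
is_dual = all(
    dot(kron(unit(i, m), unit(j, n)), kron(unit(k, m), unit(l, n))) == int(i == k and j == l)
    for i in range(m) for j in range(n) for k in range(m) for l in range(n)
)
print(lhs, rhs, is_dual)  # 1 1 True
```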

Note

Here’s one convenient way to visualize the above. Suppose elements of V are column vectors. Then V* is the space of row vectors, and evaluation V* × V → K corresponds to multiplying a row vector by a column vector, which gives a scalar. So V*⊕W* ≅ (V⊕W)* follows quite easily: the LHS concatenates two spaces of row vectors, while the RHS concatenates two spaces of column vectors and then turns the result into a space of row vectors.

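The tensor product is a little trickier: for V and W we take column vectors with entries $(a_1, \ldots, a_m)^T$ and $(b_1, \ldots, b_n)^T$ respectively. Then we form the column vector with mn entries

$$(a_1 b_1,\ a_1 b_2,\ \ldots,\ a_1 b_n,\ a_2 b_1,\ \ldots,\ a_m b_n)^T.$$

This lets us see why V*⊗W* ≅ (V⊗W)*: in both cases we get a row vector with mn entries. Finally, to obtain V*⊗W we take row vectors $(c_1, \ldots, c_m)$ for elements of V* and column vectors $(d_1, \ldots, d_n)^T$ for those of W. Multiplying the column vector by the row vector gives an n × m matrix, which represents a linear map V→W:

$$\begin{pmatrix} d_1 \\ \vdots \\ d_n \end{pmatrix} \begin{pmatrix} c_1 & \cdots & c_m \end{pmatrix} = \begin{pmatrix} d_1 c_1 & \cdots & d_1 c_m \\ \vdots & \ddots & \vdots \\ d_n c_1 & \cdots & d_n c_m \end{pmatrix}.$$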
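The matrix picture for V*⊗W can be checked directly. The sketch below uses plain Python lists and hypothetical helper names. It forms the n × m matrix $dc$ for a row vector c representing f ∈ V* and a column vector d representing w ∈ W. It then confirms that applying this matrix to v gives f(v)w, matching the map $v \mapsto f(v)\,w$ from the proposition.

```python
def outer(d, c):
    """Column vector d (n entries) times row vector c (m entries): an n x m matrix."""
    return [[dj * ci for ci in c] for dj in d]

def apply(M, a):
    """Matrix-vector product M a."""
    return [sum(row[i] * a[i] for i in range(len(a))) for row in M]

# Assumption: V = K^3 (m = 3), W = K^2 (n = 2), arbitrary integer entries.
c = [1, 2, -1]   # f in V*, as a row vector
d = [3, 0]       # w in W, as a column vector
a = [2, 1, 1]    # v in V

M = outer(d, c)                                   # matrix of f ⊗ w, shape 2 x 3
f_of_a = sum(ci * ai for ci, ai in zip(c, a))     # f(v) = 3
print(M, apply(M, a), [f_of_a * dj for dj in d])  # [[3, 6, -3], [0, 0, 0]] [9, 0] [9, 0]
```

Note the shape: the matrix has n rows and m columns because it eats an m-entry vector from V and returns an n-entry vector in W.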

Question

Consider the map V* × V → K which takes (f, v) to f(v). This is bilinear, so it induces a linear map ε : V*⊗V → K. On the other hand, when V is finite-dimensional, V*⊗V is naturally isomorphic to End(V), the space of K-linear maps V→V (take W = V in the proposition above). If we represent elements of End(V) as square matrices, what does ε correspond to?

[ Answer: the trace of the matrix. ]
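Here is a small sketch of why the answer is the trace, assuming V = K^3 with standard coordinates. A general element of V*⊗V is a finite sum $\sum_k f_k \otimes u_k$. Its matrix is $\sum_k u_k f_k$ (column times row). The trace of that matrix is $\sum_k \sum_i (u_k)_i (f_k)_i = \sum_k f_k(u_k)$, which is exactly ε. The particular vectors below are arbitrary.

```python
def outer(u, f):
    """Column vector u times row vector f: the matrix of the map v -> f(v) u."""
    return [[ui * fj for fj in f] for ui in u]

def add(A, B):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(A, B)]

def trace(A):
    return sum(A[i][i] for i in range(len(A)))

# Assumption: V = K^3; the tensor is f_1 ⊗ u_1 + f_2 ⊗ u_2 with arbitrary entries.
pairs = [([1, 0, 2], [3, 1, 0]), ([0, -1, 1], [2, 2, 5])]   # (f_k, u_k)

# epsilon: evaluate each f_k on u_k and add up
epsilon = sum(sum(fi * ui for fi, ui in zip(f, u)) for f, u in pairs)

# The same tensor viewed as a matrix in End(V)
matrix = [[0] * 3 for _ in range(3)]
for f, u in pairs:
    matrix = add(matrix, outer(u, f))
print(epsilon, trace(matrix))  # 6 6
```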

Tensor Algebra

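Given a vector space V, let us consider the n-fold tensor power

$$T^n(V) = V^{\otimes n} = \underbrace{V \otimes V \otimes \cdots \otimes V}_{n \text{ copies}}, \qquad T^0(V) = K,$$

and let T(V) be the direct sum $T(V) = \bigoplus_{n \ge 0} T^n(V)$. This gives an associative algebra over K by extending the bilinear map

$$T^m(V) \times T^n(V) \to T^{m+n}(V), \quad (v_1 \otimes \cdots \otimes v_m,\ v'_1 \otimes \cdots \otimes v'_n) \mapsto v_1 \otimes \cdots \otimes v_m \otimes v'_1 \otimes \cdots \otimes v'_n$$

to the entire space T(V) × T(V) → T(V). Note that it is not commutative in general. For example, suppose V has a basis {x, y, z}. Then

- $T^2(V)$ has basis $\{xx, xy, xz, yx, yy, yz, zx, zy, zz\}$, where we have shortened the notation $x \otimes y$ to $xy$, etc.

- $T^3(V)$ has basis $\{xxx, xxy, xxz, \ldots, zzz\}$, with 27 elements.

- Multiplying x by y gives $x \cdot y = xy$, whereas $y \cdot x = yx$; these are distinct basis elements of $T^2(V)$, so $xy \ne yx$.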
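The multiplication in T(V) is easy to model when V has a finite basis with single-letter names. Store an element as a dictionary from words to coefficients: a word such as xy stands for the basis tensor x⊗y, and the empty word stands for 1 ∈ T^0(V) = K. Multiply by concatenating words and extending bilinearly. This is a hypothetical representation chosen for illustration, not a library API.

```python
from collections import defaultdict
from itertools import product

def multiply(s, t):
    """Product in T(V) for elements stored as {word: coefficient}.

    The word 'xy' stands for x ⊗ y; the product of two basis words is their
    concatenation, extended bilinearly to sums."""
    result = defaultdict(int)
    for u, a in s.items():
        for v, b in t.items():
            result[u + v] += a * b
    return {w: c for w, c in result.items() if c != 0}

# Assumption: V has basis {x, y, z}.
x, y, z = {"x": 1}, {"y": 1}, {"z": 1}
print(multiply(x, y), multiply(y, x))  # {'xy': 1} {'yx': 1}

# Basis of T^3(V): all words of length 3 in x, y, z
basis_T3 = ["".join(p) for p in product("xyz", repeat=3)]
print(len(basis_T3))  # 27
```

For instance, multiplying $x + 2yz$ by y in this model gives $xy + 2yzy$.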

The algebra T(V), called the tensor algebra of V, satisfies the following universal property.

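Theorem. The natural map ψ : V → T(V), identifying V with $T^1(V)$, is a linear map such that:

- for any associative K-algebra A and K-linear map φ: V → A, there is a unique K-algebra homomorphism f: T(V) → A such that φ = fψ.

Thus, composing with ψ gives a bijection

$$\mathrm{Hom}_{K\text{-alg}}(T(V), A) \to \mathrm{Hom}_K(V, A), \quad f \mapsto f\psi.$$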
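To see the universal property in action, here is a sketch with a hypothetical choice of A (2×2 integer matrices) and of φ on the basis {x, y, z}. Elements of T(V) are represented as dictionaries from words to coefficients, with the empty word standing for 1 ∈ K. Since T(V) is generated as an algebra by V, any algebra homomorphism extending φ must send a word to the corresponding product of matrices. That is exactly what `f` computes.

```python
# Assumption: A = 2x2 integer matrices, and phi is an arbitrary choice on the basis {x, y, z}.
IDENTITY = [[1, 0], [0, 1]]
ZERO = [[0, 0], [0, 0]]

def mat_mul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(2)) for j in range(2)] for i in range(2)]

def mat_add(A, B):
    return [[A[i][j] + B[i][j] for j in range(2)] for i in range(2)]

def scale(c, A):
    return [[c * A[i][j] for j in range(2)] for i in range(2)]

phi = {"x": [[0, 1], [0, 0]], "y": [[0, 0], [1, 0]], "z": [[1, 0], [0, -1]]}

def f(element):
    """Extend phi to an algebra homomorphism T(V) -> A: the word x1...xk goes to
    phi(x1)...phi(xk), the empty word goes to the identity, and the result is
    extended linearly over the coefficients."""
    total = ZERO
    for word, coeff in element.items():
        M = IDENTITY
        for letter in word:
            M = mat_mul(M, phi[letter])
        total = mat_add(total, scale(coeff, M))
    return total

print(f({"xy": 1}), f({"yx": 1}))  # [[1, 0], [0, 0]] [[0, 0], [0, 1]]
print(f({"xy": 1, "yx": 1}))       # [[1, 0], [0, 1]]
```

Because A here is itself non-commutative, f sends xy and yx to different matrices. This is consistent with T(V) imposing no relations between xy and yx.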

However, often we would like multiplication to be commutative (e.g. when dealing with polynomials), in which case we use the symmetric algebra instead. Or we would like multiplication to be anti-commutative, i.e. xy = −yx (e.g. when dealing with differential forms), in which case we use the exterior algebra instead. We will say more about these when the need arises.