[ Warning: this is primarily an expository article, so the proofs are not airtight, but they should be sufficiently convincing. ]

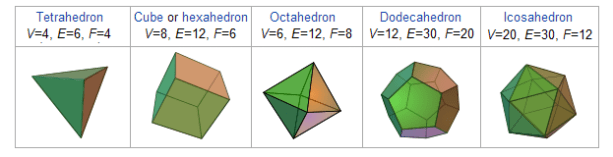

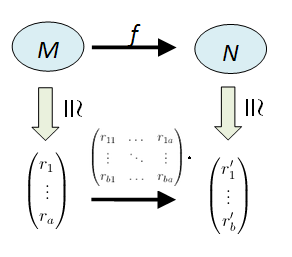

The five platonic solids were well-known among the ancient Greeks (V, E, F denote the number of vertices, edges and faces respectively):

[ Images edited from wikipedia.org. ]

In all cases, we have V–E+F=2. In fact, the same equality holds for any polyhedron which can be “deformed” into the surface of a ball. For example, if we have a pyramid with an n-gon as a base, then V=n+1, E=2n, F=n+1 which gives V–E+F=2. Or, we can glue a pyramid with a square base to a cube (assuming the side lengths match), and obtain V=9, E=16, F=9. Let’s state and prove this result.

Theorem 1. A convex polyhedron whose surface comprises of V vertices, E edges and F faces satisfies V–E+F=2.

Proof

Being convex, the polyhedron can be enclosed in a sphere and its surface projected bijectively to the surface of the sphere. This gives a partition of the sphere’s surface into polygons (called a cellular decomposition). For any two cellular decompositions P and P’, we say that P’ is a refinement of P if all vertices and edges of P are also in P’.

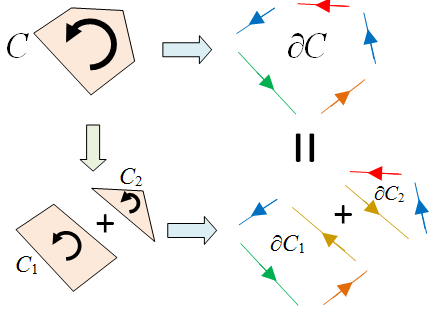

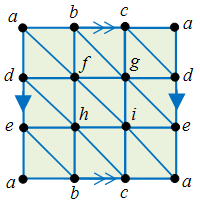

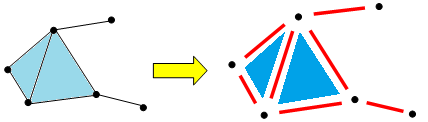

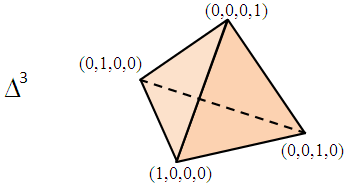

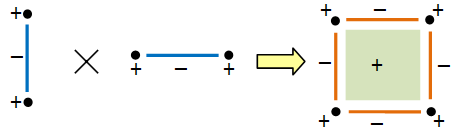

Since any two cellular decompositions have a common refinement, it suffices to show that if P’ is a refinement of P, then they have the same V–E+F. Now any refinement may be obtained from a sequence of steps, each of which is one of the two following types:

The left step changes (V, E, F) to (V+1, E+1, F) since it adds a vertex and an edge without introducing any new face. The right step changes (V, E, F) to (V, E+1, F+1). In both cases, there is no change to V–E+F. Hence this value is constant across all decompositions of the sphere and picking any single example gives V–E+F=2. ♦

The above proof, while simple, has a few hidden pitfalls for the unwary. For more details, the interested reader may refer to Imre Lakatos’ book “Proofs and Refutations” for a rather in-depth look at the underlying issues as well as their historical relevance.

The simple V–E+F formula has some interesting applications.

Example 1

Let’s prove that there are at most 5 platonic solids. Suppose we have a polyhedron with F faces, each of which is an n-gon with m edges meeting at each vertex. Then E = Fn/2 and V = 2E/m. The formula V–E+F = 2 gives

Since m, n ≥ 3, it’s easy to see that this inequality only holds for (m, n) = (3, 3), (3, 4), (3, 5), (4, 3), (5, 3), after which one can show that each case has at most one platonic solid.

Example 2

The utility graph cannot be drawn on the sphere (and hence on the plane) without intersecting edges. Indeed, if it could, we would have V=6, E=9, and so F = 2-V+E = 5. But since the graph has no triangles, each face has at least 4 edges and this gives at least 5×4/2 = 10 edges, which is a contradiction.

Exercise. Prove that the complete graph with 5 vertices cannot be drawn on the sphere without intersecting edges. Read up on planar graphs.

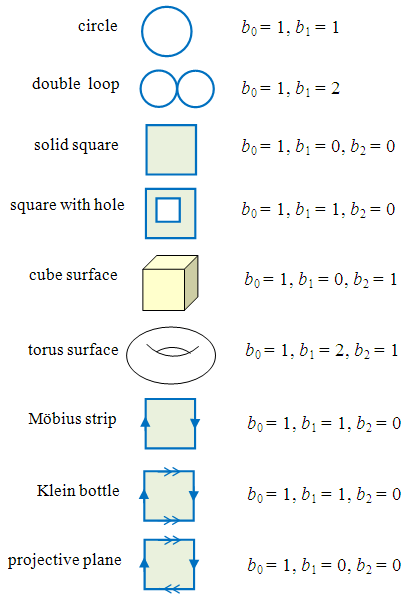

Other Surfaces

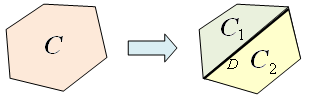

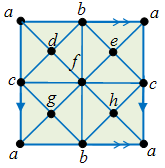

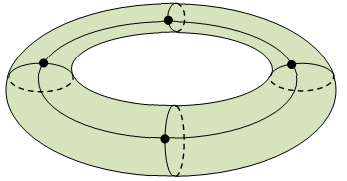

A closer look at the proof of thereom 1 indicates that V–E+F is constant for any cellular decomposition of a suitably nice surface. For example, on the surface of a torus (doughnut), the following decomposition gives – after unfolding – four faces of rectangles.

Hence V=4, E=8, F=4, and V–E+F=0. This suggests that V–E+F is an indication of the global topological property of the shape. We will call this the Euler characteristic of the surface. If we partition the surface of a two-holed doughnut, we find that its Euler characteristic is -2, and so on. This suggests the following.

Proposition 2.

The Euler characteristic of the surface of a g-holed torus is 2-2g.

We will leave the proof to the reader since it is a matter of straightforward computation.

In fact, we don’t have to restrict ourselves to smooth surfaces (the formal terminology is called closed manifolds); let’s look at some surfaces with edges.

Example 3

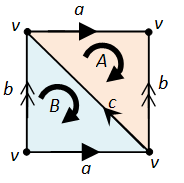

For a square, we have V=4, E=4, F=1, so the Euler characteristic = 1. Or, we can partition it into two triangles and get V=4, E=5, F=2.

Example 4

Consider the Möbius strip which is obtained by gluing a pair of opposite sides of a square by flipping over one edge. By dividing the square into two triangles, we get V=2 (since A=D, B=C), E=4 (since AC=DB) and F=2, so the Euler characteristic is 0.

Example 5

The Klein bottle is obtained by gluing the two opposite sides of the Möbius strip together, i.e. AB to CD (preserving the direction). This gives V=1 (since A=B=C=D), E=3 and F=2 so the Euler characteristic is 0.

Example 6

The projective plane is obtained by gluing the oppposite edges of a square in opposite directions, as follows.

This gives V=2 (since the opposite vertices of the square are glued together), E=2, F=1, and the Euler characteristic is 1.

Example 7

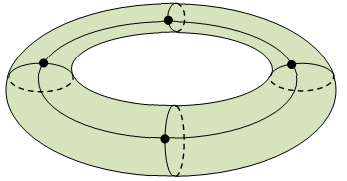

In fact, you don’t even need the surface to be connected. Indeed, it’s clear that if X is the topological disjoint union of M and N, and χ(X) denotes the Euler characteristic of X, then

More generally, we have the following result:

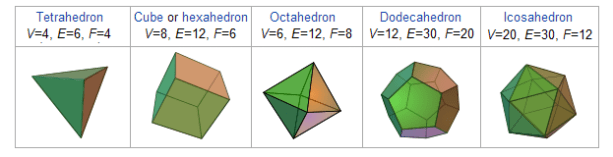

Principle of Inclusion and Exclusion. Suppose X is the union of M and N. Then  .

.

Proof

Find a cellular decomposition of M and of N, then form a decomposition of X which is a refinement of both. The result then follows easily by counting the number of times each vertex/edge/face appears in M and N. ♦

Exercise

- Draw the utility graph on the surface T of a torus such that the edges do not intersect. [Solution.]

- Do the same with the complete graph on 5 vertices.

- Find the largest n for which we can embed the complete graph on n vertices on T.

- Read up on toroidal graphs, or more generally, topological graph theory.

Other Dimensions

The Euler characteristic can be generalised to objects of arbitrary dimensions. For one dimensional objects, they can be represented by graphs. E.g. the nuclear disarmament symbol can be represented by a graph with V=5 vertices and E=8 edges so the Euler characteristic is now χ = V–E = -3.

Graphically, χ = 1-c where c is the number of “holes” in the diagram. It’s easy to show that the Euler characteristic χ = V–E for 1-dimensional objects is well-defined, i.e. independent of the graph representation we pick. Indeed, the proof is identical to before (that of theorem 1), except that in this case we can only add a vertex to an edge, since connecting two vertices completely changes the object.

Thus, for a general geometric object, we can add a vertex to break an edge into two, or an edge to break a face into two, or a face to divide a block into two, … , as illustrated by the following (B denotes the number of 3-dimensional blocks):

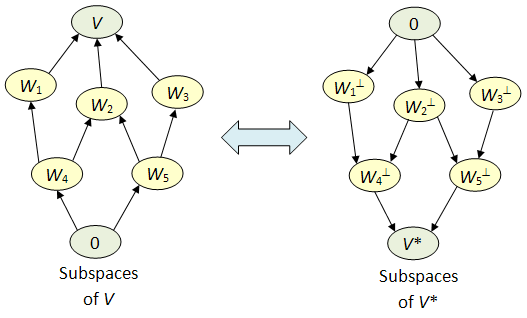

This shows that for a 3-dimensional object, its Euler characteristic χ = V–E+F–B is constant. Clearly we can generalise this to arbitrarily many dimensions: if a cellular decomposition of M comprises of  cells of dimension i, then its Euler characteristic

cells of dimension i, then its Euler characteristic

is independent of the decomposition.

Example 8

Consider a solid cube. We already know that the surface of a cube satisfies V–E+F=2. Hence, the solid cube has χ = 2-1 = 1.

Example 9

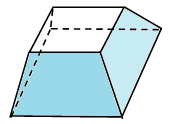

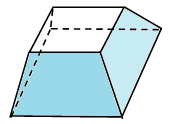

Now consider a solid cube with a small cubical hole at the centre. We could partition this solid as a union of 6 pieces of the form:

which gives us B=6, F=24, E=32, V=16 (after some careful counting!) and so χ=2.

Or, we could also use the principle of inclusion and exclusion (whose proof clearly generalises to any geometric shapes). If M is the above cube with a hole, and N is the small cube at the centre, then their union is the large cube and their intersection is the surface of a cube, so we have:

which we already know is 2.

Example 10

Consider the case of dimension 0, where we have a discrete set of points. The Euler characteristic is then the cardinality of this set (i.e. number of elements in it).

Simplicial Complexes

Let us now define in a more precise manner what objects we’re looking at. Heuristically, we start with a set S of points. A simplicial complex is then represented by a collection C of subsets of S such that if  , then every subset of T also lies in C.

, then every subset of T also lies in C.

For example, if S = {1, 2, 3, 4} and C = { Ø, {1}, {2}, {3}, {4} {1,2}, {2,3}, {1,3}, {2,4}, {1,2,3} }, then the corresponding simplicial complex is:

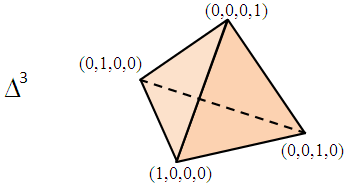

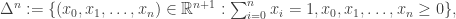

The formal topological definition is as follows: the n-dimensional simplex is the set

or its affine transform. For example, here’s a 3-dimensional simplex.

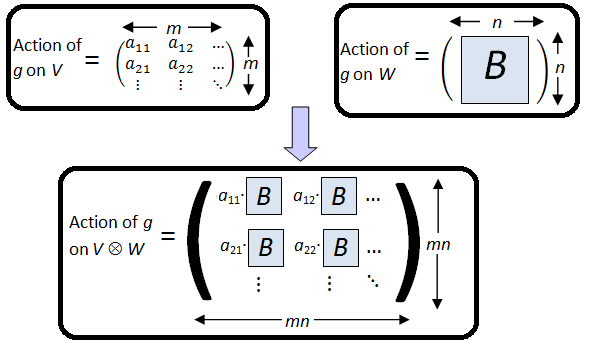

The boundary of  comprises of (n+1) simplices of dimension (n-1), each corresponding to an 0 ≤ i ≤ n, for which we take the set of

comprises of (n+1) simplices of dimension (n-1), each corresponding to an 0 ≤ i ≤ n, for which we take the set of  satisfying

satisfying  Now take each element

Now take each element  and form the corresponding simplex

and form the corresponding simplex

Now the simplicial complex for Σ is the topological space which is the subspace  In other words, the objects we’re looking are topological spaces which are homeomorphic to a simplicial complex. Note that even though the construction of a simplicial complex only uses line segments, triangles, tetrahedrons, and their higher-dimensional counterparts, its cellular decomposition may use more general shapes such as quadrilaterals, pentagons, cubes etc.

In other words, the objects we’re looking are topological spaces which are homeomorphic to a simplicial complex. Note that even though the construction of a simplicial complex only uses line segments, triangles, tetrahedrons, and their higher-dimensional counterparts, its cellular decomposition may use more general shapes such as quadrilaterals, pentagons, cubes etc.

Note: simplicial complexes are somewhat restrictive. A more general theory involves CW-complexes. The differences are subtle, and while CW-complexes may define some structures which are not homeomorphic to any simplicial complex (see here for some anomalous examples), the two theories are equivalent at the level of homotopic equivalence, which we will define in the next article.

Multiplicativity of χ

Finally, we have the following result.

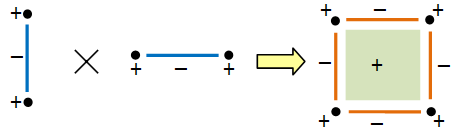

Theorem. If M and N are geometric objects, we have χ(M × N) = χ(M) × χ(N).

Proof

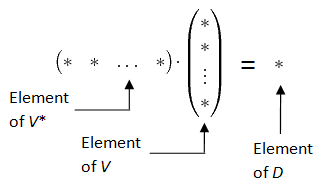

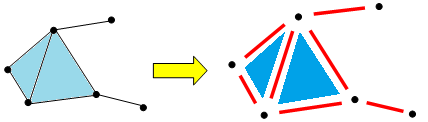

First, note that each cellular decomposition of a geometric object gives a disjoint union of the underlying set of points:

Write  and

and  as disjoint unions in this way (some of the Mi‘s or Nj‘s may have the same dimension). Then their Euler characteristics are given by:

as disjoint unions in this way (some of the Mi‘s or Nj‘s may have the same dimension). Then their Euler characteristics are given by:

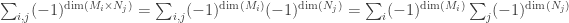

Now  and

and  so the Euler characteristic of M × N is:

so the Euler characteristic of M × N is:

which is χ(M)χ(N). ♦

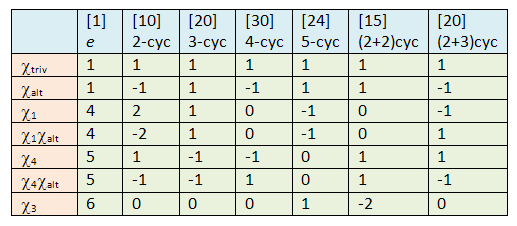

Here’s a graphical representation of the above proof.

,

for any simple K[G]-module V. Thus, their characters actually form an orthonormal set over K. The next theorem we want to show is:

, where V runs through all simple K[G]-modules, forms an orthonormal basis of the space of class functions.

Let

for any g in G. Thus α commutes with every element of K[G]. If V is a simple K[G]-module, let m : V → V be the multiplication-by-α map; since α commutes with every element of K[G], m is a K[G]-linear map. From above, we know that EndK[G](V) = K, so m is a scalar map represented by λ ∈ K.

runs through all characters of simple modules and g runs through conjugancy classes, we obtain a k × k tables for the values

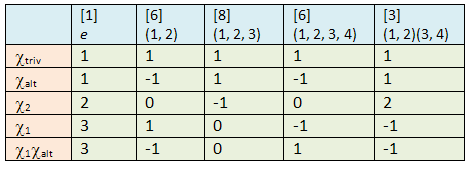

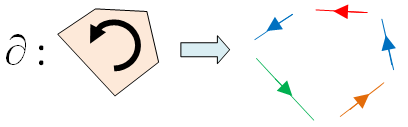

Here are the character tables for S4 and S5:

and

are orthogonal:

the last character has dimension 2. The other entries are easy to compute:

Is W simple?

where V is the simple C[G]-module with character χ = (2, -2, 0, 0, 0) above. Thus if W is not simple, we must have

for some R[G]-module V, such that dimRV = 2.

so

and so ρ(g) has eigenvalues ±√-1. From the character table, its trace is zero so the eigenvalues are +√-1 and -√-1.