Throughout this article, a general ring is denoted R while a division ring is denoted D.

Dimension of a Vector Space

First, let’s consider the dimension of a vector space V over D, denoted dim(V). If W is a subspace of V, we proved earlier that any basis of W can be extended to give a basis of V, thus dim(W) ≤ dim(V).

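Furthermore, we claim that if $\{v_i + W\}_{i \in I}$ is a basis of the quotient space V/W, then the $v_i$’s, together with a basis $\{w_j\}_{j \in J}$ of W, form a basis of V:

- If $\sum_i a_i v_i + \sum_j b_j w_j = 0$ for some $a_i, b_j \in D$, its image in V/W gives $\sum_i a_i (v_i + W) = 0$ and thus each $a_i$ is zero. This gives $\sum_j b_j w_j = 0$; since $\{w_j\}$ forms a basis of W, each $b_j = 0$. This proves that $\{v_i\} \cup \{w_j\}$ is linearly independent.

- Let $v \in V$. Its image v+W in V/W can be written as a linear combination $v + W = \sum_i a_i (v_i + W)$ for some $a_i \in D$. Hence $v - \sum_i a_i v_i$ lies in W and can be written as a linear combination of the $w_j$. So v can be written as a linear combination of $\{v_i\} \cup \{w_j\}$.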

Conclusion: dim(W) + dim(V/W) = dim(V). Now if f : V → W is any homomorphism of vector spaces, the first isomorphism theorem tells us that V/ker(f) is isomorphic to im(f). Hence, dim(V) = dim(ker(f)) + dim(im(f)).
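As a quick sanity check of dim(V) = dim(ker(f)) + dim(im(f)), here is a minimal Python sketch over the field ℚ (a special case of a division ring). The matrix `A` and the helper names `rref` and `null_space` are illustrative assumptions, and the map is taken to act on column vectors.

```python
from fractions import Fraction

def rref(rows):
    """Return (reduced row echelon form, pivot columns) of a matrix over Q."""
    m = [[Fraction(x) for x in row] for row in rows]
    pivots, r = [], 0
    ncols = len(m[0]) if m else 0
    for c in range(ncols):
        # Look for a row at or below r with a nonzero entry in column c.
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        pivot = m[r][c]
        m[r] = [x / pivot for x in m[r]]
        for i in range(len(m)):
            if i != r and m[i][c] != 0:
                factor = m[i][c]
                m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
    return m, pivots

def null_space(rows, ncols):
    """Basis of {v : (rows) v = 0}, one vector per free column."""
    m, pivots = rref(rows)
    basis = []
    for fc in (c for c in range(ncols) if c not in pivots):
        v = [Fraction(0)] * ncols
        v[fc] = Fraction(1)
        for r, pc in enumerate(pivots):
            v[pc] = -m[r][fc]
        basis.append(v)
    return basis

# Hypothetical example: f : Q^4 -> Q^3, v |-> A v.
A = [[1, 2, 3, 4],
     [2, 4, 6, 8],
     [0, 1, 1, 0]]

dim_im = len(rref(A)[1])        # rank of A = dim(im f)
kernel = null_space(A, 4)
dim_ker = len(kernel)           # dim(ker f)

# Each computed kernel vector really is sent to 0 by A.
for v in kernel:
    assert all(sum(a * x for a, x in zip(row, v)) == 0 for row in A)

assert dim_ker + dim_im == 4    # dim(V) = dim(ker f) + dim(im f)
```

Exact rational arithmetic (`Fraction`) is used so that the rank is not affected by floating-point rounding.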

If V is finite-dimensional and dim(V) = dim(W), then:

- (f is injective) iff (ker(f) = 0) iff (dim(ker(f)) = 0) iff (dim(im(f)) = dim(V)) iff (dim(im(f)) = dim(W)) iff (im(f) = W) iff (f is surjective).

Thus, (f is injective) iff (f is surjective) iff (f is an isomorphism).

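For infinite-dimensional V and W, take the free vector spaces $V = W = D^{(\mathbb{N})}$ (sequences in D with only finitely many nonzero terms) and let f : V → W take the tuple $(x_1, x_2, x_3, \ldots)$ to $(0, x_1, x_2, \ldots)$.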

Then f is injective but not surjective.
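To make this concrete, the sketch below assumes the map in question is the right shift $(x_1, x_2, \ldots) \mapsto (0, x_1, x_2, \ldots)$ on finitely supported sequences, stored as Python tuples. The names `shift` and `unshift` are hypothetical, and the checks only sample a few inputs rather than prove anything.

```python
def shift(x):
    """Right shift on finitely supported sequences, stored as tuples."""
    return (0,) + tuple(x)

def unshift(y):
    """Drop the first coordinate; a left inverse of shift."""
    return tuple(y[1:])

samples = [(), (1,), (2, 0, 5), (0, 0, 7)]

# Injective: shift has a left inverse, so distinct inputs stay distinct.
assert all(unshift(shift(x)) == x for x in samples)

# Not surjective: every image has first coordinate 0,
# so the sequence (1, 0, 0, ...) is never in the image.
assert all(shift(x)[0] == 0 for x in samples)
```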

Over a general ring, even if M and N are free modules, the kernel and image of f : M → N may not be free. This follows from the fact that a submodule of a free module is not free in general, as we saw earlier. Hence it doesn’t make sense to talk about dim(ker(f)) and dim(im(f)) for such cases.

In a Nutshell. The main results are:

- for a D-linear map f : V → W, dim(V) = dim(ker(f)) + dim(im(f));

- if dim(V) = dim(W), then f is injective iff it is surjective.

Matrix Algebra

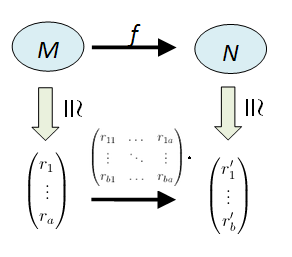

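Recall that an R-module M is free if and only if it has a basis $\{m_i\}_{i \in I}$, in which case we can identify $M \cong \bigoplus_{i \in I} R$ via $(r_i)_{i \in I} \mapsto \sum_{i \in I} r_i m_i$.

Let’s restrict ourselves to the case of finite free modules, i.e. modules with finite bases. If $M \cong R^a$ and $N \cong R^b$, the group of homomorphisms is identified with $\text{Hom}_R(M, N) \cong \text{Hom}_R(R^a, R^b)$, which we describe in terms of b × a matrices in R.

Let’s make this identification a bit more explicit. Pick a basis $\{m_1, \ldots, m_a\}$ of M and $\{n_1, \ldots, n_b\}$ of N. We have:

$M = Rm_1 \oplus \cdots \oplus Rm_a$ and $N = Rn_1 \oplus \cdots \oplus Rn_b$.

A module homomorphism f : M → N is expressed as a matrix as follows: write $f(m_j) = \sum_{i=1}^{b} c_{ij} n_i$ for j = 1, …, a. The matrix of f is then the b × a matrix $(c_{ij})$, whose j-th column lists the coefficients of $f(m_j)$.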

Example 1

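Take $R = \mathbb{R}$, the field of real numbers, and

$M = \{a + bx + cx^2 : a, b, c \in \mathbb{R}\}$ and $N = \{a + bx : a, b \in \mathbb{R}\}$,

where x is an indeterminate here. The map f : M → N given by f(p(x)) = dp/dx is easily checked to be $\mathbb{R}$-linear.

Pick basis $\{1, x, x^2\}$ of M and $\{1, x\}$ of N. Since f(1) = 0, f(x) = 1 and $f(x^2) = 2x$, the resulting f takes $a + bx + cx^2 \mapsto b + 2cx$. Hence, the matrix corresponding to these bases is $\begin{pmatrix} 0 & 1 & 0 \\ 0 & 0 & 2 \end{pmatrix}$.

On the other hand, if we pick basis $\{1+x, -x, 1+x^2\}$ of M and basis $\{1+x, 1+2x\}$ of N, then

$f(1+x) = 1 = 2(1+x) - (1+2x)$;

$f(-x) = -1 = -2(1+x) + (1+2x)$;

$f(1+x^2) = 2x = -2(1+x) + 2(1+2x)$,

which gives the matrix representation $\begin{pmatrix} 2 & -2 & -2 \\ -1 & 1 & 2 \end{pmatrix}$.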
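The two matrices of Example 1 can be recomputed mechanically. In this sketch a polynomial is stored as its list of coefficients in $1, x, x^2$, and the helper names `solve`, `derivative` and `matrix_of` are hypothetical. The idea is simply to express each $f(m_j)$ in the chosen basis of N and use the coordinates as the j-th column.

```python
from fractions import Fraction

def solve(basis, target):
    """Coordinates of `target` in `basis` (all given as coefficient lists)."""
    n = len(basis)
    # Augmented matrix: columns are the basis vectors, last column is target.
    m = [[Fraction(basis[j][i]) for j in range(n)] + [Fraction(target[i])]
         for i in range(n)]
    for c in range(n):
        p = next(i for i in range(c, n) if m[i][c] != 0)
        m[c], m[p] = m[p], m[c]
        pivot = m[c][c]
        m[c] = [x / pivot for x in m[c]]
        for i in range(n):
            if i != c:
                factor = m[i][c]
                m[i] = [a - factor * b for a, b in zip(m[i], m[c])]
    return [row[n] for row in m]

def derivative(p):
    """d/dx of a + b x + c x^2, stored as [a, b, c]; result stored as [b, 2c]."""
    return [p[1], 2 * p[2]]

def matrix_of(f, dom_basis, cod_basis):
    """Matrix whose j-th column holds the coordinates of f(dom_basis[j])."""
    cols = [solve(cod_basis, f(v)) for v in dom_basis]
    return [[cols[j][i] for j in range(len(cols))]
            for i in range(len(cod_basis))]

# Bases {1, x, x^2} and {1, x}.
A = matrix_of(derivative, [[1, 0, 0], [0, 1, 0], [0, 0, 1]], [[1, 0], [0, 1]])
# Bases {1+x, -x, 1+x^2} and {1+x, 1+2x}.
B = matrix_of(derivative, [[1, 1, 0], [0, -1, 0], [1, 0, 1]], [[1, 1], [1, 2]])

assert A == [[0, 1, 0], [0, 0, 2]]
assert B == [[2, -2, -2], [-1, 1, 2]]
```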

Example 2

Let M = {a + b√2 : a, b integers} which is a Z-module. Take f : M → M which takes z to (3-√2)z. It’s clear that f is a homomorphism of additive groups and hence Z-linear. Since the domain and codomain modules are identical (M), let’s pick a single basis.

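If we pick {1, √2}, then

$f(1) = 3 - \sqrt{2} = 3 \cdot 1 + (-1) \cdot \sqrt{2}$;

$f(\sqrt{2}) = -2 + 3\sqrt{2} = (-2) \cdot 1 + 3 \cdot \sqrt{2}$,

thus giving the matrix representation $\begin{pmatrix} 3 & -2 \\ -1 & 3 \end{pmatrix}$. Replacing the basis by {-1, 1+√2} would give us:

$f(-1) = -3 + \sqrt{2} = 4 \cdot (-1) + 1 \cdot (1 + \sqrt{2})$;

$f(1 + \sqrt{2}) = 1 + 2\sqrt{2} = 1 \cdot (-1) + 2 \cdot (1 + \sqrt{2})$,

with matrix representation $\begin{pmatrix} 4 & 1 \\ 1 & 2 \end{pmatrix}$.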
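Example 2 can also be replayed as a change of basis. The sketch below stores $a + b\sqrt{2}$ as the integer pair (a, b), builds the matrix A in the basis {1, √2}, and conjugates by the matrix P whose columns are the new basis vectors. The names `f`, `matmul`, `inverse_2x2`, `A`, `P` and `B` are assumptions made for illustration.

```python
def f(z):
    """Multiply a + b*sqrt(2), stored as (a, b), by 3 - sqrt(2)."""
    a, b = z
    return (3 * a - 2 * b, -a + 3 * b)

def matmul(X, Y):
    """Product of two integer matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]

def inverse_2x2(P):
    """Inverse of an integer 2x2 matrix with determinant +1 or -1."""
    (a, b), (c, d) = P
    det = a * d - b * c
    assert det in (1, -1), "not a change of basis over Z"
    # For det = +-1 we have 1/det == det, so the inverse stays integral.
    return [[d * det, -b * det], [-c * det, a * det]]

# Columns of A are f(1) and f(sqrt 2), written in the basis {1, sqrt 2}.
images = [f((1, 0)), f((0, 1))]
A = [[images[j][i] for j in range(2)] for i in range(2)]

# Columns of P are the new basis vectors -1 and 1 + sqrt 2.
P = [[-1, 1],
     [0, 1]]
B = matmul(inverse_2x2(P), matmul(A, P))

print(A)  # [[3, -2], [-1, 3]]
print(B)  # [[4, 1], [1, 2]]
```

Both matrices have trace 6 and determinant 7, as expected for two representations of the same map.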

Thus, the matrix representation for f : V → W depends on our choice of bases for V and W. If V = W, then it’s often convenient to pick the same basis.

Dual Module

We saw earlier that $\text{Hom}_R(R, M) \cong M$ as an R-module isomorphism. What about Hom(M, R) then?

Definition. The dual module of left-module M is defined to be

$M^* := \text{Hom}_R(M, R)$.

This is a right R-module, via the following right action:

- if $f \in M^*$ and $r \in R$, then the resulting $f \cdot r : M \to R$ takes $m \mapsto f(m) r$.

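From the universal property of direct sums and products, we see that:

$\left(\bigoplus_i M_i\right)^* \cong \prod_i M_i^*$.

Let’s check that we get a right-module structure on M*: indeed, $f \cdot (rs)$ takes m to $f(m)(rs) = (f(m) r) s = ((f \cdot r)(m)) s$, which is the image of $(f \cdot r) \cdot s$ acting on m.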

The module is called the dual because it’s a right module instead of a left one. Note that if N were a right-module, the resulting space Hom(N, R) of all right-module homomorphisms would give us a left module $N^*$, via $(r \cdot g)(n) := r \, g(n)$.

It’s not true in general that $M^{**} \cong M$, but it holds for finite-dimensional vector spaces over a division ring.

Theorem. If V is a finite-dimensional vector space over division ring D, then $V^{**} \cong V$.

Proof.

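Consider the map $V^* \times V \to D$ which takes (f, v) to f(v). Fixing f, we get a map $V \to D$, $v \mapsto f(v)$, which is a left-module homomorphism. Fixing v, we get a right-module homomorphism $V^* \to D$, $f \mapsto f(v)$, since (f·r) corresponds to the map $v \mapsto f(v) r$ by definition. This gives a left-module homomorphism

$\phi : V \to V^{**}$, $\phi(v) = (f \mapsto f(v))$.

Since V is finite dimensional, we have dim(V**) = dim(V*) = dim(V), so it suffices to show $\ker \phi = 0$. But if $v \neq 0$, we can extend {v} to a basis of V. Define a linear map f : V → D which takes v to 1 and all other basis elements to 0. Then $\phi(v)(f) = f(v) = 1$, so $\phi(v) \neq 0$. This shows that $\phi$ is injective and thus an isomorphism. ♦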

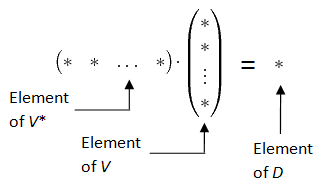

One way to visualise the duality is via this diagram:

Exercise

It’s tempting to define a module structure on Hom(M, R) via $(f \cdot r)(m) := f(rm)$. What’s wrong with this definition? [ Answer: the resulting f·r : M → R is not a left-module homomorphism. ]
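To see the issue concretely, the sketch below takes R to be the ring of 2 × 2 integer matrices and M = R as a left module over itself (an assumed test case; all names are hypothetical). It compares the right action $(f \cdot r)(m) = f(m) r$ from the definition of the dual module with the variant $m \mapsto r \, f(m)$, which for a left-module homomorphism f is the same map as $m \mapsto f(rm)$.

```python
def mul(x, y):
    """Product of 2x2 integer matrices stored as ((a, b), (c, d))."""
    return tuple(tuple(sum(x[i][k] * y[k][j] for k in range(2))
                       for j in range(2)) for i in range(2))

c = ((1, 2), (0, 1))
r = ((0, 1), (0, 0))
s = ((0, 0), (1, 0))
m = ((1, 0), (0, 1))

def f(x):
    """Right multiplication by c: a left-module homomorphism R -> R."""
    return mul(x, c)

def f_dot_r(x):
    """The right action from the definition: (f.r)(x) = f(x) r."""
    return mul(f(x), r)

def tempting(x):
    """The variant x |-> r f(x), which equals f(r x) because f is left-linear."""
    return mul(r, f(x))

# f.r respects left scalar multiplication ...
good_is_linear = f_dot_r(mul(s, m)) == mul(s, f_dot_r(m))
# ... but the variant does not, because r and s do not commute.
tempting_is_linear = tempting(mul(s, m)) == mul(s, tempting(m))

print(good_is_linear, tempting_is_linear)  # True False
```

The failure comes entirely from $rs \neq sr$; over a commutative ring both formulas define the same module structure.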

Dual Basis

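Suppose $\{v_1, \ldots, v_n\}$ is a basis of V. Let $f_i : V \to D$ (i = 1, …, n) be linear maps defined as follows:

$f_i(v_j) = 1$ if j = i, and $f_i(v_j) = 0$ if j ≠ i.

Each $f_i$ is well-defined by the universal property of the free module V. Using the Kronecker delta function, we can just write $f_i(v_j) = \delta_{ij}$. This is called the dual basis for $\{v_1, \ldots, v_n\}$.

[ Why is this a basis, you might ask? We know that dim(V*) = dim(V) = n, so it suffices to check that $\{f_1, \ldots, f_n\}$ is linearly independent. For that, we write $f_1 \cdot r_1 + \cdots + f_n \cdot r_n = 0$ for some $r_1, \ldots, r_n \in D$ (recall that V* is a right module). Then for each j = 1, …, n, we have $0 = \sum_i (f_i \cdot r_i)(v_j) = \sum_i f_i(v_j) r_i = r_j$ and we’re done. ]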

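Now if $v \in V$ and $f \in V^*$, we can write $v = \sum_i c_i v_i$ and $f = \sum_i f_i \cdot d_i$ for some $c_i, d_i \in D$. Then

$f(v) = \sum_{i,j} c_j f_i(v_j) d_i = \sum_i c_i d_i = \begin{pmatrix} c_1 & \cdots & c_n \end{pmatrix} \begin{pmatrix} d_1 \\ \vdots \\ d_n \end{pmatrix}$,

which is the product between a row vector & a column vector.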

One thus gets a natural inner product between a vector space and its dual. Recall that in a Euclidean vector space $\mathbb{R}^n$, there’s a natural inner product given by the usual dot product, which is inherent in the geometry of the space. However, for generic vector spaces, it’s hard to find a natural inner product. E.g. what would one be for the space of all polynomials of degree at most 2? Thus, the dual space provides a “cheap” and natural way to get an inner product.

Example

Consider the space $V = \{a + bx + cx^2 : a, b, c \in \mathbb{R}\}$ of polynomials of degree at most 2 over the reals $R = \mathbb{R}$. Examples of elements of V* are:

which takes

;

which takes

;

which takes

.

It’s easy to check that these three elements of V* are linearly independent and hence form a basis. Note: in this case, the base ring is a field so right modules are also left, i.e. V* and V are isomorphic as abstract vector spaces! However, there’s no “natural” isomorphism between them since in order to establish an isomorphism, one needs to pick a basis of V, a basis of V* and map the corresponding elements to each other. On the other hand, the isomorphism between V** and V is completely natural.
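A linear independence check of this kind can be done by writing each functional in the dual basis of $\{1, x, x^2\}$ and taking a determinant. The three functionals below (evaluation at 0, evaluation at 1, and integration over [0, 1]) are hypothetical choices made for illustration and need not be the ones intended in the example. The sketch also checks the pairing formula $f(v) = \sum_i c_i d_i$.

```python
from fractions import Fraction

# A polynomial c0 + c1 x + c2 x^2 is stored as its coordinates (c0, c1, c2).
def ev(t):
    """Evaluation at t, as a linear functional on polynomials."""
    return lambda p: sum(Fraction(c) * Fraction(t) ** i for i, c in enumerate(p))

def integral01(p):
    """Integral over [0, 1], as a linear functional on polynomials."""
    return sum(Fraction(c) / (i + 1) for i, c in enumerate(p))

def det3(m):
    (a, b, c), (d, e, f_), (g, h, k) = m
    return a * (e * k - f_ * h) - b * (d * k - f_ * g) + c * (d * h - e * g)

basis = [(1, 0, 0), (0, 1, 0), (0, 0, 1)]      # 1, x, x^2
functionals = [ev(0), ev(1), integral01]       # illustrative choices

# Row i holds (f(1), f(x), f(x^2)): the coordinates d of f in the dual basis.
D = [[f(v) for v in basis] for f in functionals]

# Nonzero determinant <=> the three functionals are linearly independent.
independent = det3(D) != 0

# Pairing check: f(p) equals the row-by-column product sum_i c_i d_i.
p = (2, -3, 6)                                 # 2 - 3x + 6x^2
pairing_ok = all(f(p) == sum(c * d for c, d in zip(p, row))
                 for f, row in zip(functionals, D))
```

Here `det3(D)` works out to −1/6, so these particular functionals do form a basis of V*.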

Exercise.

[ All vector spaces in this exercise are of finite dimension. ]

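Let $\{v_1, \ldots, v_n\}$ be a basis of V and $\{f_1, \ldots, f_n\}$ be its dual basis for V*. Denote the dual basis of $\{f_1, \ldots, f_n\}$ by $\{\alpha_1, \ldots, \alpha_n\}$ in V**. Prove that under the isomorphism $V \cong V^{**}$, we have $v_i \mapsto \alpha_i$.

Let $\{v_1, \ldots, v_n\}$ be a basis of V and $\{w_1, \ldots, w_m\}$ be a basis of W. If T : V → W is a linear map, then the matrix representation of T with respect to bases $\{v_i\}$, $\{w_j\}$ is denoted M.

- Prove that the map T* : W* → V* which takes g : W → D to the composition g ∘ T : V → D is a linear map of right modules.

- Let $\{f_1, \ldots, f_n\}$ be the dual basis of $\{v_i\}$ for V* and $\{g_1, \ldots, g_m\}$ be the dual basis of $\{w_j\}$ for W*. Prove that the matrix representation of T* with respect to bases $\{g_j\}$, $\{f_i\}$ is the transpose of M.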
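Before attempting the proof, the transpose claim can be checked numerically on one example. The sketch below works over ℚ (a field, so left and right modules coincide), uses standard bases, and picks the matrix `M` arbitrarily; all names are hypothetical. It relies only on the fact that the coordinates of a functional h in a dual basis are the values of h on the original basis vectors.

```python
M = [[0, 1, 0],
     [0, 0, 2]]          # T : V -> W with dim V = 3, dim W = 2

def apply(mat, v):
    """Apply a matrix to a column vector."""
    return [sum(row[k] * v[k] for k in range(len(v))) for row in mat]

def std(n, i):
    """i-th standard basis vector of length n."""
    return [1 if k == i else 0 for k in range(n)]

n, m = 3, 2

def T_star(j):
    """T*(g_j) = g_j o T, where g_j reads off the j-th coordinate in W."""
    return lambda v: apply(M, v)[j]

# Coordinates of T*(g_j) in the dual basis {f_i} are its values on v_1..v_n.
cols = [[T_star(j)(std(n, i)) for i in range(n)] for j in range(m)]

# Assemble the n x m matrix of T*, whose j-th column is cols[j].
M_star = [[cols[j][i] for j in range(m)] for i in range(n)]

transpose = [list(row) for row in zip(*M)]
assert M_star == transpose
```

This is only a check on one matrix; the exercise asks for the general argument.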

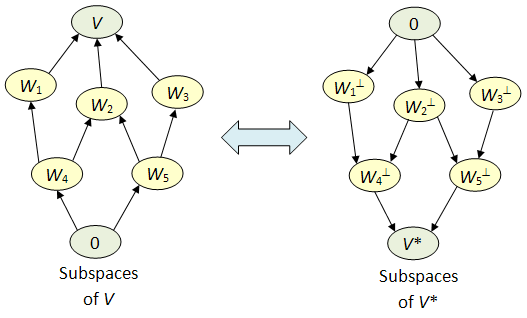

More on Duality

Let V be a finite-dimensional vector space over D and V* be its dual. We claim that there’s a 1-1 correspondence between subspaces of V and those of V*, which is inclusion-reversing. Let’s describe this:

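- if $W \subseteq V$ is a subspace, define $W^\perp := \{f \in V^* : f(w) = 0 \text{ for all } w \in W\}$;

- if $X \subseteq V^*$ is a subspace, define $X^\perp := \{v \in V : f(v) = 0 \text{ for all } f \in X\}$.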

The following preliminary results are easy to prove.

Proposition.

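- $W^\perp$ is a subspace of V*;

- $X^\perp$ is a subspace of V;

- if $W \subseteq W'$, then $W'^\perp \subseteq W^\perp$;

- if $X \subseteq X'$, then $X'^\perp \subseteq X^\perp$;

- $W \subseteq W^{\perp\perp}$ and $X \subseteq X^{\perp\perp}$.

We’ll skip the proof, though we’ll note that the above result in fact holds for any subsets $W \subseteq V$ and $X \subseteq V^*$. This observation also helps us to remember the direction of inclusion for $W \subseteq W^{\perp\perp}$ since in this general case, $W^{\perp\perp}$ is the subspace of V generated by W.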

The main thing we want to prove is the following:

Theorem. If $W \subseteq V$ is a subspace, then $W^{\perp\perp} = W$. Likewise if $X \subseteq V^*$ is a subspace, then $X^{\perp\perp} = X$.

Proof.

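Pick a basis $\{v_1, \ldots, v_k\}$ of W and extend it to a basis $\{v_1, \ldots, v_n\}$ of V, where dim(W) = k and dim(V) = n. Let $\{f_1, \ldots, f_n\}$ be the dual basis.

If $v \in V - W$, write $v = \sum_{i=1}^n r_i v_i$ where each $r_i \in D$. Since v is outside W, $r_j \neq 0$ for some j>k. This gives $f_j(v) = r_j \neq 0$ and $f_j \in W^\perp$ since j>k. Hence $v \notin W^{\perp\perp}$ and we have $W^{\perp\perp} \subseteq W$. Together with $W \subseteq W^{\perp\perp}$ from the proposition, this gives $W^{\perp\perp} = W$.

The case for X is obtained by replacing V with V* and identifying $V^{**} \cong V$. ♦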

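Thus we get the following correspondence:

$\{\text{subspaces } W \subseteq V\} \longleftrightarrow \{\text{subspaces } X \subseteq V^*\}$, given by $W \mapsto W^\perp$ and $X \mapsto X^\perp$.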

Furthermore, the dimensions “match”. E.g. suppose dim(V) = n, so dim(V*) = n. Then we claim that for any subspace W of V of dimension k,

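- $\dim(W^\perp) = n - k$;

- $V^*/W^\perp \cong W^*$ naturally.

Since dim(W*) = dim(W) = k, the first statement follows from the second. From results above, the inclusion map W → V induces a map of the dual spaces V* → W*, namely restriction of f to W. This map is surjective: a basis of W extends to a basis of V, so every linear map W → D extends to a linear map V → D. The kernel of this map is precisely the set of all $f \in V^*$ such that f(w) = 0 for all w in W, which is exactly $W^\perp$. By the first isomorphism theorem, $V^*/W^\perp \cong W^*$.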

This proves our claim. ♦
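Both the dimension count and the identity $W^{\perp\perp} = W$ can be checked on a small example over ℚ. In the sketch below, vectors of V and functionals in V* are both stored as coordinate lists (in a basis and its dual basis), so that f(v) is the row-by-column product and $W^\perp$ becomes a null space. The subspace `W` and the helper names are assumptions chosen for illustration.

```python
from fractions import Fraction

def rref(rows):
    """Return (reduced row echelon form, pivot columns) of a matrix over Q."""
    m = [[Fraction(x) for x in row] for row in rows]
    pivots, r = [], 0
    ncols = len(m[0]) if m else 0
    for c in range(ncols):
        p = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if p is None:
            continue
        m[r], m[p] = m[p], m[r]
        pivot = m[r][c]
        m[r] = [x / pivot for x in m[r]]
        for i in range(len(m)):
            if i != r and m[i][c] != 0:
                factor = m[i][c]
                m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        pivots.append(c)
        r += 1
    return m, pivots

def rank(rows):
    return len(rref(rows)[1])

def perp(rows, n):
    """Basis of all coordinate vectors pairing to 0 with every given row."""
    m, pivots = rref(rows)
    basis = []
    for fc in (c for c in range(n) if c not in pivots):
        v = [Fraction(0)] * n
        v[fc] = Fraction(1)
        for r, pc in enumerate(pivots):
            v[pc] = -m[r][fc]
        basis.append(v)
    return basis

n = 4
W = [[1, 0, 2, 0],
     [0, 1, 1, 0]]             # a 2-dimensional subspace of Q^4 (k = 2)

W_perp = perp(W, n)            # functionals vanishing on W
W_perp_perp = perp(W_perp, n)  # vectors killed by every such functional

k = rank(W)
assert len(W_perp) == n - k                    # dim(W^perp) = n - k
assert len(W_perp_perp) == k
# Same dimension and same combined span, hence W^perp^perp = W.
assert rank(W + W_perp_perp) == k
```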