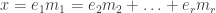

Continuing our discussion of modular representation theory, we will now discuss block theory. Previously, we saw that in any ring R, there is at most one way to write $1 = e_1 + e_2 + \ldots + e_r$, where $e_1, \ldots, e_r \in Z(R)$ is a set of orthogonal and centrally primitive idempotents. If such an expression exists, the $e_i$ are called block idempotents of R. For example, block idempotents exist when R is artinian.

We need the following refinement:

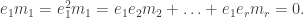

Lemma. Let $e_1, \ldots, e_r$ be block idempotents of R. Suppose $1 = f_1 + \ldots + f_s$, where the $f_j$ are orthogonal central idempotents. Then there exists a unique map $\phi : \{1, \ldots, r\} \to \{1, \ldots, s\}$ such that:

$$f_j = \sum_{i \,:\, \phi(i) = j} e_i \quad \text{for each } j = 1, \ldots, s.$$

Note: if $\phi^{-1}(j) = \emptyset$, the sum is zero, so $f_j = 0$.

Thus after a suitable renumbering of terms, we have:

$$1 = \underbrace{(e_1 + \ldots + e_{i_1})}_{f_1} + \underbrace{(e_{i_1+1} + \ldots + e_{i_2})}_{f_2} + \ldots + \underbrace{(e_{i_{s-1}+1} + \ldots + e_r)}_{f_s}.$$

Proof

For each i we have $e_i = e_i f_1 + \ldots + e_i f_s$, where $e_i f_1, \ldots, e_i f_s$ are orthogonal central idempotents. Since $e_i$ is centrally primitive, we must have $e_i f_j = e_i$ for some unique j and $e_i f_k = 0$ for all k≠j. The map $\phi : i \mapsto j$ then gives $e_i f_{\phi(i)} = e_i$ and $e_i f_j = 0$ for all $j \ne \phi(i)$. And so

$$f_j = (e_1 + \ldots + e_r) f_j = \sum_{i \,:\, \phi(i) = j} e_i.$$

♦
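The lemma can be illustrated in a small commutative ring. The sketch below is only an illustration under assumptions not in the text: it takes R = Z/30, whose block idempotents are 15, 10 and 6, and a coarser decomposition 1 = 25 + 6; the function name `block_map` is hypothetical.

```python
def block_map(n, blocks, coarse):
    """Return phi as a list: phi[i] = j exactly when blocks[i] * coarse[j] == blocks[i] in Z/n."""
    phi = []
    for e in blocks:
        # e is primitive and nonzero, so e*f is e for exactly one f and 0 for the others
        hits = [j for j, f in enumerate(coarse) if (e * f) % n == e % n]
        assert len(hits) == 1
        phi.append(hits[0])
    return phi

n = 30
blocks = [15, 10, 6]   # block idempotents of Z/30 (assumed example)
coarse = [25, 6]       # orthogonal idempotents with 25 + 6 = 1 in Z/30
phi = block_map(n, blocks, coarse)
print(phi)  # [0, 0, 1]
```

Each coarse idempotent is then the sum of the block idempotents mapped to it, e.g. 25 = 15 + 10.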

Decomposition of R-Modules

Let M be an R-module and suppose $1 = e_1 + \ldots + e_r$, where the $e_i$ are block idempotents of R. Then

$$M = e_1 M \oplus e_2 M \oplus \ldots \oplus e_r M.$$

- Indeed, $M = e_1 M + \ldots + e_r M$ since each $m \in M$ satisfies $m = 1 \cdot m = e_1 m + \ldots + e_r m$.

- On the other hand, if $e_1 m_1 + \ldots + e_r m_r = 0$, then multiplying by $e_i$ we have $e_i m_i = e_i (e_1 m_1 + \ldots + e_r m_r) = 0$ and thus every term $e_i m_i$ is zero.

Furthermore, since $e_i$ commutes with every r∈R, $e_i M$ is in fact an R-submodule. The central idempotent $e_i$ acts as the identity on $e_i M$ and zero on $e_j M$ for j≠i. One can thus imagine:

$$R = e_1 R \times \ldots \times e_r R, \qquad M = e_1 M \times \ldots \times e_r M,$$

where each $e_i M$ is an $e_i R$-module.
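As a toy check of this decomposition, here is a minimal sketch assuming R = M = Z/6 with block idempotents 3 and 4 (an example chosen for illustration, not taken from the text).

```python
n = 6
idempotents = [3, 4]          # 3 + 4 = 1 and 3 * 4 = 0 in Z/6
M = range(n)                  # Z/6 regarded as a module over itself

# e_i M for each block idempotent
components = [sorted({(e * m) % n for m in M}) for e in idempotents]
print(components)  # [[0, 3], [0, 2, 4]]

# Every element of M is a sum x + y with x in 3M and y in 4M, in exactly one way
sums = sorted((x + y) % n for x in components[0] for y in components[1])
print(sums)  # [0, 1, 2, 3, 4, 5]
```

The six sums hit each element of Z/6 exactly once, which is the direct sum statement in this case.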

Block Idempotents of Semisimple R

Recall that a semisimple ring R is isomorphic to $\prod_{i=1}^m M_{n_i}(D_i)$ for some division rings $D_1, \ldots, D_m$, where $M_n(D)$ is the n × n matrix ring with entries in D. Since each matrix ring is a simple ring, we immediately obtain the central idempotents: $e_i$, for i=1,…,m, corresponds to the element whose component in $M_{n_i}(D_i)$ is the identity matrix, and whose component in $M_{n_j}(D_j)$ is the zero matrix for every j≠i.

As a module over itself, R is a direct sum of the spaces of column vectors, so $R \cong \bigoplus_V V^{n_V}$, where V runs through a complete collection of simple R-modules and $n_V$ is the size of the corresponding matrix ring, and the components $V^{n_V}$ give the maximal decomposition $R = \bigoplus_V V^{n_V}$ as a direct sum of ideals.

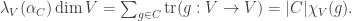

In particular, this holds for the group ring K[G]. We have:

$K[G] = \bigoplus_V V^{\dim_K V}$, where the direct sum is over all simple V.

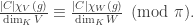

Lemma. The block idempotent for $V^{\dim_K V}$ is given by the following formula:

$$e_V = \frac{\dim V}{|G|} \sum_{g \in G} \chi_V(g^{-1})\, g,$$

where $\chi_V$ is the character of V.

Proof

Fix V; it suffices to show that $e_V$ induces the identity map on V and zero on all other components. Now the coefficients of $e_V$ are constant over each conjugacy class, so $e_V \in Z(K[G])$ and $e_V : W \to W$ is K[G]-linear for every K[G]-module W. If W is simple, $e_V$ induces a scalar map on it, say $\lambda$. To compute $\lambda$ we take the trace:

$$\lambda \dim W = \mathrm{tr}(e_V|_W) = \frac{\dim V}{|G|} \sum_{g \in G} \chi_V(g^{-1}) \chi_W(g) = \dim V \cdot \langle \chi_W, \chi_V \rangle.$$

But $\langle \chi_W, \chi_V \rangle$ is 1 if W ≅ V and 0 otherwise, and so the above sum is $\dim V$ if W ≅ V and 0 otherwise. Thus $\lambda = 1$ if W ≅ V and $\lambda = 0$ otherwise, as desired. ♦
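The formula can be checked by direct computation in a small group algebra. The following is a sketch under stated assumptions: it works in Q[S3] with exact fractions, represents permutations as tuples, and hard-codes the three irreducible characters of S3 by cycle type; all function names are hypothetical helpers, not part of any library.

```python
from fractions import Fraction
from itertools import permutations

def compose(p, q):
    """Permutation product p*q: apply q first, then p."""
    return tuple(p[q[i]] for i in range(len(q)))

def inverse(p):
    inv = [0] * len(p)
    for i, j in enumerate(p):
        inv[j] = i
    return tuple(inv)

def cycle_type(p):
    """Cycle lengths of p in decreasing order, e.g. (2, 1) for a transposition in S3."""
    seen, lengths = set(), []
    for start in range(len(p)):
        length, j = 0, start
        while j not in seen:
            seen.add(j)
            j = p[j]
            length += 1
        if length:
            lengths.append(length)
    return tuple(sorted(lengths, reverse=True))

def multiply(x, y):
    """Product in the group algebra; elements are dicts {permutation: coefficient}."""
    out = {}
    for g, a in x.items():
        for h, b in y.items():
            gh = compose(g, h)
            out[gh] = out.get(gh, 0) + a * b
    return {g: c for g, c in out.items() if c != 0}

def add(x, y):
    out = dict(x)
    for g, c in y.items():
        out[g] = out.get(g, 0) + c
    return {g: c for g, c in out.items() if c != 0}

def block_idempotent(group, chi):
    """e_V = (dim V / |G|) * sum over g of chi(g^-1) g, with chi indexed by cycle type."""
    identity = tuple(range(len(group[0])))
    dim = chi[cycle_type(identity)]
    e = {g: Fraction(dim * chi[cycle_type(inverse(g))], len(group)) for g in group}
    return {g: c for g, c in e.items() if c != 0}

S3 = list(permutations(range(3)))
characters = [
    {(1, 1, 1): 1, (2, 1): 1, (3,): 1},    # trivial character
    {(1, 1, 1): 1, (2, 1): -1, (3,): 1},   # sign character
    {(1, 1, 1): 2, (2, 1): 0, (3,): -1},   # 2-dimensional character
]
idempotents = [block_idempotent(S3, chi) for chi in characters]
```

With these in hand, `multiply(e, e) == e` for each idempotent, products of distinct ones are the empty dict (zero), and adding all three gives the identity element with coefficient 1.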

Block Idempotents of R[G]

Since k[G] is artinian, block idempotents are guaranteed to exist. Furthermore these can be lifted to block idempotents of R[G], which is a nice result since R[G] is not artinian.

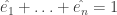

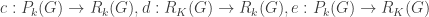

Lemma. Suppose $e_1, \ldots, e_r$ are orthogonal idempotents of Z(k[G]). Then we can find orthogonal central idempotents $\hat{e}_1, \ldots, \hat{e}_r$ of R[G] such that

$$\hat{e}_i \bmod \pi = e_i \quad \text{for } i = 1, \ldots, r.$$

Proof

The proof is conceptually similar to an earlier lemma. The main step is to show:

Claim: if S is a commutative ring with ideal I such that $I^2 = 0$, then any orthogonal idempotents $e_1, \ldots, e_r \in S/I$ summing to 1 can be lifted to orthogonal idempotents $\hat{e}_1, \ldots, \hat{e}_r \in S$ summing to 1.

[ If we can show this, then idempotents $e_i \in Z((R/\pi)[G])$ (after adjoining $1 - \sum_i e_i$ if they do not already sum to 1) can be lifted to $Z((R/\pi^2)[G])$, and in turn to $Z((R/\pi^4)[G])$ etc. Since R is complete, this gives idempotents in Z(R[G]). ]

Proof of Claim.

Pick any $f_1, \ldots, f_r \in S$ such that $f_i \bmod I = e_i$. Thus:

$$f_i^2 - f_i \in I, \qquad f_i f_j \in I \ (i \ne j), \qquad f_1 + \ldots + f_r - 1 \in I.$$

As in the earlier proof, let $\hat{e}_i = 3f_i^2 - 2f_i^3$ and this gives $\hat{e}_i^2 = \hat{e}_i$ and $\hat{e}_i \bmod I = e_i$. Also $\hat{e}_i \hat{e}_j = 0$ for all i≠j since it is divisible by $f_i^2 f_j^2 = (f_i f_j)^2 \in I^2 = 0$ (since S is commutative). Finally, we claim that $\hat{e}_1 + \ldots + \hat{e}_r = 1$. Indeed, from the factorisation $1 - 3x^2 + 2x^3 = (1-x)^2(1+2x)$ we obtain:

$$\prod_{i=1}^r (1 - f_i)^2 (1 + 2f_i) = \prod_{i=1}^r (1 - \hat{e}_i) = 1 - \sum_{i=1}^r \hat{e}_i,$$

where the first equality follows from $\hat{e}_i = 3f_i^2 - 2f_i^3$ and the second follows from $\hat{e}_i \hat{e}_j = 0$ for i≠j. Note that $\prod_i (1 - f_i) \bmod I = \prod_i (1 - e_i) = 1 - \sum_i e_i = 0$, so $\prod_i (1 - f_i)^2 \in I^2 = 0$. Thus $1 - \sum_i \hat{e}_i$ is zero. ♦
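The lifting step can be tried out numerically. The sketch below assumes the simplest instance of the claim, S = Z/n² with I = nZ/n² (so that I² = 0 and S/I = Z/n), and uses the polynomial 3x² − 2x³; the function name `lift_idempotents` is hypothetical.

```python
def lift_idempotents(residues, n):
    """Lift orthogonal idempotents of Z/n summing to 1 to Z/n^2 via x -> 3x^2 - 2x^3."""
    m = n * n
    return [(3 * f * f - 2 * f ** 3) % m for f in residues]

def is_orthogonal_decomposition(elements, m):
    """Check that the elements are orthogonal idempotents summing to 1 in Z/m."""
    idempotent = all((e * e) % m == e % m for e in elements)
    orthogonal = all((e * f) % m == 0
                     for i, e in enumerate(elements)
                     for j, f in enumerate(elements) if i != j)
    return idempotent and orthogonal and sum(elements) % m == 1

start = [3, 4]                          # orthogonal idempotents of Z/6 with 3 + 4 = 1
once = lift_idempotents(start, 6)       # lift to Z/36
twice = lift_idempotents(once, 36)      # lift again to Z/1296
print(once)  # [9, 28]
```

Repeating the lift squares the modulus each time, mirroring the passage from R/π to R/π², R/π⁴ and so on.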

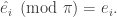

Conversely, if $\hat{e}_i \in R[G]$ are orthogonal central idempotents summing to 1, then so are their images $e_i \in k[G]$. Finally, we have:

Lemma. If $\hat{e}, \hat{e}' \in R[G]$ are central idempotents with the same image in k[G], then they are equal.

Proof.

Let $\hat f = \hat e - \hat e \hat e'$, which is a central idempotent in πR[G]. But $\hat f \in \pi^m R[G] \implies \hat f = \hat{f}^2 \in \pi^{2m} R[G]$, so we must have $\hat f \in \bigcap_m \pi^m R[G] = 0$ and so $\hat e = \hat e \hat e'$. By symmetry $\hat e' = \hat e \hat e'$, hence $\hat e = \hat e'$. ♦

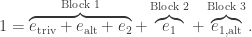

Thus, we have shown:

Summary.

There is a 1-1 correspondence between:

- orthogonal central idempotents of k[G] summing to 1, and

- orthogonal central idempotents of R[G] summing to 1.

In particular, block idempotents for k[G] lift to those for R[G]:

![1 = e_1 + e_2 + \ldots + e_r,\ (e_i \in k[G]) \ \mapsto 1 = \hat{e_1} + \hat{e_2} + \ldots + \hat{e_r},\ (\hat{e_i} \in R[G]).](https://s0.wp.com/latex.php?latex=1+%3D+e_1+%2B+e_2+%2B+%5Cldots+%2B+e_r%2C%5C+%28e_i+%5Cin+k%5BG%5D%29+%5C+%5Cmapsto+1+%3D+%5Chat%7Be_1%7D+%2B+%5Chat%7Be_2%7D+%2B+%5Cldots+%2B+%5Chat%7Be_r%7D%2C%5C+%28%5Chat%7Be_i%7D+%5Cin+R%5BG%5D%29.&bg=ffffff&fg=333333&s=0&c=20201002)

Taking each ![\hat{e_i} \in K[G]](https://s0.wp.com/latex.php?latex=%5Chat%7Be_i%7D+%5Cin+K%5BG%5D&bg=ffffff&fg=333333&s=0&c=20201002) , we can write it as a sum of the block idempotents of K[G]. Thus, we can partition the set of simple K[G]-modules as a disjoint union

, we can write it as a sum of the block idempotents of K[G]. Thus, we can partition the set of simple K[G]-modules as a disjoint union  , one for each

, one for each  , such that:

, such that:

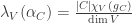

For convenience, we also denote  by

by  where χ is the character of V. This gives the formula

where χ is the character of V. This gives the formula

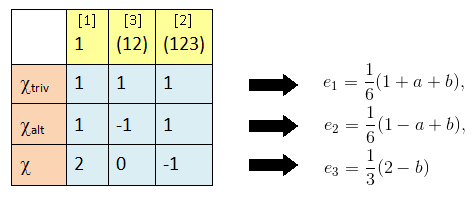

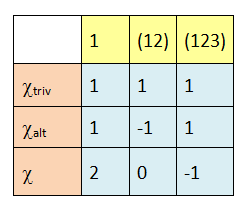

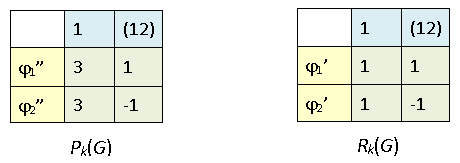

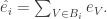

Example: S3.

Let’s compute the central idempotents for K[S3] using the above formula. Let a = (1,2) + (2,3) + (3,1) and b = (1,2,3) + (1,3,2). We recover the example at the end of the previous article:

$$e_1 = \tfrac{1}{6}(1 + a + b), \qquad e_2 = \tfrac{1}{6}(1 - a + b), \qquad e_3 = \tfrac{1}{3}(2 - b),$$

corresponding to the trivial, sign and 2-dimensional characters respectively.

Now let’s consider the case p=2. Neither $e_1$ nor $e_2$ has coefficients in R, but we have $e_1 + e_2 = \tfrac{1}{3}(1 + b)$ and $e_3 = \tfrac{1}{3}(2 - b)$ as block idempotents of R[G] (and also of k[G], after reduction mod 2), so the blocks are {e1, e2}, {e3}. For p=3, no proper subsum of $e_1 + e_2 + e_3$ has coefficients in R, so {e1, e2, e3} all belong to the same block.
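The block computation in this example amounts to asking which sums of the K[G] block idempotents have p-integral coefficients. The sketch below automates that test under simplifying assumptions that are not part of the text: all character values are rational integers (true for symmetric groups), every element is conjugate to its inverse, and "lying in R[G]" is read as "denominators prime to p". The function name `blocks_by_integrality` is hypothetical.

```python
from fractions import Fraction
from itertools import combinations

def blocks_by_integrality(table, order, p):
    """Partition character indices into p-blocks.

    table[i][c] is the value of the i-th character on the c-th class,
    with column 0 the identity class; order is |G|.
    """
    def integral(subset):
        # Coefficient of a class sum in the sum of e_chi over the subset
        for c in range(len(table[0])):
            coeff = sum(Fraction(table[i][0] * table[i][c], order) for i in subset)
            if coeff.denominator % p == 0:
                return False
        return True

    remaining = set(range(len(table)))
    blocks = []
    for size in range(1, len(table) + 1):
        for subset in combinations(sorted(remaining), size):
            # Smallest subsets are tried first, so each hit is a minimal one
            if set(subset) <= remaining and integral(subset):
                blocks.append(list(subset))
                remaining -= set(subset)
    return sorted(blocks)

# Characters of S3 on the classes (identity, transpositions, 3-cycles)
s3_table = [[1, 1, 1], [1, -1, 1], [2, 0, -1]]
print(blocks_by_integrality(s3_table, 6, 2))  # [[0, 1], [2]]
print(blocks_by_integrality(s3_table, 6, 3))  # [[0, 1, 2]]
```

The search is exponential in the number of characters, which is fine for examples of this size.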

If M is an indecomposable k[G]-module, then $M = e_1 M \oplus \ldots \oplus e_r M$ and thus there is exactly one i for which $e_i M = M$, and $e_j M = 0$ for all j≠i. As a result, the basis elements $[P] \in P_k(G)$ and $[M] \in R_k(G)$ can be classified into blocks, where P (resp. M) belongs to block $e_i$ if and only if $e_i P = P$ (resp. $e_i M = M$). Note that if P belongs to block $e_i$, then the idempotent $e_i$ acts as the identity on $P = e_i P$. The same holds for M.

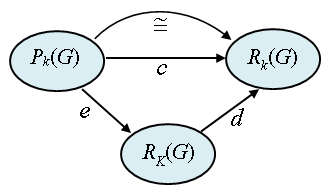

Similarly, for a simple K[G]-module V, there is a unique i for which $\hat{e}_i V = V$. In summary, we can think of a block as a collection of:

- indecomposable finitely-generated projective k[G]-modules P;

- simple k[G]-modules M;

- simple K[G]-modules V.

Lemma. Suppose basis elements $[P] \in P_k(G), [V] \in R_K(G)$ belong to distinct blocks $e_i$ and $e_j$, where i≠j. Then the matrix entry of $e : P_k(G) \to R_K(G)$ corresponding to [P], [V] is zero.

Proof

Indeed, we have $e_i P = P$ and $\hat{e}_j V = V$. If $[\hat P] \in P_R(G)$ is the lift of [P], we have $\hat{e}_i \hat P \ne 0$ (its reduction mod π is $e_i P = P$) and thus $\hat{e}_i \hat P = \hat P$ since $\hat P$ is an indecomposable R[G]-module. Hence $\hat{e}_i$ acts as the identity on $K \otimes_R \hat P$ and we must have $\hat{e}_i W = W$ for any irreducible component W of $K \otimes_R \hat P$. This shows that V cannot be a component of $K \otimes_R \hat P$, since $\hat{e}_i V = \hat{e}_i \hat{e}_j V = 0$. ♦

Corollary. Let $[P] \in P_k(G), [M] \in R_k(G), [V] \in R_K(G)$ be basis elements.

- If [M] and [V] belong to different blocks, the matrix entry of $d : R_K(G) \to R_k(G)$ corresponding to [V], [M] is zero.

- If [P] and [M] belong to different blocks, the matrix entry of $c : P_k(G) \to R_k(G)$ corresponding to [P], [M] is zero.

Proof

The first statement follows from the fact that the matrix for d is the transpose of that for e; the second statement follows from c = de. ♦

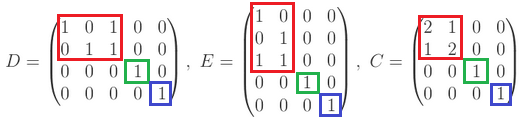

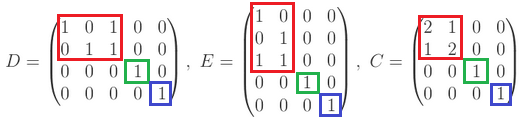

Thus, the matrices for c, d and e can be broken up as block matrices, one for each block idempotent of k[G].

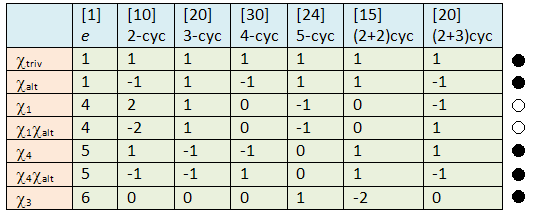

Example: S4.

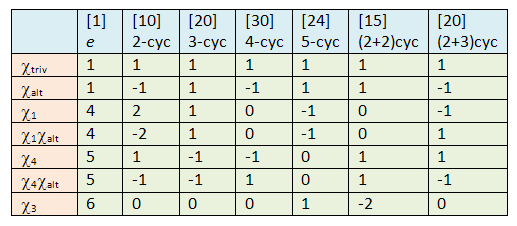

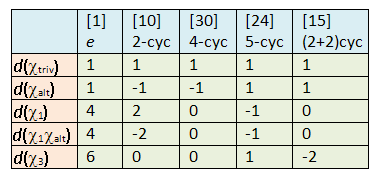

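The character table of S4 gives us: letting a = (sum of 2-cycles), b = (sum of 3-cycles), c = (sum of 4-cycles), d = (sum of (2+2)-cycles), the central idempotents of K[S4] are:

$$\begin{aligned} e_1 &= \tfrac{1}{24}(1 + a + b + c + d), \\ e_2 &= \tfrac{1}{24}(1 - a + b - c + d), \\ e_3 &= \tfrac{1}{12}(2 - b + 2d), \\ e_4 &= \tfrac{1}{8}(3 + a - c - d), \\ e_5 &= \tfrac{1}{8}(3 - a + c - d), \end{aligned}$$

for the trivial character, the sign character, the character of degree 2, the standard character of degree 3 and its product with the sign character, respectively.

For p = 2, all five simple characters belong to the same block. For p = 3, we have:

$$e_1 + e_2 + e_3 = \tfrac{1}{4}(1 + d), \qquad e_4, \qquad e_5$$

as block idempotents of R[G], so the blocks are {e1, e2, e3}, {e4}, {e5}.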

Finally, we can check when two characters lie within the same block. First, we need the following lemma:

Lemma. Let R be a commutative k-algebra of finite dimension over k and $e_1, \ldots, e_r$ be its block idempotents. Then any k-algebra homomorphism $f : R \to k$ is uniquely determined by the images of the block idempotents $f(e_1), \ldots, f(e_r)$.

Proof

Corresponding to the block idempotents, write $R = R_1 \times \ldots \times R_r$ as a product of commutative k-algebras, where $R_i = e_i R$. Since $R_i$ is artinian, $R_i / J(R_i)$ is semisimple and hence a product of matrix algebras. Since $R_i$ has no idempotent except 0 and 1 (and idempotents lift modulo the nilpotent ideal $J(R_i)$), $R_i / J(R_i)$ itself is a matrix algebra, i.e. $R_i / J(R_i) \cong M_n(D)$ for some division ring D/k. But R is commutative, so n=1 and D is a field extension k’ of k.

On the other hand, let $f_i = f|_{R_i} : R_i \to k$. Since $f(e_1) + \ldots + f(e_r) = f(1) = 1$ and the $f(e_i)$ are orthogonal idempotents of k, exactly one $f_i$ is a k-algebra homomorphism while the remaining $f_j$ are zero maps for j≠i. Now each $f_i$ must vanish on the nilpotent ideal $J(R_i)$, so it factors through $R_i / J(R_i)$ and is determined by the induced map $R_i / J(R_i) = k' \to k$, which is either the zero map or the identity (forcing k’ = k), depending on whether $f(e_i)$ is 0 or 1. ♦

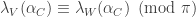

Theorem. Let V, W be simple K[G]-modules; the following are equivalent.

- V and W are in the same block.

- For any conjugacy class C⊆G and g∈C, we have:

$$\frac{|C|\,\chi_V(g)}{\dim V} \equiv \frac{|C|\,\chi_W(g)}{\dim W} \pmod{\pi}.$$

Note

In the course of the proof, we will see that $\frac{|C|\,\chi_V(g)}{\dim V} \in R$ for any simple V, so the congruence is well-defined.

Proof

Step 1. For each simple V, we will define a ring homomorphism $\lambda_V : Z(K[G]) \to K.$

Given any conjugacy class C⊆G, define $\sigma_C = \sum_{g \in C} g$ as a K-linear map V→V. Since $\sigma_C$ commutes with all g∈G, it is a K[G]-linear map V→V. But V is simple, hence such a map is a scalar. The class sums $\sigma_C$ form a K-basis of Z(K[G]), so every central element acts on V as a scalar; thus we get a ring homomorphism $\lambda_V : Z(K[G]) \to K.$

Step 2. Show that $\lambda_V(\sigma_C)$ is the LHS of the congruence and that this lies in R.

Taking the trace of $\sigma_C : V \to V$, we get:

$$\lambda_V(\sigma_C) \cdot \dim V = \sum_{g \in C} \chi_V(g) = |C|\, \chi_V(g).$$

So $\lambda_V(\sigma_C) = \frac{|C|\,\chi_V(g)}{\dim V}$ for any representative $g \in C.$ To prove that this lies in R, recall that we can pick an R-lattice M⊂V which is an R[G]-module. Thus $\sigma_C M \subseteq M.$ Picking an R-basis for M, the map $\sigma_C$ is represented by a matrix with entries in R; since this matrix is the scalar matrix $\lambda_V(\sigma_C) \cdot I$, we have $\lambda_V(\sigma_C) \in R.$

Step 3. Compute $\lambda_V(e_W)$, where $e_W$ is the K[G] block idempotent for W.

Let us rewrite

$$e_W = \frac{\dim W}{|G|} \sum_{C} \chi_W(g_C^{-1})\, \sigma_C,$$

where the sum is over all conjugacy classes C and $g_C \in C$ is any representative, and so

$$\lambda_V(e_W) = \frac{\dim W}{|G|} \sum_C \chi_W(g_C^{-1}) \cdot \frac{|C|\,\chi_V(g_C)}{\dim V} = \frac{\dim W}{\dim V} \langle \chi_V, \chi_W \rangle = \begin{cases} 1, & \text{if } V \cong W, \\ 0, & \text{otherwise.} \end{cases}$$

Step 4. Complete the proof.

Since each $\hat{e}_i$ is the sum of the $e_W$ over the simple modules W in its block, step 3 tells us V and W belong to the same block $\hat{e}_i$ if and only if $\lambda_V(\hat{e}_i) = \lambda_W(\hat{e}_i)$ for all i. But this value is either 0 or 1, so it holds if and only if

$$\lambda_V(\hat{e}_i) \equiv \lambda_W(\hat{e}_i) \pmod{\pi}$$

for all i.

On the other hand, $\lambda_V(Z(R[G])) \subseteq R$ by step 2, so we also obtain a ring homomorphism $\lambda_V : Z(R[G]) \to R.$ Reduction mod π then gives us a k-algebra homomorphism $\lambda_V' : Z(k[G]) \to k.$ By the above, V and W belong to the same block if and only if $\lambda_V'(e_i) = \lambda_W'(e_i)$ for all i. Since Z(k[G]) is a commutative k-algebra of finite dimension over k, the above lemma says this holds if and only if $\lambda_V' = \lambda_W' : Z(k[G]) \to k$, which is equivalent to $\lambda_V(\sigma_C) \equiv \lambda_W(\sigma_C) \pmod{\pi}$ for all C. ♦
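The criterion of the theorem is easy to test on a character table. The sketch below makes assumptions beyond the text: the character values are rational integers, so the quantities |C|χ(g)/χ(1) are ordinary integers and congruence mod π can be checked as congruence mod p. The table is that of S4 with classes ordered as (identity, 2-cycles, (2+2)-cycles, 3-cycles, 4-cycles), and the function name is hypothetical.

```python
from fractions import Fraction

def blocks_by_central_characters(table, class_sizes, p):
    """Group character indices whose values |C| * chi(g) / chi(1) agree modulo p."""
    groups = {}
    for i, chi in enumerate(table):
        omega = [Fraction(size * value, chi[0]) for size, value in zip(class_sizes, chi)]
        # For rational-valued characters these numbers are integers
        assert all(w.denominator == 1 for w in omega)
        key = tuple(int(w) % p for w in omega)
        groups.setdefault(key, []).append(i)
    return sorted(groups.values())

s4_sizes = [1, 6, 3, 8, 6]
s4_table = [
    [1, 1, 1, 1, 1],     # trivial
    [1, -1, 1, 1, -1],   # sign
    [2, 0, 2, -1, 0],    # degree 2
    [3, 1, -1, 0, -1],   # standard
    [3, -1, -1, 0, 1],   # standard tensor sign
]
print(blocks_by_central_characters(s4_table, s4_sizes, 2))  # [[0, 1, 2, 3, 4]]
print(blocks_by_central_characters(s4_table, s4_sizes, 3))  # [[0, 1, 2], [3], [4]]
```

This agrees with the S4 example: a single block for p = 2, and three blocks for p = 3.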

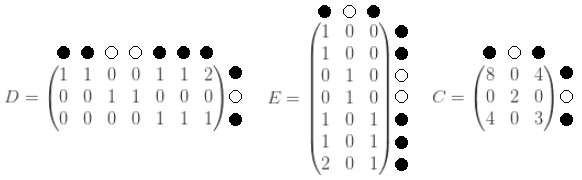

Example: S5.

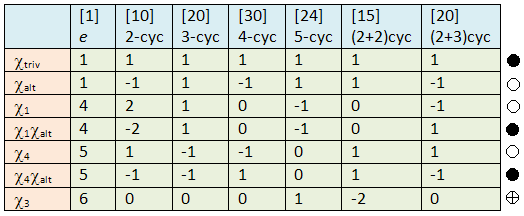

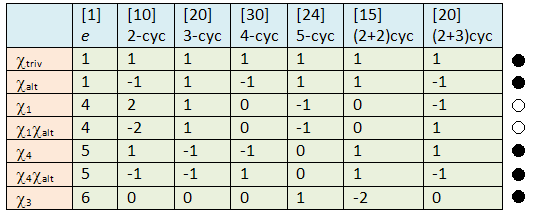

Modulo 2, the blocks of the character table are labeled by the circles on the right:

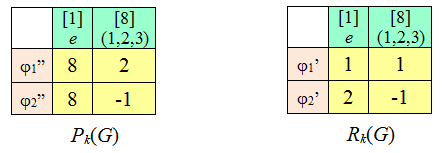

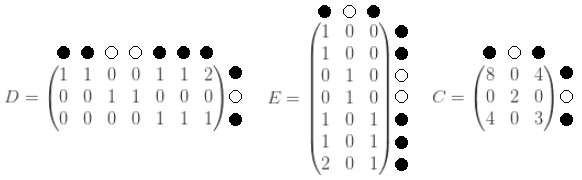

The corresponding matrices are:

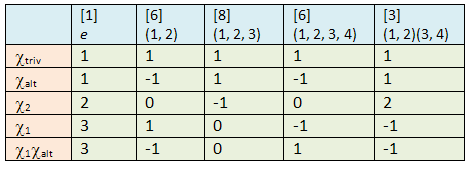

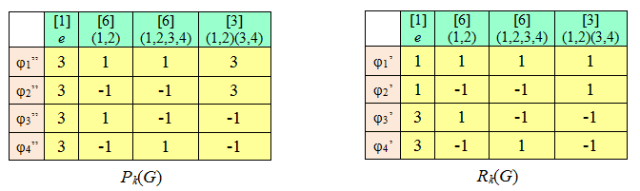

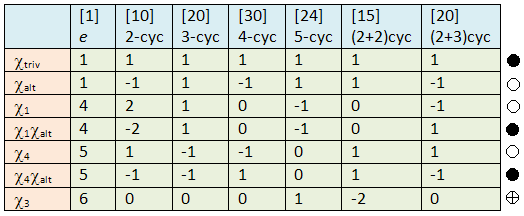

Modulo 3, the table becomes:

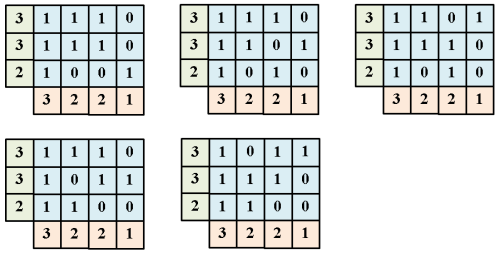

The corresponding matrices are:
