Lesson 7

[ Warning: another long post ahead. One of the proofs will also require mathematical induction. ]

In this lesson, we will see how some games can be represented by numbers (which can be integers or fractions). We will also introduce the following game.

Hackenbush

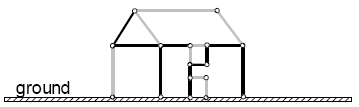

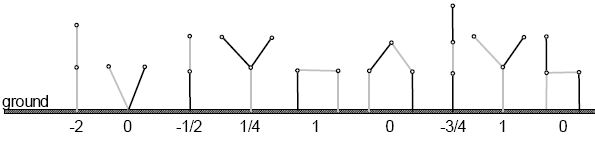

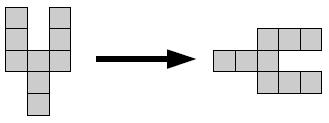

Draw a diagram with Light and daRk edges, which is attached to the ground. E.g.

The two players alternately remove an edge from the diagram. After a move, any component which is disconnected from the ground is eliminated. Furthermore, player Left can only remove Light edges while Right can only remove daRk edges. A player wins if he gets to clear the diagram. Hackenbush can also be played with bLue and Red edges.

Integers

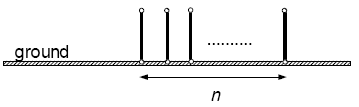

First, consider the following configuration.

It is clear that Left is the winning party. In fact, Left has n free moves available to him while Right doesn’t have any. Hence, it makes sense to label the above game by n. Indeed, we will define the following games as positive integers:

Definition. The game 1 is defined to be {0 | }. And we recursively define the games 2, 3, … as follows:

2 = 1 + 1, 3 = 2 + 1, 4 = 3 + 1, ….

On the other hand, consider the negation of 1, i.e. -1. This is obtained by flipping the colours (Light to daRk and vice versa), so we have the following:

Definition. The game -1 is defined to be { | 0}. And we recursively define the games -2, -3, … as follows:

-2 = (-1) + (-1), -3 = (-2) + (-1), -4 = (-3) + (-1), ….

So the following configuration is equal to –n.

Since -1 is defined to be the negative of 1, we know that 1 + (-1) = 0 by the results in the previous lesson. In other words, when we add and subtract the above games, we are just performing the usual addition and subtraction on integers.

What are the options available for a game which is a positive integer?

From the Hackenbush games above, it is clear that if n is a positive integer, then Left has a single possible move n → n-1 while Right has no available move. Hence, we could also have defined inductively:

1 = {0 | }, 2 = {1 | }, 3 = {2 | }, … , n = {n-1 | }.

Games With Value 1/2

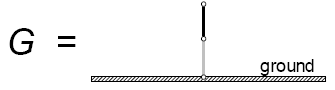

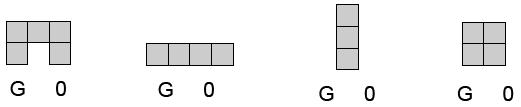

This is our first example of a fraction occurring in a game. Consider the following game G:

Looking at the options of G, we get G = {0 | 1}. It is very tempting to write this as G = 1/2. To check that this is consistent with addition, we need to verify G + G = 1, or equivalently, G + G + (-1) is a second-player win. This is an easy exercise which we’ll leave to the reader.

Definition. The game {0 | 1} is denoted by 1/2.

What about -1/2? By definition, it is the negation of 1/2, so it is {-1 | -0} = {-1 | 0}.

Warning : it is not true that 1/2 is the only game G for which G + G = 1 (see exercise 2).

Clearly, 1/2 is a win for Left, so 1/2 > 0. On the other hand, 1/2 + 1/2 = 1, so adding -1/2 on both sides gives us 0 < 1/2 = 1 + (-1/2). Adding 1/2 on both sides again, we get 1/2 < 1. We have, thus, proven:

0 < 1/2 < 1.

Before proceeding to define other fractions, we shall introduce the first method of simplifying games.

Simplification (I)

Consider a general game expressed in this form:

.

We claim that if B ≥ A then we can erase A from our list of Left options to obtain . On a heuristic level, this seems obvious since A is a “better” move for Left compared to B. But let’s prove it carefully.

Proof. We write:

and

.

We need to show that G = H, i.e. G + (-H) is a second-player win. Note that by definition of negation:

.

Now, the second player has an easy strategy as follows: whatever the first player does, he does the reverse in the other component. The only problem occurs if Left starts by taking G → A. Although Right has no corresponding move –H → –A, he can do even better: take –H → -B. The resulting game is now:

Recall from last lesson’s exercise that in such a game, if Left starts, then Right wins. But now it’s precisely Left‘s turn, so Right gets to win. Conclusion: G – H is a second-player win, which completes the proof. ♦

Note that the above simplification rule has a twin brother: If X and Y are Right‘s options in the game G, and X ≥ Y, then we can erase X from the list of Right options and the game is still equal to G.

More Fractions

The next step is to look at the game:

Writing the options of G, we get G = {0 | 1/2, 1}. By the simplification rule we just covered, we can remove 1, so G = {0 | 1/2}. A bit of work should convince you that G + G + (-1/2) = 0 (i.e. is a second-player win). So let’s define 1/4 = {0 | 1/2}.

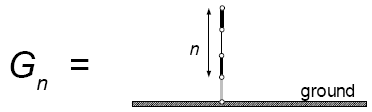

More generally, let’s consider the following game:

We would like to label . To do that, we need to show that

for n = 1, 2, 3… . The proof will be carried out by induction.

Proof. Suppose we already know that for n = 1, 2, … t-1. Then for n = t, we have:

It is easy to see that (it’s not that hard, so we’re leaving it as an exercise) so the game simplfies to

. Hence it remains to show that:

Note that . To prove that the above game is a second-player win:

- Suppose Left starts.

- If Left takes

, then Right can take

, thus leaving the sum 0 and win.

- If Left takes

, then Right takes

. By induction hypothesis,

so the game is now

. Right wins.

- If Left takes

- Suppose Right starts.

- If Right takes

, Left takes

, thus leaving 0 and win.

- If Right takes

, then Left takes

also. This gives

which is clearly positive. So Left wins.

- If Right takes

This completes the proof. ♦

In conclusion, we may label . Formally, we get the following recursive definition:

1/2 ={0 | 1}, 1/4 = {0 | 1/2}, 1/8 = {0 | 1/4}, 1/16 = {0 | 1/8}, …

More Fractions

Now that we’ve successfully defined fractions of the form 1/2, 1/4, 1/8, 1/16, … , we can now define any rational number of the form via addition and subtraction. We call such rational numbers dyadic rational numbers.

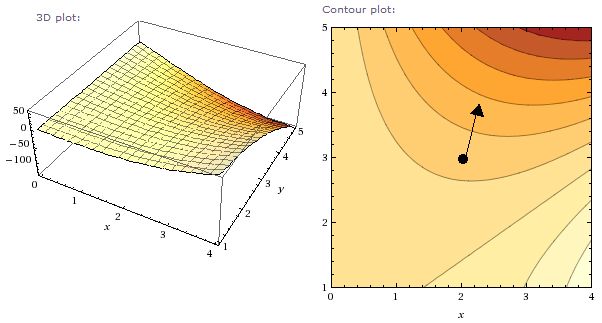

Now, one is inclined to naïvely think that we can just take averages: . Unfortunately, this is not true in general as the following examples illustrate.

Examples

- Consider the game G = {-5 | 2}. If Left starts, he leaves a negative number and Right wins. If Right starts, he leaves a positive number and Left wins. So the 2nd player wins! This means G = 0.

- Consider G = {1/4 | 1}. We claim G = 1/2. Indeed, we just need to show G – 1/2 = G + {-1|0} is a 2nd-player win. This is easy (try it!).

Despite this minor setback, we can take averages in some cases.

If p is an integer and m is a non-negative integer, then:

Proof. First consider the case 2p+1 > 0. Write the RHS (right-hand-side) as a sum of (2p+1) terms, each equal to . Thus, in this game sum, if Left starts, he has no choice but to play

. Likewise, if Right starts, his only move is

. By considering these options, we get the game

which is precisely the LHS. The case where 2p+1 < 0 is similar and is left to the reader.♦

Simplicity Rule

As mentioned earlier, we cannot take averages in a game to simplify it to a number. Due to this subtlety, we need the notion of simplicity:

We introduce an ordering among the set of dyadic rational numbers: the notion of simplicity. This ordering is “partial” in the sense that for some dyadic rational r, s, we are unable to say whether r<s or s>r.

- If r has a smaller denominator than s (in reduced fractions), then r is simpler than s.

- Among integers, if |a| < |b|, then a is simpler than b.

- 0 is the simpler than any r.

Examples

- -2 is simpler than 3 by criterion 2.

- 5/2 is simpler than 1/4 by criterion 1.

- 7/8 and 1/8 cannot be compared; neither is simpler than the other.

- 4/8 = 1/2 is simpler than 3/8 by criterion 1.

Now we have the following theorem.

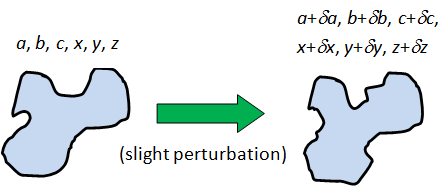

Theorem (Simplicity Rule). Suppose G is a game

G = {a, b, c, … | x, y, z, … }

whose options a, b, c, …, x, y, z, … are all numbers. If there is an r such that a<r, b<r, c<r, … and r<x, r<y, r<z, … then G is also a number, and is equal to the simplest dyadic rational r satisfying these inequalities.

As you can tell, this is a highly non-trivial result. The proof will come in three steps:

- There exists a simplest dyadic rational r satisfying the inequalities, in the sense that: you cannot find a number s which is simpler than r such that the inequalities hold.

- The r found in step 1 satisfies G = r.

- Hence such an r must be unique. [ Note that step 1 does not imply that r is unique, since the ordering is only partial. ]

Examples

- {-2 | 5} = 0. [ Alternatively : the second player wins so it’s a zero game. ]

- {1/2 | 17/4} = 1.

- {1/4 | 13/16} = 1/2.

- {-1/4 | -1/16} = -1/8.

- {17/2 | } = 9.

- {-17/2 | } = 0.

Proof of Simplicity Rule

Before start the proof let’s use the following observation.

Let G be a game equal to some dyadic rational number r. Then we can write G as one of the following forms:

- {m | n}, where m < n are numbers simpler than r, and m < r < n;

- {m | }, where m is a number simpler than r and m < r;

- { | n}, where n is a number simpler than r and r < n;

- { | }.

Why is this true? Well, 0 goes to case (d). If n is a positive integer, then we already know n = {n-1 | } and –n = { | -(n-1)} which are in cases (b) and (c). Finally, any dyadic non-integer can be written as for some integer p and positive integer r. But we saw earlier that

.

Now let’s follow the above 3 steps. Recall that:

,

where the options a, b, c, …, x, y, z, … are all numbers.

Step 1. This is easy: consider the set of all dyadic rational numbers r satisfying the inequalities a<r, b<r, c<r, … and r<x, r<y, r<z, … . Since the denominator of each r is finite, there must be an element with a smallest denominator (though possibly non-unique). If the smallest denominator is 1 (i.e. r is an integer), break ties by picking the one with the smallest absolute value.

Step 2. We need to show that G + (-r) is a 2nd player win. Suppose Left starts. Since any Left option is less than r, moving in the G-component would result in a negative value (Right wins – bad). On the other hand, suppose he moves in the second component (-r). By the above observation, we may assume the move is (-r) → (-n), where n is simpler than r and r<n. By our choice of r, it follows that either a≥n for some Left option a of G, or n≥x for some Right option x of G.

But n > r > a so the first case is out. We thus have n≥x and Right may win in the resulting game G+(-n) by picking the first component, thus leaving x-n ≤ 0. [ Recall that if a game G satisfies G≤0, then Right wins if Left gets to start first. ] In conclusion, if Left starts in G-r, Right wins.

One can show similarly that if Right starts, Left wins.

Step 3. This follows from the fact that no two distinct numbers are equal, even as games.

In a Nutshell

Here’s a recap of what we’ve learnt.

- Impartial games: 0, *1, *2, *3, … .

- Numbers: e.g. 1/2, 3/8, 7/16, -5/32, … .

- Simplifying a game by removing redundant options.

- How to simplify games to numbers using the simplicity rule.

Here’re some further notational simplifications: *1 is usually denoted as just *, while the game G+* is also denoted G*.

Exercises

- Jen considers the game G = {1/8 | 1} as well as G – 1/4. She reasons that if Left starts, he could move G → 1/8, leaving 1/8 – 1/4 < 0; and if Right starts, he could move G → 1, leaving 1 – 1/4 > 0. Thus, the 2nd player wins and so G= 1/4. However, this is wrong.

- Use the Simplicity Rule to find the actual value of G.

- Where did Jen’s reasoning go wrong?

- Prove that there are infinitely many games G, no two of which are equal, satisfying G + G = 1. Thus, 1/2 is not the only G satisfying this equality.

- Prove that for any positive integers n, r, we have

.

- Use the simplicity rule to simplify the following games to numbers.

- {-1/2 | 3/8 };

- {-5/2 | -1/2 };

- { 1/2 | 13/16 };

- {5/2 | }.

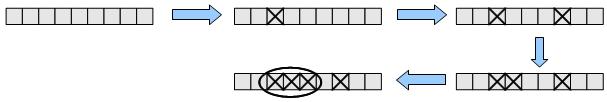

- Analyse the following Hackenbush positions and verify their values.

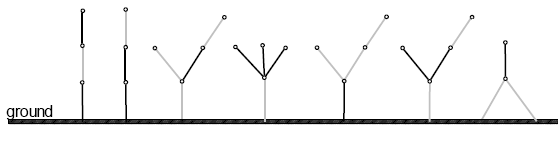

- Analyse the following Hackenbush positions and find their values.

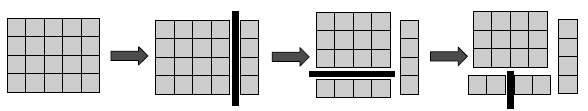

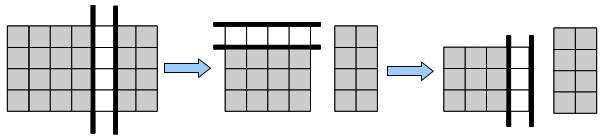

- Consider the game of Cutcakes. Given a cake, divided into m × n unit squares, each player at his turn takes a piece of cake and slices it along the edges of the squares, thus dividing the cake into two pieces. In addition, Left can only cut vertically, while Right can only cut horizontally. A typical game may proceed as follows if Left starts. Find the values for m × n Cutcakes, for 1 ≤ m, n ≤ 6.

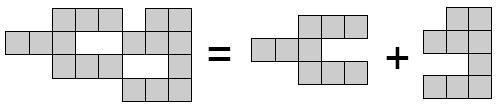

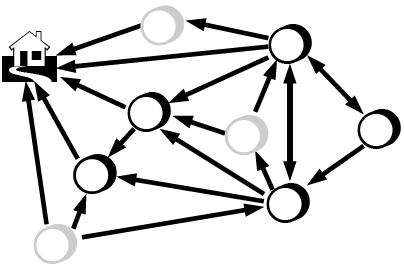

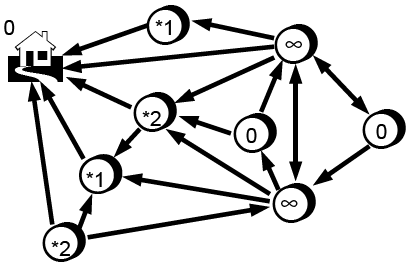

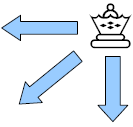

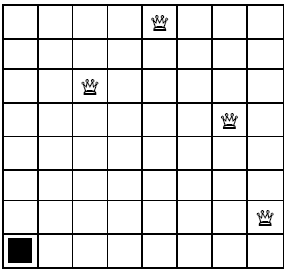

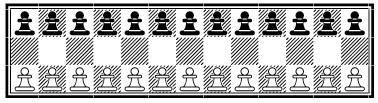

- Each of the following Domineering positions is either a number or a game of the form {m | n}, where m ≥ n are numbers. Compute them.