Previously, we defined continuous limits and proved some basic properties. Here, we’ll try to port over more results from the case of limits of sequences.

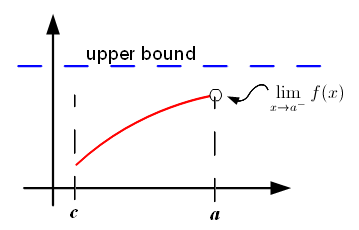

Monotone Convergence Theorem. If f(x) is increasing on the open interval (c, a) and has an upper bound, then the left limit

$\lim_{x\to a^-} f(x)$

exists.

[ Recall that by “increasing”, we mean: if x ≤ y, then f(x) ≤ f(y). In particular, even a constant function is considered “increasing” under our definition. ]

Proof.

As before, let L = sup{f(x) : c < x < a}, which is finite since f(x) has an upper bound. For each ε>0, since L-ε is not an upper bound, there exists δ>0 with c < a-δ such that L-ε < f(a-δ) ≤ L. Hence, for any a-δ < x < a, we have f(x) ≥ f(a-δ) > L-ε and f(x) ≤ L, so |f(x) - L| < ε. ♦
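To make the choice of δ in the proof concrete, here is a minimal Python sketch. It assumes the sample function f(x) = x² on (0, 1), whose supremum is L = 1. The helper name `find_delta` and the halving strategy are illustrative choices, not part of the proof.

```python
def find_delta(f, c, a, L, eps):
    """Halve delta until f(a - delta) > L - eps.

    Assumes f is increasing on (c, a) with supremum L, so the loop terminates.
    """
    delta = (a - c) / 2
    while f(a - delta) <= L - eps:
        delta /= 2
    return delta


def f(x):
    # Sample increasing function on (0, 1); an assumption for illustration.
    return x * x


c, a, L = 0.0, 1.0, 1.0
checks = []
for eps in (0.5, 0.1, 0.001):
    delta = find_delta(f, c, a, L, eps)
    # Sample points strictly inside (a - delta, a).
    xs = [a - delta * k / 100 for k in range(1, 100)]
    checks.append(all(L - eps < f(x) <= L for x in xs))

print(checks)
```

Each entry of `checks` records whether every sampled x in (a-δ, a) satisfies L-ε < f(x) ≤ L, which is exactly the inequality the proof establishes.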

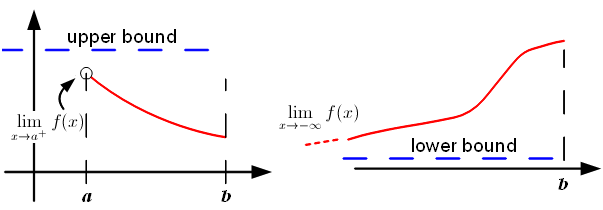

Note that this theorem has many variations. For example, f(x) can be either monotonically increasing or decreasing, and the limit can be either a left or a right limit. Here are two possibilities:

- If f(x) is decreasing on (a, b) and has an upper bound, then $\lim_{x\to a^+} f(x)$ exists.

- If f(x) is increasing on (-∞, b) and has a lower bound, then $\lim_{x\to -\infty} f(x)$ exists.

Example

Define Now f is an increasing function and is bounded on (-1, 1) so the left and right limits

$\lim_{x\to 0^-} f(x)$, $\lim_{x\to 0^+} f(x)$

both exist. However, the left limit is -1 while the right limit is 0. Since the two limits are not equal,

$\lim_{x\to 0} f(x)$

does not exist even though f is monotone and bounded.
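One function that fits this description is the floor function restricted to (-1, 1). This is an assumption made for illustration and may not be the function the example originally used. The sketch below samples it on either side of 0 to show the two one-sided limits disagreeing.

```python
import math


def f(x):
    # Hypothetical choice: floor(x) is increasing and bounded on (-1, 1).
    return math.floor(x)


# Approach 0 from the left and from the right along x = -10^-k and x = 10^-k.
left_values = [f(-(10.0 ** -k)) for k in range(1, 8)]
right_values = [f(10.0 ** -k) for k in range(1, 8)]

print(left_values)
print(right_values)
```

The left samples stay at -1 and the right samples stay at 0, matching the one-sided limits quoted in the example.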

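Squeeze Theorem. Suppose

$g(x) \le f(x) \le h(x)$

on the open interval (c, a). If

$\lim_{x\to a^-} g(x) = \lim_{x\to a^-} h(x) = L,$

then

$\lim_{x\to a^-} f(x) = L$

as well. The analogous statement holds for the limits

$\lim_{x\to a^+}$

and

$\lim_{x\to a}$

too.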

Proof.

We’ll only prove the case for left limits. Let ε>0. Then there exist positive δ1 and δ2 such that:

- whenever a-δ1 < x < a, we have |g(x) – L| < ε;

- whenever a-δ2 < x < a, we have |h(x) – L| < ε.

Hence if δ = min(δ1, δ2), then whenever a-δ < x < a, we have f(x) ≤ h(x) < L+ε and f(x) ≥ g(x) > L-ε. Thus |f(x) – L| < ε. ♦

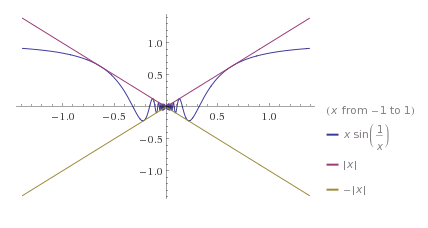

Example

Consider the function , for x≠0. Then:

, for all x≠0.

Since , it follows from the Squeeze Theorem that

.
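As one concrete instance of this squeeze pattern, take f(x) = x·sin(1/x) with bounds -|x| and |x|. This choice is an assumption for illustration and is not necessarily the function intended above. A short numerical check:

```python
import math


def f(x):
    # Hypothetical example function, defined for x != 0.
    return x * math.sin(1.0 / x)


# Sample points approaching 0 from both sides.
xs = [s * 10.0 ** -k for k in range(1, 10) for s in (1, -1)]

# The squeeze: -|x| <= f(x) <= |x| at every sample.
squeezed = all(-abs(x) <= f(x) <= abs(x) for x in xs)

# |f(x)| is forced below |x|, so it shrinks as x approaches 0.
smallest = abs(f(xs[-1]))

print(squeezed, smallest)
```

Since both bounds tend to 0 as x → 0, the Squeeze Theorem gives limit 0 for this f.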

(Graphs plotted by wolframalpha.com)

Continuous Functions: Basic Concepts

We wish to define what it means for a function to be continuous at x=a. It’s natural to define it in terms of limits.

Definition. Suppose f(x) is defined on an open interval (b, c) containing a. We say that f is continuous at a, if

$\lim_{x\to a} f(x) = f(a)$.

Equivalently, we say that f is continuous at a, if for every ε>0, there exists δ>0, such that whenever |x-a| < δ, we have |f(x)-f(a)| < ε.

Example

For example, let’s consider .

Since , we see that f is continuous at x=0. ♦

The second definition is really neat, because now even if f(x) is not defined on an open interval about a, we can talk about continuity at a itself. To be explicit, suppose D is a subset of the reals R, and f : D → R is a function. If a lies in D, we say that f(x) is continuous at x=a if:

- for every ε>0, there exists δ>0, such that whenever |x–a|<δ and x lies in D, we have |f(x)-f(a)|<ε.

Example

Consider the function , which is defined on

.

Strange as it may sound, f(x) is continuous at x=0 according to our definition. Indeed, let’s check the definition. When someone throws an ε>0 at us, we have to produce a δ>0, such that if |x-0| = |x| < δ and x lies in D, then |f(x)-f(0)| = |f(x)-0.5| < ε. What can this δ be? It’s clear that δ=0.5 works for any ε(!), for the condition “|x| < 0.5 and x lies in D” is satisfied by one and only one point: namely, x=0, in which case |f(x)-f(0)|=0.

It’s also clear from the proof that we’re at complete liberty to shift f(0) to any value we wish and the function is still continuous at 0. This occurs due to an anomaly in the domain of definition D. As the point x=0 is too far from its neighbours, f(0) can take whatever value it wants without breaking continuity.
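The argument can be mimicked numerically. The sketch below assumes a hypothetical domain D consisting of the isolated point 0 together with all x with |x| > 1. It only needs 0 to be the sole point of D within distance 0.5 of 0, as in the text. The function values away from 0 are arbitrary placeholders.

```python
def in_D(x):
    # Hypothetical domain D: the isolated point 0 together with all |x| > 1.
    return x == 0 or abs(x) > 1


def points_of_D_near_zero(delta, samples=2001):
    # Sample the open window (-delta, delta) and keep the points lying in D.
    grid = [-delta + 2 * delta * k / (samples - 1) for k in range(1, samples - 1)]
    return [x for x in grid if in_D(x)]


def passes_epsilon_test(f0, eps, delta=0.5):
    # f0 plays the role of f(0); values away from 0 are arbitrary placeholders.
    def f(x):
        return f0 if x == 0 else x

    return all(abs(f(x) - f0) < eps for x in points_of_D_near_zero(delta))


near = points_of_D_near_zero(0.5)
results = [passes_epsilon_test(f0, 1e-9) for f0 in (0.5, 100.0, -7.0)]

print(near, results)
```

Only x = 0 survives the filter, so the ε-test passes with δ = 0.5 for every choice of f(0) tried here.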

So when is f(a) uniquely determined by continuity?

Definition. Let D be a subset of R. A real number a is called a point of accumulation (or cluster point, or limit point) of D if for every ε>0, there exists

$x \in D - \{a\}$

such that |x-a|<ε.

In short, a is a point of accumulation if there are points of D, other than a itself, which are arbitrarily close to it. E.g. in the above example, the point 0 is not a point of accumulation of D. On the other hand, -1 and +1 are points of accumulation even though they don’t lie in D. As a final example, for the set {1, 1/2, 1/3, … }, 0 is a point of accumulation.
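For the last example, the definition asks for a point of the set within ε of 0 for every ε>0. A minimal sketch of such a witness follows; the function name `witness` is hypothetical.

```python
import math


def witness(eps):
    # Return a point of S = {1, 1/2, 1/3, ...} within eps of 0.
    # Using floor(1/eps) + 2 leaves a margin against floating-point rounding.
    n = math.floor(1 / eps) + 2
    return 1 / n


found = [witness(eps) for eps in (0.5, 0.1, 0.003)]
ok = all(0 < x < eps for x, eps in zip(found, (0.5, 0.1, 0.003)))

print(found, ok)
```

Every witness is nonzero, so it lies in S - {0} as the definition requires.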

The result we wish to prove is the following.

Theorem. Let D be a subset of R and let a in D be a point of accumulation of D. If two functions

$f, g : D \to \mathbf{R}$

agree on D-{a} and are continuous at a, then f(a) = g(a).

Proof.

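If not, let ε = |f(a)-g(a)|/2 > 0. Then there exist positive δ1 and δ2 such that:

- whenever |x-a| < δ1 and x lies in D, we have |f(x)-f(a)| < ε;

- whenever |x-a| < δ2 and x lies in D, we have |g(x)-g(a)| < ε.

Since a is a point of accumulation, we can pick an x in D such that 0 < |x-a| < min(δ1, δ2). This x satisfies f(x)=g(x) by assumption, thus giving:

$2\varepsilon = |f(a)-g(a)| \le |f(a)-f(x)| + |g(x)-g(a)| < 2\varepsilon,$

which is absurd. ♦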

Further Properties

As the reader may suspect, we also have the following properties: if $f, g : D \to \mathbf{R}$ are both continuous at a, then

- f+g : D → R is continuous at a;

- fg : D → R is continuous at a;

- if f(a)≠0, then 1/f : D’ → R is continuous at a, where D’ is the set of x in D for which f(x)≠0.

The proofs are extremely similar to the case of limits, so we’ll skip them for now. The conscientious reader may want to prove the above results to convince themselves, but we’ll prove them as a corollary in the next article by looking at higher-dimensional continuity.

As a consequence, since constant functions f(x)=c and the identity function f(x)=x are both continuous at every point in R, so is any polynomial function.
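For a single polynomial, the ε-δ definition can also be checked directly. The sketch below uses f(x) = x² with the choice δ = min(1, ε/(2|a|+1)). This works because |x²-a²| = |x-a||x+a| and |x+a| < 2|a|+1 whenever |x-a| < 1. The helper name `delta_for_square` is hypothetical.

```python
def delta_for_square(a, eps):
    # If |x - a| < delta <= 1 then |x + a| < 2|a| + 1, so
    # |x^2 - a^2| = |x - a| * |x + a| < delta * (2|a| + 1) <= eps.
    return min(1.0, eps / (2 * abs(a) + 1))


def check(a, eps):
    delta = delta_for_square(a, eps)
    # Sample points strictly inside (a - delta, a + delta).
    xs = [a + delta * k / 100 for k in range(-99, 100)]
    return all(abs(x * x - a * a) < eps for x in xs)


outcomes = [check(3.0, 0.01), check(-2.0, 1.0), check(0.0, 5.0)]

print(outcomes)
```

The same idea, with messier bounds, handles any fixed polynomial, though the product and sum rules above make that unnecessary.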

Exercises

- Prove that if a in D is not a point of accumulation of D, then any function $f : D \to \mathbf{R}$ is automatically continuous at a.

- Prove that if f : (c, a] → R is a function, where (c, a] is the set of x with c < x ≤ a, then f is continuous at a if and only if $\lim_{x\to a^-} f(x) = f(a)$.

- Let f : D → R be a function. Prove that f(x) is continuous at a if and only if for every sequence (xn) in D which converges to a, the sequence f(xn) also converges to f(a).

[ Answer for 3. (1) Suppose f is continuous at a and (xn) → a. For any ε>0, pick δ>0 such that when |x-a|<δ and x in D, |f(x)-f(a)|<ε. Now pick N such that when n>N, we have |xn-a|<δ. Hence when n>N, |f(xn)-f(a)|<ε. (2) Suppose f is not continuous at a. Take the negation of the definition of continuity: there exists ε>0 such that for any δ>0, we can find x in D, such that |x-a|<δ but |f(x)-f(a)|≥ε. Let δ=1/n, for n=1,2,3,… and pick xn in D such that |xn-a|<1/n but |f(xn)-f(a)|≥ε. Prove that (xn) → a but f(xn) doesn’t converge to f(a). ]
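The second half of the answer can be seen on a small example. The sketch below assumes the floor function as a sample discontinuous function at a = 0 and takes xn = -1/n. Both choices are illustrative.

```python
import math


def f(x):
    # Sample function with a jump at 0 (an assumption for illustration).
    return math.floor(x)


a = 0.0
xs = [-1.0 / n for n in range(1, 1001)]  # x_n = -1/n converges to 0

# Distance from x_n to a shrinks, but |f(x_n) - f(a)| stays at 1.
distances = [abs(x - a) for x in xs]
gaps = [abs(f(x) - f(a)) for x in xs]

print(distances[-1], min(gaps))
```

Here (xn) → 0 while f(xn) = -1 for every n, so f(xn) does not converge to f(0) = 0, as the sequential criterion predicts for a discontinuity.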