Lindström–Gessel–Viennot Lemma

Let us switch gears and describe a beautiful combinatorial result. Suppose is a graph which is directed, has no cycles, and there are only finitely many paths from a vertex to another. Given sets of n vertices:

the lemma computes the “weighted sum” of non-intersecting paths from to

.

To be specific, suppose each edge of the graph has a weight

which takes values in a fixed ring. Given a directed path

from

to

let

be the product of the edges along the path

. Now write

for the sum of

over all

Next given above, we compute the determinant of:

On the other hand, consider a collection of paths where each

for some permutation

Furthermore we want the paths to be pairwise non-intersecting, i.e. no two paths contain a common vertex (not even at the endpoints). Now we can state the desired result.

Lindström–Gessel–Viennot (LGV) Lemma. We have:

over all tuples of paths

described above.

Proof of the LGV Lemma

First expand the determinant of ; each term is of the form:

Expanding each , the above term is a sum of terms of the form

summed over all n-tuples This is close to the desired result but here, the

may intersect. Hence, we wish to show that the sum over all

for which some

and

intersect, vanishes.

Let be the collection of all such paths; for each

we:

- let

be the first vertex occurring as an intersection between

and

(

), after taking a total ordering for the set of vertices;

- let

be the smallest index such that

contains

;

- let

be the largest index such that

contains

(note that

);

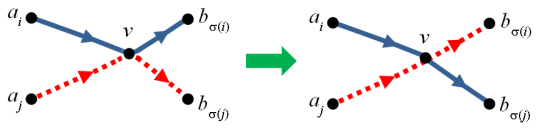

and get a triplet Now let

be the same tuple of paths but with the tails of

and

switched after

This gives a map , which is an involution since the corresponding triplet for

is still

However

and

have opposite signs. Denoting

by

we have

and so:

So the sum is zero. ♦

Jacobi-Trudi Identities

Here is our first application of the LGV lemma; all computations are in the formal ring

Theorem. Let

be the transpose of

. Then

is the determinant of either of these matrices.

where

is chosen to be

and

in the respective cases.

Note

One remembers the structure of the matrices by writing as the subscripts for the diagonal entries, then incrementing to the right. We take

for all

Observe that since

, there is no harm increasing

by appending zeros to the partition.

Proof

We can obtain the second identity from the first by applying , so let us show the first. Before we begin, observe that the Schur polynomial can be written as:

where Indeed, this follows from the proposition here.

We will use the LGV lemma: let be large; we take the following graph.

- The vertices are lattice points in

.

- The starting and ending vertices are given by:

- The edges are of one unit, directed either eastwards or southwards. The southward edges are weighted 1 while the eastward edges on

are labelled

for

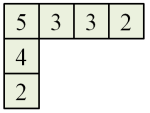

For example if , here is an example of paths connecting

Let us compute the LHS of the LGV lemma. The set of paths from to

sums up to the complete symmetric polynomial

. Thus the LHS is the desired determinant expression.

On the other hand, let us consider the collection of non-intersecting paths from to

. For a path to exist,

must be the identity. Each collection of non-intersecting paths corresponds to a reverse SSYT of shape

(i.e. the rows are weakly decreasing and columns are strictly decreasing). E.g. the above diagram gives:

Hence, the RHS in the LGV lemma equals the sum of over all reverse SSYT

of shape

This is equal to the sum over all SSYT

of shape

(we take

to be the minimum and maximum elements of the table respectively and replace each

with

thus obtaining an SSYT). Thus the sum is

♦