[ Background required: basic knowledge of linear algebra, e.g. the previous post. Updated on 6 Dec 2011: added graphs in Application 2, courtesy of wolframalpha.]

Those of you who already know inner products may roll your eyes at this point, but there's far more here than meets the eye. First, the definition:

Definition. We shall consider $\mathbb{R}^3$, which is the set of all triplets (x, y, z) of real numbers. The inner product (or scalar product) between $v = (x_1, y_1, z_1)$ and $w = (x_2, y_2, z_2)$ is defined to be:

$$v\cdot w = x_1x_2 + y_1y_2 + z_1z_2.$$

[ Note: everything we say will be equally applicable to $\mathbb{R}^n$, but it helps to keep things in perspective by looking at smaller cases. ]
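For readers who like to experiment, here is a minimal Python sketch of the definition. The function name `dot` is our own choice. Because it simply multiplies matching coordinates and adds them up, it works in $\mathbb{R}^n$ as well, in line with the note above.

```python
def dot(v, w):
    """Inner product of two vectors of the same dimension (R^3, or R^n in general)."""
    if len(v) != len(w):
        raise ValueError("vectors must have the same dimension")
    return sum(vi * wi for vi, wi in zip(v, w))

# (1, 2, 3)·(4, -5, 6) = 4 - 10 + 18
print(dot((1, 2, 3), (4, -5, 6)))  # 12
```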

The purpose of the inner product is made clear by the following theorem.

Theorem 1. Let A, B be represented by points $(x_1, y_1, z_1)$ and $(x_2, y_2, z_2)$ respectively. If O is the origin, then $(x_1, y_1, z_1)\cdot(x_2, y_2, z_2)$ is the value $|OA|\,|OB|\cos\theta$, where |l| denotes the length of a line segment l and θ is the angle between OA and OB.

Proof. It's really simpler than you might think: just follow these baby steps.

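- Check that the dot product is symmetric (i.e. v·w = w·v for any v, w in $\mathbb{R}^3$).

- Check that the dot product is linear in each term (v·(w + x) = (v·w) + (v·x) and v·(cw) = c(v·w) for any real c and v, w, x in $\mathbb{R}^3$).

- From the above properties, show that 2v·w = v·v + w·w – (v–w)·(v–w).

- Take v and w to be the coordinates of A and B. By Pythagoras, v·v = |OA|², w·w = |OB|² and (v–w)·(v–w) = |AB|², so the RHS is $|OA|^2 + |OB|^2 - |AB|^2$. Now use the cosine law $|AB|^2 = |OA|^2 + |OB|^2 - 2|OA|\,|OB|\cos\theta$. ♦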
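The proof is purely algebraic, but a numerical spot check can be reassuring. The sketch below (our own illustration, not part of the proof) restricts to points in the xy-plane so that the angle θ can be computed independently from polar angles. It then compares both sides of Theorem 1, as well as the identity from the third step.

```python
import math
import random

def dot(v, w):
    return sum(a * b for a, b in zip(v, w))

def polar_vector(r, phi):
    """Point at distance r from O in the xy-plane, at angle phi from the x-axis."""
    return (r * math.cos(phi), r * math.sin(phi), 0.0)

random.seed(1)
for _ in range(1000):
    r1, r2 = random.uniform(0.1, 5), random.uniform(0.1, 5)
    phi1, phi2 = random.uniform(0, 2 * math.pi), random.uniform(0, 2 * math.pi)
    v, w = polar_vector(r1, phi1), polar_vector(r2, phi2)
    # |phi1 - phi2| may exceed π, but cos is even and 2π-periodic,
    # so cos(theta) still equals the cosine of the angle AOB
    theta = abs(phi1 - phi2)
    assert math.isclose(dot(v, w), r1 * r2 * math.cos(theta), abs_tol=1e-9)
    # The identity 2 v·w = v·v + w·w - (v-w)·(v-w) from the proof
    d = tuple(a - b for a, b in zip(v, w))
    assert math.isclose(2 * dot(v, w), dot(v, v) + dot(w, w) - dot(d, d), abs_tol=1e-9)
print("Theorem 1 checks passed")
```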

Next we wish to generalise the concept of the standard basis e1 = (1,0,0), e2 = (0,1,0), e3 = (0,0,1). The key property we shall need is that they are mutually perpendicular and of length 1. From now on, we shall sometimes call the elements of $\mathbb{R}^3$ vectors. Don't worry too much if you're not familiar with this term.

Definitions. Thanks to the above theorem, the following definitions make sense.

- The length of a vector v is |v| = √(v·v).

- A unit vector is a vector of length 1.

- Two vectors v and w are said to be orthogonal if their inner product v·w is 0.

- A set of vectors is said to be orthonormal if (i) they are all unit vectors, and (ii) any two of them are orthogonal.

- A set of three orthonormal vectors in $\mathbb{R}^3$ is called an orthonormal basis.

[ In general, any orthonormal set can be extended to an orthonormal basis, and any orthonormal basis has exactly 3 elements. We won’t prove this, but geometrically it should be obvious. Hopefully we’ll get around to abstract linear algebra, from which this will follow quite naturally. ]

Our favourite orthonormal basis is e1 = (1,0,0), e2 = (0,1,0), e3 = (0,0,1).
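The definitions above translate directly into code. This is a sketch using floating-point tolerances; the `tol` parameter is our own addition, since rounding means lengths rarely come out as exactly 1.

```python
import math
from itertools import combinations

def dot(v, w):
    return sum(a * b for a, b in zip(v, w))

def length(v):
    """|v| = sqrt(v·v)."""
    return math.sqrt(dot(v, v))

def is_orthonormal(vectors, tol=1e-9):
    """True if every vector has length 1 and every pair has inner product 0 (up to tol)."""
    unit = all(math.isclose(length(v), 1.0, abs_tol=tol) for v in vectors)
    orthogonal = all(abs(dot(v, w)) <= tol for v, w in combinations(vectors, 2))
    return unit and orthogonal

e1, e2, e3 = (1, 0, 0), (0, 1, 0), (0, 0, 1)
print(is_orthonormal([e1, e2, e3]))             # True
print(is_orthonormal([(1, 1, 0), (1, -1, 0)]))  # False: orthogonal, but not unit vectors
```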

In general, the nice thing about an orthonormal basis is that in order to express any arbitrary vector v as a linear combination v = c1e1 + c2e2 + c3e3, there’s no need to solve a system of linear equations. Instead we just take the dot product.

Theorem 2. Let {v1, v2, v3} be an orthonormal basis. Every vector w is uniquely expressible as w = c1v1 + c2v2 + c3v3, where ci is given by ci = w·vi.

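Proof. Suppose w is of the form w = c1v1 + c2v2 + c3v3. Then we apply linearity of the dot product (see the proof of Theorem 1) to get:

$$w\cdot v_i = c_1(v_1\cdot v_i) + c_2(v_2\cdot v_i) + c_3(v_3\cdot v_i).$$

Since the vi's are orthonormal, the only surviving term is $c_i(v_i\cdot v_i) = c_i$. This proves the last statement, as well as uniqueness. To prove existence, let ci = w·vi and x = c1v1 + c2v2 + c3v3. The computation above, applied to x, gives x·vi = ci, so for i = 1, 2, 3 we have:

$$(w - x)\cdot v_i = w\cdot v_i - x\cdot v_i = c_i - c_i = 0,$$

so w – x is orthogonal to all three vectors {v1, v2, v3}. If w – x were nonzero, then {v1, v2, v3, (w – x)/|w – x|} would be an orthonormal set of four vectors, contradicting the fact that we cannot have more than 3 vectors in an orthonormal set in $\mathbb{R}^3$. Hence w = x. ♦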

[ Geometrically, the idea is to project w onto each of {v1, v2, v3} in turn to get the coefficients. ]
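Theorem 2 says the coefficients are just dot products, with no linear system to solve. A minimal sketch, assuming the basis is already orthonormal; the basis used here is a hypothetical one obtained by rotating e1 and e2 about the z-axis, and the helper names are our own.

```python
import math

def dot(v, w):
    return sum(a * b for a, b in zip(v, w))

def coefficients(w, basis):
    """Coefficients c_i = w·v_i of w with respect to an orthonormal basis (Theorem 2)."""
    return [dot(w, v) for v in basis]

def combine(coeffs, basis):
    """Return c_1 v_1 + c_2 v_2 + c_3 v_3, computed coordinate by coordinate."""
    return tuple(sum(c * v[k] for c, v in zip(coeffs, basis)) for k in range(len(basis[0])))

# An orthonormal basis: e1, e2 rotated about the z-axis by angle t, together with e3
t = 0.7
basis = [(math.cos(t), math.sin(t), 0.0), (-math.sin(t), math.cos(t), 0.0), (0.0, 0.0, 1.0)]
w = (1.0, 2.0, -3.0)

c = coefficients(w, basis)
print(c)
print(combine(c, basis))  # recovers (1.0, 2.0, -3.0) up to rounding
```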

For example, consider the three vectors (1, 0, -2), (2, 2, 1), (4, -5, 2). They are mutually orthogonal but clearly not unit vectors. To fix that, we replace each vector v by an appropriate scalar multiple: v/|v|, so we get:

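$$v_1 = \frac{1}{\sqrt{5}}(1, 0, -2),\quad v_2 = \frac{1}{3}(2, 2, 1),\quad v_3 = \frac{1}{3\sqrt{5}}(4, -5, 2),$$

which is a bona fide orthonormal set. Now if we wish to write w = (1, 2, -3) as c1v1 + c2v2 + c3v3, we get:

$$c_1 = w\cdot v_1 = \frac{7}{\sqrt{5}},\quad c_2 = w\cdot v_2 = 1,\quad c_3 = w\cdot v_3 = -\frac{4}{\sqrt{5}},$$

so that $w = \frac{7}{\sqrt{5}}v_1 + v_2 - \frac{4}{\sqrt{5}}v_3$.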
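The worked example can be checked numerically. This sketch normalises the three vectors, takes dot products as in Theorem 2, compares them with the exact values 7/√5, 1 and -4/√5, and then rebuilds w from the coefficients.

```python
import math

def dot(v, w):
    return sum(a * b for a, b in zip(v, w))

def normalize(v):
    """Return v / |v|."""
    n = math.sqrt(dot(v, v))
    return tuple(x / n for x in v)

raw = [(1, 0, -2), (2, 2, 1), (4, -5, 2)]
# The raw vectors are mutually orthogonal (exact integer arithmetic)
assert all(dot(p, q) == 0 for i, p in enumerate(raw) for q in raw[i + 1:])
basis = [normalize(v) for v in raw]
w = (1, 2, -3)

c = [dot(w, v) for v in basis]  # c_i = w·v_i (Theorem 2)
expected = [7 / math.sqrt(5), 1.0, -4 / math.sqrt(5)]
print(all(math.isclose(x, y) for x, y in zip(c, expected)))  # True

# Rebuild w from the coefficients as a check
rebuilt = tuple(sum(ci * v[k] for ci, v in zip(c, basis)) for k in range(3))
print(rebuilt)  # (1, 2, -3) up to floating-point rounding
```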

Application 1: Cauchy-Schwarz Inequality

Square both sides of Theorem 1 and use $\cos^2\theta \le 1$ to obtain, for any two vectors v and w:

$$(v\cdot w)^2 = |v|^2\,|w|^2\cos^2\theta \le |v|^2\,|w|^2.$$

Writing v = (x, y, z) and w = (a, b, c), we obtain the all-important Cauchy-Schwarz inequality:

Cauchy-Schwarz Inequality. If x, y, z, a, b, c are real numbers, then:

$$(ax + by + cz)^2 \le (a^2 + b^2 + c^2)(x^2 + y^2 + z^2).$$

Equality holds if and only if (a, b, c) and (x, y, z) are scalar multiples of each other.
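A random search is no proof, but it illustrates both the inequality and the equality case. In the sketch below, `cs_gap` (a hypothetical helper name) computes the right-hand side minus the left-hand side. It should never be negative beyond rounding error, and it vanishes for scalar multiples.

```python
import random

def cs_gap(v, w):
    """(a^2+b^2+c^2)(x^2+y^2+z^2) - (ax+by+cz)^2, which Cauchy-Schwarz says is >= 0."""
    d = sum(p * q for p, q in zip(v, w))
    return sum(p * p for p in v) * sum(q * q for q in w) - d * d

random.seed(0)
worst = min(
    cs_gap([random.uniform(-10, 10) for _ in range(3)],
           [random.uniform(-10, 10) for _ in range(3)])
    for _ in range(10_000)
)
print(worst >= -1e-9)                   # True: no counterexample found
print(cs_gap((1, -2, 3), (-2, 4, -6)))  # 0: the vectors are scalar multiples
```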

Example 1.1. If a = b = c = 1/3, then we get the (root mean square) ≥ (arithmetic mean) inequality: for positive real x, y, z, we have

$$\sqrt{\frac{x^2 + y^2 + z^2}{3}} \ge \frac{x + y + z}{3}.$$

Example 1.2. Given that a, b, c are real numbers such that a + 2b + 3c = 1, find the minimum possible value of $a^2 + 2b^2 + 3c^2$.

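Solution. Skilfully choose the right coefficients in the Cauchy-Schwarz inequality:

$$1 = (a + 2b + 3c)^2 = \left(1\cdot a + \sqrt{2}\cdot\sqrt{2}\,b + \sqrt{3}\cdot\sqrt{3}\,c\right)^2 \le (1 + 2 + 3)(a^2 + 2b^2 + 3c^2)$$

to get our desired result: $a^2 + 2b^2 + 3c^2 \ge \frac{1}{6}$. And equality holds if and only if (a, √2b, √3c) is a scalar multiple of (1, √2, √3), i.e. (a, b, c) is a scalar multiple of (1, 1, 1). Combined with a + 2b + 3c = 1, this means

$$a = b = c = \frac{1}{6}.$$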
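To see that 1/6 really is the minimum, one can sample random points on the plane a + 2b + 3c = 1 and check that none of them does better. A sketch; the sampling range and sample count are arbitrary choices of ours.

```python
import random

def objective(a, b, c):
    return a * a + 2 * b * b + 3 * c * c

random.seed(0)
best = float("inf")
for _ in range(100_000):
    # Pick b and c freely, then solve a + 2b + 3c = 1 for a
    b, c = random.uniform(-2, 2), random.uniform(-2, 2)
    a = 1 - 2 * b - 3 * c
    best = min(best, objective(a, b, c))

print(best >= 1 / 6 - 1e-12)          # True: nothing beats the bound
print(objective(1 / 6, 1 / 6, 1 / 6))  # approximately 1/6, attained at a = b = c = 1/6
```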

Example 1.3. Given that a, b, c, d are real numbers such that a + b + c + d = 7 and $a^2 + b^2 + c^2 + d^2 = 13$, find the maximum and minimum possible values of d.

Hint: compare the sums a + b + c and $a^2 + b^2 + c^2$ using the Cauchy-Schwarz inequality, and express the result in terms of d.

Application 2: Fourier Analysis

Warning: this section is lacking in rigour, since our objective is to convey the intuition behind it. It's also rated advanced: it's significantly harder than the preceding text and involves quite a bit of calculus.

A common problem in acoustic theory is to analyse auditory waveforms. We can treat such a waveform as a periodic function $f:\mathbb{R}\to\mathbb{R}$, and for convenience we will take the period to be 2π. Now the most common functions with period 2π are:

- constant function f(x) = c;

- trigonometric functions f(x) = sin(mx) and cos(mx), m = 1, 2, … ;

It turns out any sufficiently "nice" periodic function can be approximated by these functions, i.e.

$$f(x) \approx a_0 + \sum_{m=1}^{\infty}\big(a_m\cos(mx) + b_m\sin(mx)\big).$$

This is called the Fourier decomposition of f. The terms with m = 1 have period 2π and oscillate at the base frequency of the waveform, while the terms with m = 2, 3, … have periods π, 2π/3, … and oscillate at integer multiples of the base frequency; these are the harmonics. In the Fourier decomposition, one can approximate f(x) by dropping the higher harmonics, just like we can approximate a real number by taking only a certain number of decimal places.

So how does one compute the coefficients $a_m$ and $b_m$? For that, we consider the simple case where f is a linear combination of sin(x), sin(2x), sin(3x), i.e. we assume:

$$f(x) = a\sin(x) + b\sin(2x) + c\sin(3x),$$

where $a, b, c \in \mathbb{R}$.

Let V be the set of all functions of this form. We can think of V as a vector space, similar to $\mathbb{R}^3$, via the following bijection:

$$a\sin(x) + b\sin(2x) + c\sin(3x) \;\longleftrightarrow\; (a, b, c).$$

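So given just the waveform of f, how do we obtain a, b and c? The answer is surprisingly simple: if we take the inner product in V via:

$$\langle f, g\rangle = \int_{-\pi}^{\pi} f(x)\,g(x)\,dx,$$

then the functions sin(x), sin(2x), sin(3x) are orthogonal! This can be easily verified as follows: for distinct positive integers m and n, we have

$$\int_{-\pi}^{\pi}\sin(mx)\sin(nx)\,dx = \frac{1}{2}\int_{-\pi}^{\pi}\big[\cos((m-n)x) - \cos((m+n)x)\big]\,dx = 0,$$

since m – n and m + n are nonzero integers, so each cosine integrates to zero over a full period.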

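However, they're not quite orthonormal because they're not unit vectors. Specifically, for each positive integer m we have:

$$\langle \sin(mx), \sin(mx)\rangle = \int_{-\pi}^{\pi}\sin^2(mx)\,dx = \pi.$$

In summary, we see that

$$\frac{\sin(x)}{\sqrt{\pi}},\quad \frac{\sin(2x)}{\sqrt{\pi}},\quad \frac{\sin(3x)}{\sqrt{\pi}}$$

form an orthonormal basis of V, under the above inner product.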
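The orthogonality relations can also be checked numerically. The sketch below approximates the inner product with the midpoint rule (our choice of quadrature; for these low-frequency trigonometric products over a full period it is accurate up to rounding) and builds the 3×3 table of inner products.

```python
import math

def inner(f, g, n=2000):
    """Approximate <f, g> = ∫_{-π}^{π} f(x) g(x) dx with the midpoint rule on n subintervals."""
    h = 2 * math.pi / n
    return h * sum(f(-math.pi + (k + 0.5) * h) * g(-math.pi + (k + 0.5) * h) for k in range(n))

def sin_m(m):
    """The function x -> sin(m x)."""
    return lambda x: math.sin(m * x)

gram = [[inner(sin_m(m), sin_m(n)) for n in (1, 2, 3)] for m in (1, 2, 3)]
for i in range(3):
    for j in range(3):
        expected = math.pi if i == j else 0.0
        assert abs(gram[i][j] - expected) < 1e-9
print("sin(x), sin(2x), sin(3x) are orthogonal, each with <f, f> = π")
```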

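Now given any function f in V, we can recover the values a, b and c by taking the inner product. By Theorem 2, the coefficient of sin(x)/√π is ⟨f, sin(x)/√π⟩ = √π·a, and similarly for b and c, so:

$$a = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\sin(x)\,dx,\quad b = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\sin(2x)\,dx,\quad c = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\sin(3x)\,dx.$$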
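Here is the recovery of a, b and c in code, using a midpoint-rule approximation of the integral. The particular values a = 2, b = -1, c = 0.5 are made up for illustration.

```python
import math

def inner(f, g, n=2000):
    """Midpoint-rule approximation of ∫_{-π}^{π} f(x) g(x) dx."""
    h = 2 * math.pi / n
    xs = [-math.pi + (k + 0.5) * h for k in range(n)]
    return h * sum(f(x) * g(x) for x in xs)

# A hypothetical element of V with a = 2, b = -1, c = 0.5
a, b, c = 2.0, -1.0, 0.5

def f(x):
    return a * math.sin(x) + b * math.sin(2 * x) + c * math.sin(3 * x)

# Divide by π because <sin(mx), sin(mx)> = π
recovered = [inner(f, lambda x, m=m: math.sin(m * x)) / math.pi for m in (1, 2, 3)]
print([round(r, 9) for r in recovered])  # [2.0, -1.0, 0.5]
```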

Main Theorem of Fourier Analysis

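Suppose f is a 2π-periodic function such that f and df/dx are both piecewise continuous. [ A function g is piecewise continuous if it is continuous at all but finitely many points in each period, and the one-sided limits $\lim_{x\to a^-} g(x)$ and $\lim_{x\to a^+} g(x)$ both exist for all $a\in\mathbb{R}$. ] Then we can approximate f as a linear combination:

$$f(x) \sim a_0 + \sum_{n=1}^{\infty}\big(a_n\cos(nx) + b_n\sin(nx)\big),$$

where $a_0 = \frac{1}{2\pi}\int_{-\pi}^{\pi} f(x)\,dx$, and for n = 1, 2, 3, …, we have

$$a_n = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\cos(nx)\,dx,\qquad b_n = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\sin(nx)\,dx.$$

The above approximation means that for any real a, the RHS evaluated at x = a converges to

$$\frac{1}{2}\left(\lim_{x\to a^-} f(x) + \lim_{x\to a^+} f(x)\right).$$

In particular, if f is continuous at x = a, then the RHS converges to f(a) at x = a.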
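The formulas for $a_0$, $a_n$ and $b_n$ can be evaluated numerically for any such f. The sketch below uses, purely as an illustrative assumption, the sawtooth wave f(x) = x on (-π, π) extended with period 2π, whose coefficients are known to be $a_0 = a_n = 0$ and $b_n = 2(-1)^{n+1}/n$. At x = π, where f jumps from π to -π, the partial sums should land near the average of the one-sided limits, namely 0. The midpoint rule and the number of subintervals are our own choices.

```python
import math

def f(x):
    """Illustrative choice (an assumption): f(x) = x on (-π, π), extended 2π-periodically."""
    return (x + math.pi) % (2 * math.pi) - math.pi

def integrate(g, n=10_000):
    """Midpoint-rule approximation of ∫_{-π}^{π} g(x) dx."""
    h = 2 * math.pi / n
    return h * sum(g(-math.pi + (k + 0.5) * h) for k in range(n))

def fourier_coefficients(f, N):
    """Return a_0 and the lists [a_1..a_N], [b_1..b_N] from the main theorem."""
    a0 = integrate(f) / (2 * math.pi)
    a = [integrate(lambda x: f(x) * math.cos(n * x)) / math.pi for n in range(1, N + 1)]
    b = [integrate(lambda x: f(x) * math.sin(n * x)) / math.pi for n in range(1, N + 1)]
    return a0, a, b

def partial_sum(x, a0, a, b):
    """a_0 + sum over n <= N of (a_n cos(nx) + b_n sin(nx))."""
    return a0 + sum(an * math.cos(n * x) + bn * math.sin(n * x)
                    for n, (an, bn) in enumerate(zip(a, b), start=1))

a0, a, b = fourier_coefficients(f, 20)
print([round(bn, 4) for bn in b[:4]])      # compare with 2(-1)^(n+1)/n: 2, -1, 0.6667, -0.5
print(partial_sum(math.pi / 2, a0, a, b))  # approaches f(π/2) = π/2 as N grows
print(partial_sum(math.pi, a0, a, b))      # near 0 = (π + (-π))/2, the average of the one-sided limits
```

With only 20 terms the value at π/2 is still visibly off from π/2; the convergence at a point like this is slow.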

Example 2.1. Consider the function for

and repeated through the real line with a period of 2π. To compute its Fourier expansion, we have:

for any n since f(-x) = –f(x) almost everywhere (except at discrete points);

, using integration by parts.

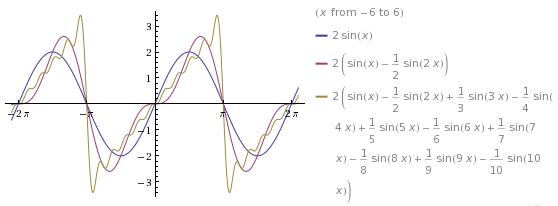

Thus we have and equality holds for

. Let’s see what the graphs of the partial sums look like.

If we substitute the value x = π/2, we obtain:

Important: at any $a\in\mathbb{R}$, both the left and right limits of f(x) at x = a must exist. So we cannot take a function like f(x) = 1/x near x = 0.

Example 2.2. Take for

and repeated with period 2π. Its Fourier expansion gives:

since f(x) = f(-x) everywhere.

.

, for n = 1, 2, … .

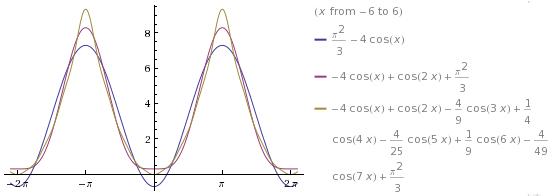

This gives . Now equality holds on the entire interval

since f(x) is continuous there. The graphs of the partial sums are as follows:

Substituting x=π gives:

.

Simplifying gives , which was proven by Euler via an entirely different method.

Example 2.3. This is a little astounding. Let for

, and again repeated with a period of 2π. The Fourier coefficients give:

.

.

.

So we can write , which holds for all

. In particular, for x = 0, we get the rather mystifying identity:

which you can verify numerically to some finite precision.