Determinant Modules

We will describe another construction for the Schur module.

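Introduce variables $z_{i,j}$ for $i \ge 1$, $j \ge 1$. For each sequence $i_1, \ldots, i_p \ge 1$ we define the polynomial $D_{i_1, \ldots, i_p}$ in the $z_{i,j}$ as the determinant of the $p \times p$ matrix whose $(r, c)$ entry is $z_{r, i_c}$: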

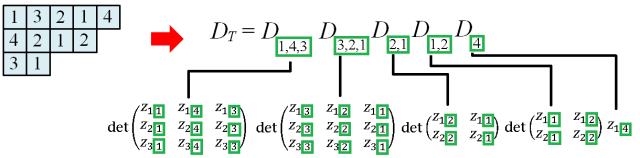

Now given a filling $T$ of shape $\lambda$, we define:

$$D_T := D_{\text{col}(T, 1)} D_{\text{col}(T, 2)} \cdots$$

where $\text{col}(T, i)$ is the sequence of entries from the $i$-th column of $T$. E.g.

Let $\mathbb{C}[z_{i,j}]$ be the ring of polynomials in the $z_{i,j}$ with complex coefficients. Since we usually take entries of $T$ from $[n]$, we only need to consider the subring $\mathbb{C}[z_{i,1}, \ldots, z_{i,n}]$.
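The definition of $D_T$ can be sketched in code. The following is a minimal illustration, assuming that $D_{i_1, \ldots, i_p}$ is the determinant of the $p \times p$ matrix with $(r, c)$ entry $z_{r, i_c}$; the function names `det`, `D_seq`, `D_filling` and the numeric values chosen for the variables are hypothetical and only serve the example.

```python
from itertools import permutations

def det(m):
    """Determinant via the Leibniz formula; adequate for the small matrices here."""
    n = len(m)
    total = 0
    for perm in permutations(range(n)):
        # Sign of the permutation: (-1)^(number of inversions).
        inversions = sum(perm[a] > perm[b] for a in range(n) for b in range(a + 1, n))
        term = -1 if inversions % 2 else 1
        for r in range(n):
            term *= m[r][perm[r]]
        total += term
    return total

def D_seq(z, seq):
    """D_{i_1,...,i_p}: determinant of the p x p matrix with (r, c) entry z(r, i_c)."""
    p = len(seq)
    return det([[z(r, i) for i in seq] for r in range(1, p + 1)])

def D_filling(z, columns):
    """D_T as the product of D_{col(T, i)} over the columns of T."""
    result = 1
    for col in columns:
        result *= D_seq(z, col)
    return result

# Hypothetical integer values substituted for the variables z_{i,j}.
def z(i, j):
    return i * i + 3 * j + i * j * j

# A filling of shape (2, 1): first column (1, 2), second column (3).
T = [(1, 2), (3,)]
value = D_filling(z, T)
```

Here a filling is stored as a list of its columns, which matches the way $D_T$ is built column by column.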

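Let $\mu = \overline{\lambda}$ be the transpose of $\lambda$. Recall from earlier that any non-zero $GL_n\mathbb{C}$-equivariant map

$$\bigotimes_j \text{Alt}^{\mu_j} \mathbb{C}^n \longrightarrow \bigotimes_i \text{Sym}^{\lambda_i} \mathbb{C}^n$$

must induce an isomorphism between the unique copies of $V(\lambda)$ in the source and target spaces. Given any filling $T$ of shape $\lambda$, we let $e_T^\circ$ be the element of $\bigotimes_j \text{Alt}^{\mu_j} \mathbb{C}^n$ obtained by replacing each entry $k$ in $T$ by $e_k$, then taking the wedge of elements in each column, followed by the tensor product across columns:

$$e_T^\circ := \bigotimes_j \left( e_{T(1,j)} \wedge e_{T(2,j)} \wedge \cdots \wedge e_{T(\mu_j, j)} \right),$$

where $T(i, j)$ denotes the entry of $T$ in row $i$ and column $j$.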

Note that the image of $e_T^\circ$ in $F(V)$ is precisely $e_T$ as defined in the last article.

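Definition. We take the map

$$\bigotimes_j \text{Alt}^{\mu_j} \mathbb{C}^n \longrightarrow \bigotimes_i \text{Sym}^{\lambda_i} \mathbb{C}^n, \quad e_T^\circ \mapsto D_T,$$

where $z_{i,j}$ belongs to component $\text{Sym}^{\lambda_i}$.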

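E.g. in our example above, $D_T$ is homogeneous in $z_{1,j}$ of degree 5, $z_{2,j}$ of degree 4 and $z_{3,j}$ of degree 3. We let $GL_n\mathbb{C}$ act on $\mathbb{C}[z_{i,j}]$ via:

$$g = (g_{i,j}) : z_{i,j} \mapsto \sum_k z_{i,k}\, g_{k,j}.$$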

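Thus if we fix $i$ and consider the variables $\mathbf{z}_i := (z_{i,1}, \ldots, z_{i,n})$ as a row vector, then $g : \mathbf{z}_i \mapsto \mathbf{z}_i g$. From another point of view, if we take $z_{i,1}, \ldots, z_{i,n}$ as a basis, then the action is represented by the matrix $g$, since it takes the standard basis to the column vectors of $g$.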

Proposition. The map is $GL_n\mathbb{C}$-equivariant.

Proof

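The element $g = (g_{i,j})$ takes $e_i \mapsto \sum_j g_{j,i} e_j$ by taking the column vectors of $g$; so

$$e_T^\circ \mapsto \sum_{j_1, \ldots, j_d} g_{j_1, i_1} g_{j_2, i_2} \cdots g_{j_d, i_d}\, e_{T'}^\circ,$$

where $T'$ is the filling obtained from $T$ by replacing its entries $i_1, \ldots, i_d$ with $j_1, \ldots, j_d$ correspondingly.

On the other hand, the determinant $D_{i_1, \ldots, i_p}$ gets mapped to:

$$\det \left( \sum_k z_{r,k}\, g_{k, i_c} \right)_{1 \le r, c \le p}$$

which is $\sum_{j_1, \ldots, j_p} g_{j_1, i_1} \cdots g_{j_p, i_p}\, D_{j_1, \ldots, j_p}$, by multilinearity of the determinant in its columns. ♦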
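The determinant computation in the proof can be checked numerically. The sketch below assumes the action substitutes $z_{i,j} \mapsto \sum_k z_{i,k} g_{k,j}$ and that $D_{i_1, \ldots, i_p}$ is the determinant of the matrix with $(r, c)$ entry $z_{r, i_c}$; the helper names `act` and `expansion`, and the random integer matrices, are hypothetical. The identity is polynomial in the entries of $g$, so the check does not need $g$ to be invertible.

```python
from itertools import permutations, product
import random

def det(m):
    """Determinant via the Leibniz formula; adequate for small matrices."""
    n = len(m)
    total = 0
    for perm in permutations(range(n)):
        inversions = sum(perm[a] > perm[b] for a in range(n) for b in range(a + 1, n))
        term = -1 if inversions % 2 else 1
        for r in range(n):
            term *= m[r][perm[r]]
        total += term
    return total

def D_seq(z, seq):
    """D_{i_1,...,i_p} with z stored as a matrix: z[r-1][j-1] holds z_{r,j}."""
    p = len(seq)
    return det([[z[r][i - 1] for i in seq] for r in range(p)])

def act(z, g):
    """Substitute z_{i,j} -> sum_k z_{i,k} g_{k,j}, i.e. form the matrix product z * g."""
    n = len(g)
    return [[sum(row[k] * g[k][j] for k in range(n)) for j in range(n)] for row in z]

def expansion(z, g, seq):
    """Sum over (j_1,...,j_p) of g_{j_1,i_1} ... g_{j_p,i_p} * D_{j_1,...,j_p}."""
    n = len(g)
    total = 0
    for js in product(range(1, n + 1), repeat=len(seq)):
        coeff = 1
        for j, i in zip(js, seq):
            coeff *= g[j - 1][i - 1]
        total += coeff * D_seq(z, js)
    return total

random.seed(0)
n = 3
z = [[random.randint(-5, 5) for _ in range(n)] for _ in range(n)]
g = [[random.randint(-5, 5) for _ in range(n)] for _ in range(n)]

seq = (1, 3)
lhs = D_seq(act(z, g), seq)   # D_{1,3} after applying g to the variables
rhs = expansion(z, g, seq)    # the claimed linear combination of the D_{j_1,j_2}
print(lhs == rhs)
```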

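Since $\bigotimes_j \text{Alt}^{\mu_j} \mathbb{C}^n$ contains exactly one copy of $V(\lambda)$, it has a unique $GL_n\mathbb{C}$-submodule $Q$ such that the quotient is isomorphic to $V(\lambda)$. The resulting quotient is thus identical to the Schur module $F(V)$, and the above map factors through

$$F(V) \longrightarrow \bigotimes_i \text{Sym}^{\lambda_i} \mathbb{C}^n, \quad e_T \mapsto D_T.$$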

Now we can apply results from the last article:

Corollary 1. The polynomials $D_T$ satisfy the following:

$D_T = 0$ if $T$ has two identical entries in the same column.

$D_T + D_{T'} = 0$ if $T'$ is obtained from $T$ by swapping two entries in the same column.

$D_T = \sum_S D_S$, where $S$ runs over the set of all fillings obtained from $T$ by swapping a fixed set of $k$ entries in column $j'$ with arbitrary sets of $k$ entries in column $j$ (for fixed $j < j'$) while preserving the order.

Proof

Indeed, the above hold when we replace $D_T$ by $e_T$. Now apply the above linear map. ♦
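The three relations of corollary 1 can be tested on a small filling. The following is a sketch under the assumption that $D_T$ is the product over columns of the determinants with $(r, c)$ entry $z_{r, i_c}$; the helper `exchanges`, the filling and the integer values substituted for the $z_{r,i}$ are all hypothetical choices for illustration.

```python
from itertools import combinations, permutations

def det(m):
    """Determinant via the Leibniz formula; adequate for small matrices."""
    n = len(m)
    total = 0
    for perm in permutations(range(n)):
        inversions = sum(perm[a] > perm[b] for a in range(n) for b in range(a + 1, n))
        term = -1 if inversions % 2 else 1
        for r in range(n):
            term *= m[r][perm[r]]
        total += term
    return total

def D_filling(z, columns):
    """D_T: product over columns of the determinant with (r, c) entry z[r][i_c - 1]."""
    result = 1
    for col in columns:
        p = len(col)
        result *= det([[z[r][i - 1] for i in col] for r in range(p)])
    return result

def exchanges(columns, j, jp, fixed):
    """Yield the fillings S of the third relation.

    The entries at positions `fixed` of column jp are swapped with the entries
    at each k-subset of positions of column j, matched in increasing order.
    """
    k = len(fixed)
    for chosen in combinations(range(len(columns[j])), k):
        new = [list(col) for col in columns]
        for p, q in zip(chosen, fixed):
            new[j][p], new[jp][q] = columns[jp][q], columns[j][p]
        yield new

# Hypothetical integer values for z_{r,i}, with 1 <= r <= 3 and 1 <= i <= 5.
z = [[2, -1, 3, 5, 4],
     [1, 4, -2, 7, 0],
     [6, 3, 1, -3, 2]]

T = [[1, 2, 3], [4, 5]]  # columns of a filling of shape (2, 2, 1)
lhs = D_filling(z, T)
rhs = sum(D_filling(z, S) for S in exchanges(T, 0, 1, fixed=(0,)))
print(lhs == rhs)
```

Passing `fixed=(0, 1)` or `fixed=(1,)` instead exercises the same relation with a different fixed set of entries in the right-hand column.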

Corollary 2. The set of $D_T$, for all SSYT $T$ with shape $\lambda$ and entries in $[n]$, is linearly independent over $\mathbb{C}$.

Proof

Indeed, the set of these $e_T$ is linearly independent over $\mathbb{C}$ and the above map is injective, being a non-zero map out of the irreducible module $F(V)$. ♦

Example 1.

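Consider any bijective filling $T$ for $\lambda = (2, 1)$, say with entries $a, b$ in the first column and $c$ in the second. Writing out the third relation in corollary 1 gives:

$$\det\begin{pmatrix} z_{1,a} & z_{1,b} \\ z_{2,a} & z_{2,b} \end{pmatrix} z_{1,c} = \det\begin{pmatrix} z_{1,c} & z_{1,b} \\ z_{2,c} & z_{2,b} \end{pmatrix} z_{1,a} + \det\begin{pmatrix} z_{1,a} & z_{1,c} \\ z_{2,a} & z_{2,c} \end{pmatrix} z_{1,b}.$$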

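More generally, if $\lambda$ satisfies $\overline{\lambda}_j = 2$ and $\overline{\lambda}_{j'} = 1$ (i.e. columns $j$ and $j'$ have lengths 2 and 1), the corresponding third relation is obtained by multiplying the above by a polynomial on both sides.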

Example 2: Sylvester’s Identity

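Take the two-column SYT $T$ obtained by writing $1, \ldots, n$ in the left column and $n+1, \ldots, 2n$ in the right. Now $D_T = D_{1, \ldots, n} D_{n+1, \ldots, 2n}$ is the product:

$$D_T = \det \underbrace{\begin{pmatrix} z_{1,1} & \cdots & z_{1,n} \\ \vdots & \ddots & \vdots \\ z_{n,1} & \cdots & z_{n,n} \end{pmatrix}}_{M} \cdot \det \underbrace{\begin{pmatrix} z_{1,n+1} & \cdots & z_{1,2n} \\ \vdots & \ddots & \vdots \\ z_{n,n+1} & \cdots & z_{n,2n} \end{pmatrix}}_{N}.$$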

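In the sum $D_T = \sum_S D_S$, each summand is of the form $D_S = \det M' \det N'$, where matrices $M'$, $N'$ are obtained from $M$, $N$ respectively by swapping a fixed set of $k$ columns in $N$ with arbitrary sets of $k$ columns in $M$ while preserving the column order. E.g. for $n = 3$ and $k = 2$, picking the first two columns of $N$ gives:

$$D_{1,2,3}\, D_{4,5,6} = D_{4,5,3}\, D_{1,2,6} + D_{4,2,5}\, D_{1,3,6} + D_{1,4,5}\, D_{2,3,6}.$$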
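The column-swapping description of the summands can be checked numerically for $n = 3$. The sketch below stores each matrix as a list of its columns and sums $\det M' \det N'$ over all choices of columns in $M$; the function name `sylvester_rhs` and the random integer matrices are hypothetical, and nothing here is specific to the variables $z_{i,j}$.

```python
from itertools import combinations, permutations
import random

def det(m):
    """Determinant via the Leibniz formula.

    The argument may be a list of rows or a list of columns, since a matrix
    and its transpose have the same determinant.
    """
    n = len(m)
    total = 0
    for perm in permutations(range(n)):
        inversions = sum(perm[a] > perm[b] for a in range(n) for b in range(a + 1, n))
        term = -1 if inversions % 2 else 1
        for r in range(n):
            term *= m[r][perm[r]]
        total += term
    return total

def sylvester_rhs(M_cols, N_cols, fixed):
    """Sum of det(M') * det(N') over all swaps of the `fixed` columns of N
    with k-subsets of columns of M, matched in increasing order."""
    total = 0
    for chosen in combinations(range(len(M_cols)), len(fixed)):
        M2, N2 = list(M_cols), list(N_cols)
        for p, q in zip(chosen, fixed):
            M2[p], N2[q] = N_cols[q], M_cols[p]
        total += det(M2) * det(N2)
    return total

random.seed(1)
n = 3
M_cols = [[random.randint(-9, 9) for _ in range(n)] for _ in range(n)]
N_cols = [[random.randint(-9, 9) for _ in range(n)] for _ in range(n)]

lhs = det(M_cols) * det(N_cols)
rhs = sylvester_rhs(M_cols, N_cols, fixed=(0, 1))  # first two columns of N, so k = 2
print(lhs == rhs)
```

With `fixed=(0, 1, 2)` the sum has a single term, in which $M$ and $N$ simply trade places.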