For today’s post, we shall first talk about the Catalan numbers. The question we seek to answer is the following.

Let n be a positive integer. Given a non-associative operation *, find the number of ways to bracket the expression

while preserving the order of the variables. For example, if n = 4, we have 5 possible expressions:

w*(x*(y*z)), w*((x*y)*z), (w*x)*(y*z), (w*(x*y))*z, ((w*x)*y)*z.

Write for the number of such expressions; the slightly shifted index is so that we get a nice expression in

. Using the technique of divide-and-conquer, it is not hard to obtain a recurrence relation in the sequence. Indeed, if we pick an expression with n+1 variables, it must be of the form: (expr1) * (expr2), where the two expressions have (say) i and n+1-i variables respectively, where i = 1, 2, …, n. Hence we have:

,

. (*)

This enables us to easily compute until pretty large values of n, so in practice it’s already quite good. But in this case, we can go even further by obtaining an explicit formula for

. Indeed, let

. Then the coefficient of

in

is precisely the expression on the right of (*). Hence we have:

Now we apply the generalised binomial expansion on . For n > 1, the coefficient of

in its Taylor expansion is:

=

.

Multiplying the numerator and denominator by (n-1)!, a bit of algebraic manipulation gives us the final answer: . Since the

are all positive, we have:

.

Note: the above computations all hold since the power series has a positive radius of convergence, also the generalised binomial theorem holds for all :

More Appearances of Catalan Numbers.

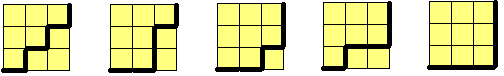

1. Consider an grid. We wish to count the number of paths from the southwest corner to the northeast along the edges of the grid which are (i) shortest, and (ii) remain below the line joining the southwest and northeast corner. E.g. when n=3, we have 5 possible paths:

The number of such paths is precisely .

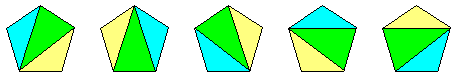

2. Fix a convex (n+2)-gon on a plane. The number of triangulations of the polygon is exactly . E.g. for n=3, we have 5 possibilities.

Formal Power Series

[ Important note: only the main definitions and properties are listed here, hopefully enough to give the reader a gist of the underlying theory. Loads of details have been omitted as including them would have spanned about ten pages or so. ]

As mentioned previously, we will also look at the case where we have power series of the form:

without explicitly substituting values in x. For instance, if , then the series doesn’t converge except at x = 0, but it still makes sense to talk about the series purely in notational form. We shall call such a power series a formal power series.

Definition. A formal power series is an expression of the form

, where each

is a real (or complex) number.

At the risk of appearing repetitive, we re-iterate that f(x) is merely a notation and we have no intention of letting x take any value. In fact, we could have just as easily expressed it as an infinite-tuple: without any loss of data. However, the power series notation appeals more to our intuition due to the definitions which are about to follow.

Definitions. Let

,

be any two formal power series. Then their sum and product are power series defined respectively as follows:

,

,

where

and

.

Let’s go back to the example of which fails to converge for any non-zero x. Despite this, we can consider f(x) as a formal power series and take its square:

. Note that we can calculate any coefficient as we please, but there doesn’t seem to be a nice closed formula for the general coefficient.

It’s not hard to show that the standard arithmetical properties hold under sum and product: for any formal power series f, g and h, the following hold:

- commutativity:

,

;

- associativity:

,

;

- distributivity:

;

- identity: the ‘0’ polynomial satisfies

, the ‘1’ polynomial satisfies

;

- no non-zero zero divisors: (#) if

, then f or g is zero (this will prove to be important later).

Also, addition has an inverse: namely, for any formal power series f, there is a unique power series g such that f+g = 0 (the zero-polynomial). We will denote this g by (-f). It’s easy to see that the each coefficient of g is the negation of the corresponding coefficient of f. Subtraction of formal power series is then defined by .

To recap, we have just defined addition, multiplication and subtraction on the set of all power series. [ For those who know abstract algebra, we’ve just defined a ring. ]

The next question is of interest:

When is there a multiplicative inverse of f(x)? In other words, for which f can we find a g for which

?

The answer may surprise you: a formal power series has a multiplicative inverse if and only if its constant term is non-zero. We’ll quickly sketch the proof: in one direction, this is easy: if then the constant terms of f and g must multiply to 1, so they are non-zero.

Conversely, suppose , where

. If

is its inverse, what would it look like? For starters, clearly

. Now suppose we have already found

; in order to compute the next coefficient, we need

, which gives:

Hence, we can define the ‘s inductively from

onwards. It follows quite easily from our definition that the resulting power series g satisfies

.

Ok, we’re done with the definition of arithmetic operations on formal power series. Our next definition is the derivative.

Definition. The formal derivative of a formal power series

is defined to be the formal power series:

.

The relationship between the arithmetic operations and the formal derivative is precisely what we expect: if f and g are formal power series, then

;

;

- if f is invertible, then

(exercise: prove it, try to make use of the first two properties and avoid dealing with the explicit coefficients).

Finally, the following definition is quite important.

Let f(x) and g(x) be formal power series, where g has constant term zero and

. The composition f(g(x)) is defined to be:

.

Note that to calculate the coefficient of

in

, one only needs to take the first n terms

.

Quick exercise : what do we require g to have no constant term? The important properties for power series composition are:

;

;

;

(chain law of derivative).

Now, armed with the theory of formal power series, we can define the usual power series formally without worrying on the convergence:

;

;

;

;

- for real r,

.

Upon this definition, we find that our usual trigonometric / exponential identities hold. So our intuition carries over:

Example 1 : for any real r and s, .

Sketch of proof : First, show that any power series satisfying is a constant multiple of exp(x) (left as an exercise; hint: find a recurrence relation among the coefficients of f). Now let

. Then its derivative satisfies, by the sum and product formulae:

.

Hence, satisfies

and g is a multiple of exp(x). Since g has no constant term, it must be 0. ♦

Example 2 : .

Sketch of proof : (using complex numbers). Write and

, where

. Use example 1. ♦

Example 3 : for any real r and s, .

Sketch of proof : let . It’s easy to show that any power series satisfying

is a constant multiple of

(left as an exercise). Hence, the derivative of f is:

so f is a constant multiple of . Since the constant term of f is zero, we have f = 0.

Exercise: prove that composition gives .

Having established all this background, one can now work out the arguments for the derivation of Catalan numbers by looking at formal power series alone. However, there’s one slight caveat we need to address: we found the quadratic equation satisfied by P and concluded that P can be obtained by the binomial expansion. While it’s true that the resulting binomial expansion does give Q such that

, how do we conclude that

?

Answer: upon completing the squares, we find that this boils down to the following deduction . In order to derive this, we simplify

. The fact that there are no non-zero zero divisors (#) then says R+S or R-S is zero.

Exercises

1. Obtain Binet’s formula for the Fibonacci sequence for

as follows.

- Define the formal power series

.

- Prove that we have

, as an equality of formal power series. Hence we get

.

- Use partial fractions to write

for some real constants a, b, r, s.

- As power series, we thus obtain

. Obtain Binet’s formula.

2. Let f be the formal power series for the sequence .

- Prove that the formal power series for the partial sums

is given by

.

- What is the formal power series for the sequence

,

?

- Express

in closed form.